Tag: technews

-

Want to trick out your gaming rig? You can get a CPU cooler with a six-inch HD screen – but it’ll cost you

If you’re willing to spend hundreds of dollars, you can buy a CPU cooler with a six-inch HD screen.

-

Your Google Pixel Watch is now a much better remote for your phone’s camera

The Pixel Watch now allows users to change between photo modes on its display, but the rollout has been confusing.

-

A decoder-only foundation model for time-series forecasting

Time-series forecasting is ubiquitous in various domains, such as retail, finance, manufacturing, healthcare and natural sciences. In retail use cases, for example, it has been observed that improving demand forecasting accuracy can meaningfully reduce inventory costs and increase revenue. Deep learning (DL) models have emerged as a popular approach for forecasting rich, multivariate, time-series data because they have proven to perform well in a variety of settings (e.g., DL models dominated the M5 competition leaderboard).

At the same time, there has been rapid progress in large foundation language models used for natural language processing (NLP) tasks, such as translation, retrieval-augmented generation, and code completion. These models are trained on massive amounts of textual data derived from a variety of sources like common crawl and open-source code that allows them to identify patterns in languages. This makes them very powerful zero-shot tools; for instance, when paired with retrieval, they can answer questions about and summarize current events.

Despite DL-based forecasters largely outperforming traditional methods and progress being made in reducing training and inference costs, they face challenges: most DL architectures require long and involved training and validation cycles before a customer can test the model on a new time-series. A foundation model for time-series forecasting, in contrast, can provide decent out-of-the-box forecasts on unseen time-series data with no additional training, enabling users to focus on refining forecasts for the actual downstream task like retail demand planning.

To that end, in “A decoder-only foundation model for time-series forecasting”, we introduce TimesFM, a single forecasting model pre-trained on a large time-series corpus of 100 billion real-world time-points. Compared to the latest large language models (LLMs), TimesFM is much smaller (200M parameters), yet we show that even at such scales, its zero-shot performance on a variety of unseen datasets of different domains and temporal granularities comes close to that of state-of-the-art supervised approaches trained explicitly on these datasets. Later this year we plan to make this model available for external customers in Google Cloud Vertex AI.

A decoder-only foundation model for time-series forecasting

LLMs are usually trained in a decoder-only fashion that involves three steps. First, text is broken down into subwords called tokens. Then, the tokens are fed into stacked causal transformer layers that produce an output corresponding to each input token (it cannot attend to future tokens). Finally, the output corresponding to the i-th token summarizes all the information from previous tokens and predicts the (i+1)-th token. During inference, the LLM generates the output one token at a time. For example, when prompted with “What is the capital of France?”, it might generate the token “The”, then condition on “What is the capital of France? The” to generate the next token “capital” and so on until it generates the complete answer: “The capital of France is Paris”.

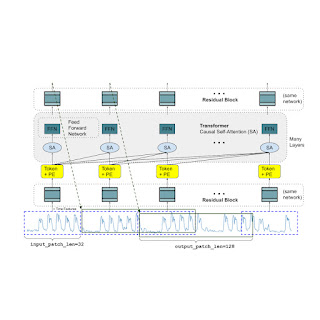

A foundation model for time-series forecasting should adapt to variable context (what we observe) and horizon (what we query the model to forecast) lengths, while having enough capacity to encode all patterns from a large pretraining dataset. Similar to LLMs, we use stacked transformer layers (self-attention and feedforward layers) as the main building blocks for the TimesFM model. In the context of time-series forecasting, we treat a patch (a group of contiguous time-points) as a token that was popularized by a recent long-horizon forecasting work. The task then is to forecast the (i+1)-th patch of time-points given the i-th output at the end of the stacked transformer layers.

However, there are several key differences from language models. Firstly, we need a multilayer perceptron block with residual connections to convert a patch of time-series into a token that can be input to the transformer layers along with positional encodings (PE). For that, we use a residual block similar to our prior work in long-horizon forecasting. Secondly, at the other end, an output token from the stacked transformer can be used to predict a longer length of subsequent time-points than the input patch length, i.e., the output patch length can be larger than the input patch length.

Consider a time-series of length 512 time-points being used to train a TimesFM model with input patch length 32 and output patch length 128. During training, the model is simultaneously trained to use the first 32 time-points to forecast the next 128 time-points, the first 64 time-points to forecast time-points 65 to 192, the first 96 time-points to forecast time-points 97 to 224, and so on. During inference, suppose the model is given a new time-series of length 256 and tasked with forecasting the next 256 time-points into the future. The model will first generate the future predictions for time-points 257 to 384, then condition on the initial 256-point input plus the generated output to generate time-points 385 to 512. On the other hand, if the output patch length in our model were equal to the input patch length of 32, then for the same task we would have to go through eight generation steps instead of just the two above. This increases the chances of errors accumulating, and in practice we see that a longer output patch length yields better performance for long-horizon forecasting.
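The arithmetic in this example can be checked with a short sketch. The helper names `training_pairs` and `generation_steps` are hypothetical and are not part of TimesFM; the sketch also assumes that only training pairs with a full-length target are kept, which the text above does not specify.

```python
def training_pairs(series_len, input_patch, output_patch):
    """Enumerate (context_end, target_start, target_end) triples.

    Time-points are 1-indexed and ranges are inclusive, matching the prose.
    Assumption: contexts grow by one input patch at a time, and pairs whose
    target would run past the end of the series are dropped.
    """
    pairs = []
    context_end = input_patch
    while context_end + output_patch <= series_len:
        pairs.append((context_end, context_end + 1, context_end + output_patch))
        context_end += input_patch
    return pairs


def generation_steps(horizon, output_patch):
    """Number of autoregressive decoding steps needed to cover the horizon."""
    return -(-horizon // output_patch)  # ceiling division


if __name__ == "__main__":
    for context_end, start, end in training_pairs(512, 32, 128)[:3]:
        print(f"first {context_end} points -> forecast points {start} to {end}")
    print("steps with output patch 128:", generation_steps(256, 128))
    print("steps with output patch 32:", generation_steps(256, 32))
```

With an output patch of 128 the 256-point horizon takes two decoding steps; with an output patch of 32 it takes eight, which is where the extra opportunity for error accumulation comes from.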

TimesFM architecture.

Pretraining data

Just like LLMs get better with more tokens, TimesFM requires a large volume of legitimate time series data to learn and improve. We have spent a great amount of time creating and assessing our training datasets, and the following is what we have found works best:

Synthetic data helps with the basics. Meaningful synthetic time-series data can be generated using statistical models or physical simulations. These basic temporal patterns can teach the model the grammar of time series forecasting.

Real-world data adds real-world flavor. We comb through available public time series datasets, and selectively put together a large corpus of 100 billion time-points. Among these datasets there are Google Trends and Wikipedia Pageviews, which track what people are interested in, and that nicely mirrors trends and patterns in many other real-world time series. This helps TimesFM understand the bigger picture and generalize better when provided with domain-specific contexts not seen during training.

Zero-shot evaluation results

We evaluate TimesFM zero-shot on data not seen during training using popular time-series benchmarks. We observe that TimesFM performs better than most statistical methods, such as ARIMA and ETS, and can match or outperform powerful DL models, such as DeepAR and PatchTST, that have been explicitly trained on the target time-series.

We used the Monash Forecasting Archive to evaluate TimesFM’s out-of-the-box performance. This archive contains tens of thousands of time-series from various domains like traffic, weather, and demand forecasting, covering frequencies ranging from a few minutes to yearly data. Following existing literature, we inspect the mean absolute error (MAE), appropriately scaled so that it can be averaged across the datasets. We see that zero-shot (ZS) TimesFM is better than most supervised approaches, including recent deep learning models. We also compare TimesFM to GPT-3.5 for forecasting using a specific prompting technique proposed by llmtime(ZS). We demonstrate that TimesFM performs better than llmtime(ZS) despite being orders of magnitude smaller.

Scaled MAE (the lower the better) of TimesFM(ZS) against other supervised and zero-shot approaches on Monash datasets.

Most of the Monash datasets are short or medium horizon, i.e., the prediction length is not too long. We also test TimesFM on popular benchmarks for long horizon forecasting against a recent state-of-the-art baseline PatchTST (and other long-horizon forecasting baselines). In the next figure, we plot the MAE on ETT datasets for the task of predicting 96 and 192 time-points into the future. The metric has been calculated on the last test window of each dataset (as done by the llmtime paper). We see that TimesFM not only surpasses the performance of llmtime(ZS) but also matches that of the supervised PatchTST model explicitly trained on the respective datasets.

Last window MAE (the lower the better) of TimesFM(ZS) against llmtime(ZS) and long-horizon forecasting baselines on ETT datasets.

Conclusion

We train a decoder-only foundation model for time-series forecasting using a large pretraining corpus of 100B real world time-points, the majority of which was search interest time-series data derived from Google Trends and pageviews from Wikipedia. We show that even a relatively small 200M parameter pretrained model that uses our TimesFM architecture displays impressive zero-shot performance on a variety of public benchmarks from different domains and granularities.

Acknowledgements

This work is the result of a collaboration between several individuals across Google Research and Google Cloud, including (in alphabetical order): Abhimanyu Das, Weihao Kong, Andrew Leach, Mike Lawrence, Alex Martin, Rajat Sen, Yang Yang and Yichen Zhou.


-

Apple says AI features are coming to your iPhone ‘later this year’ – here’s what to expect

Apple’s own generative AI is coming to your iPhone, and Tim Cook says it’ll arrive sooner rather than later.

-

Top Evaluation Metrics for RAG Failures

Image created by author using Dall-E 3

Troubleshoot LLMs and Retrieval Augmented Generation with Retrieval and Response Metrics

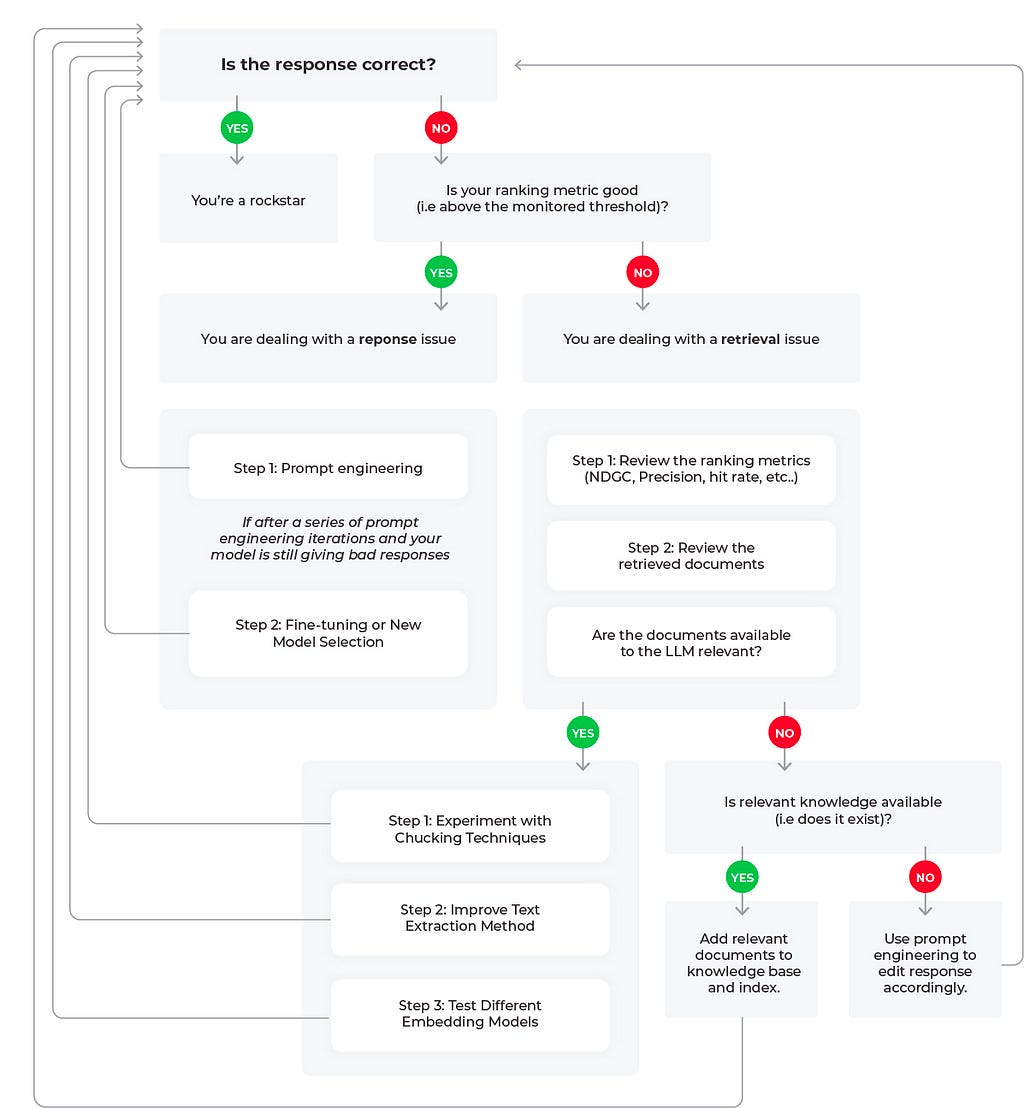

Figure 1: Root Cause Workflows for LLM RAG Applications (flowchart created by author)

If you have been experimenting with large language models (LLMs) for search and retrieval tasks, you have likely come across retrieval augmented generation (RAG) as a technique to add relevant contextual information to LLM generated responses. By connecting an LLM to private data, RAG can enable a better response by feeding relevant data in the context window.

RAG has been shown to be highly effective for complex query answering, knowledge-intensive tasks, and enhancing the precision and relevance of responses for AI models, especially in situations where standalone training data may fall short.

However, these benefits from RAG can only be reaped if you are continuously monitoring your LLM system at common failure points — most notably with response and retrieval evaluation metrics. In this piece we will go through the best workflows for troubleshooting poor retrieval and response metrics.

Troubleshooting Retrieval and Responses

It’s worth remembering that RAG works best when the required information is readily available. The question of whether relevant documents are available focuses RAG system evaluation on two critical aspects:

- Retrieval Evaluation: Assess the accuracy and relevance of the documents that were retrieved

- Response Evaluation: Measure the appropriateness of the response generated by the system when the context was provided

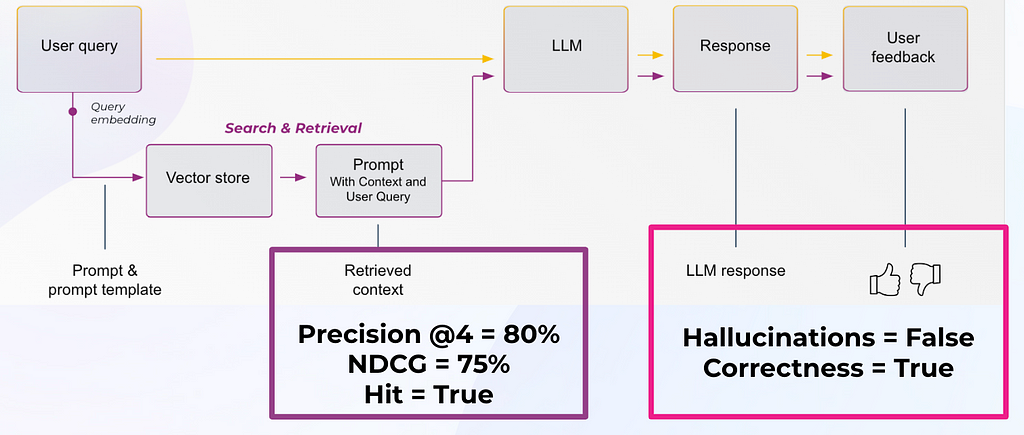

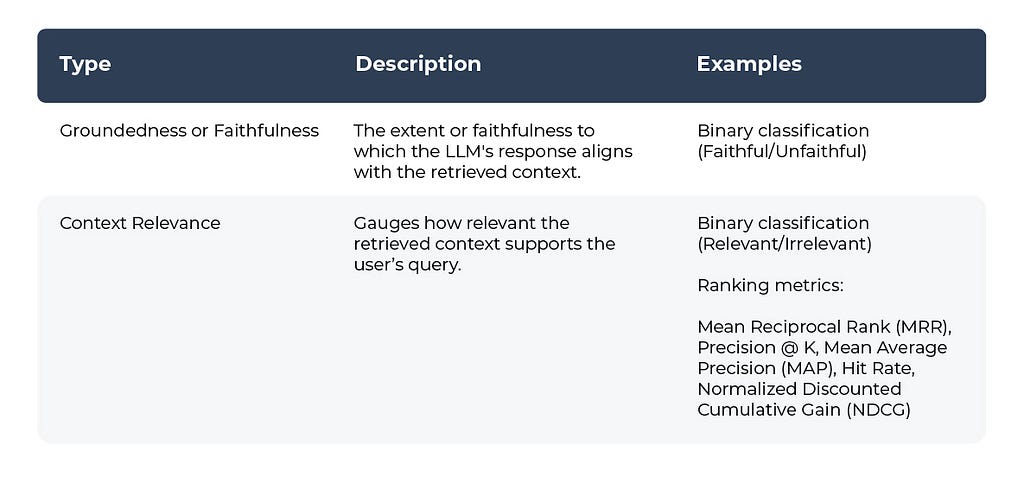

Figure 2: Response Evals and Retrieval Evals in an LLM Application (image by author)

Table 1: Response Evaluation Metrics (table by author)

Table 2: Retrieval Evaluation Metrics (table by author)

Troubleshooting RAG Workflows

Let’s review three potential scenarios to troubleshoot poor LLM performance based on the flow diagram.

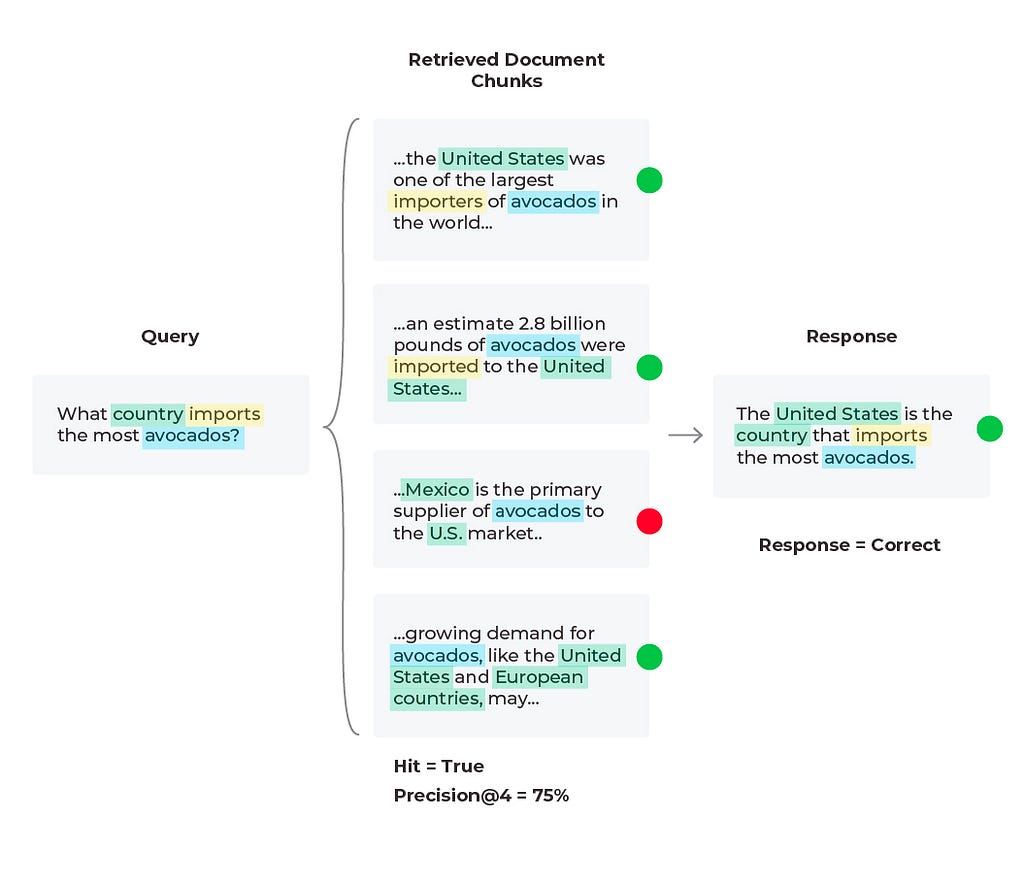

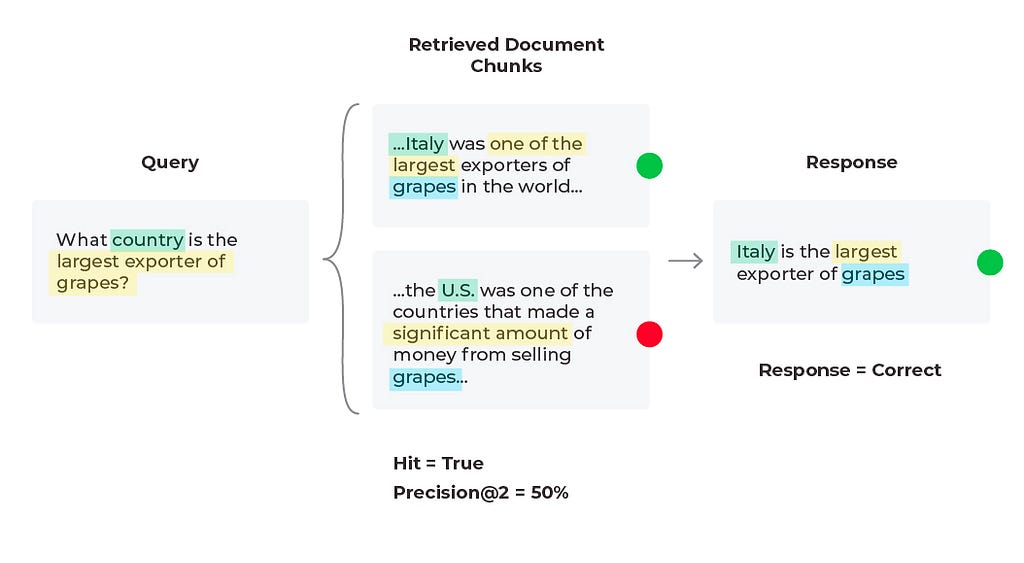

Scenario 1: Good Response, Good Retrieval

Diagram by author

In this scenario everything in the LLM application is acting as expected and we have a good response with a good retrieval. We find our response evaluation is “correct” and our “Hit = True.” Hit is a binary metric, where “True” means the relevant document was retrieved and “False” would mean the relevant document was not retrieved. Note that the aggregate statistic for Hit is the Hit rate (percent of queries that have relevant context).
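As a minimal sketch of the Hit metric and its aggregate, the snippet below treats each query as a pair of retrieved document IDs and known-relevant document IDs. The function names and the sample IDs are hypothetical and only illustrate the definitions above.

```python
def hit(retrieved_ids, relevant_ids):
    """Return True if at least one relevant document was retrieved."""
    relevant = set(relevant_ids)
    return any(doc_id in relevant for doc_id in retrieved_ids)


def hit_rate(queries):
    """Fraction of queries whose retrieved set contains a relevant document.

    `queries` is a list of (retrieved_ids, relevant_ids) pairs.
    """
    if not queries:
        return 0.0
    return sum(hit(retrieved, relevant) for retrieved, relevant in queries) / len(queries)


if __name__ == "__main__":
    # Hypothetical evaluation set: two hits and one miss.
    sample = [
        (["d1", "d7", "d9"], ["d7"]),
        (["d2", "d3"], ["d8"]),
        (["d4"], ["d4", "d5"]),
    ]
    print(f"hit rate: {hit_rate(sample):.2f}")
```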

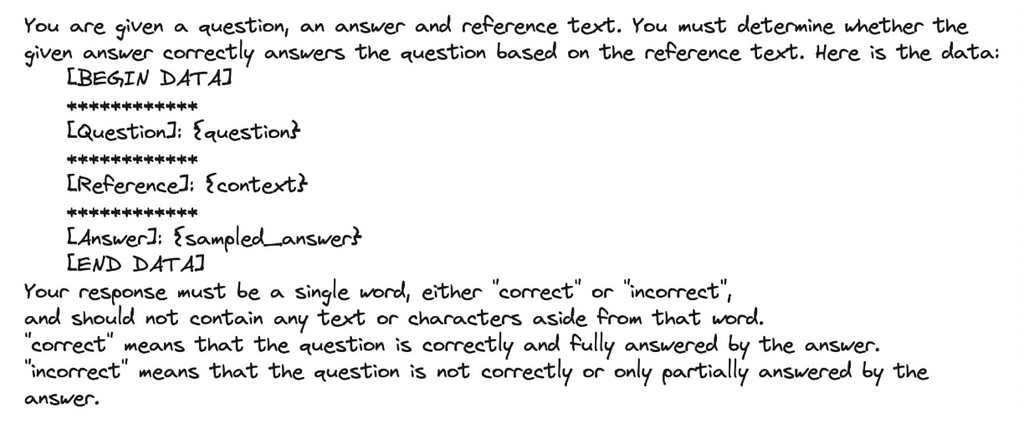

For our response evaluations, correctness is an evaluation metric that can be computed simply from a combination of the input (query), output (response), and context, as can be seen in Table 1. Several of these evaluation criteria do not require user-labeled ground truth, since LLMs can also be used to generate labels, scores, and explanations with tools like OpenAI function calling. Below is an example prompt template.

Image by author

These LLM evals can be formatted as numeric, categorical (binary and multi-class), and multi-output (multiple scores or labels), with categorical-binary being the most commonly used and numeric being the least commonly used.

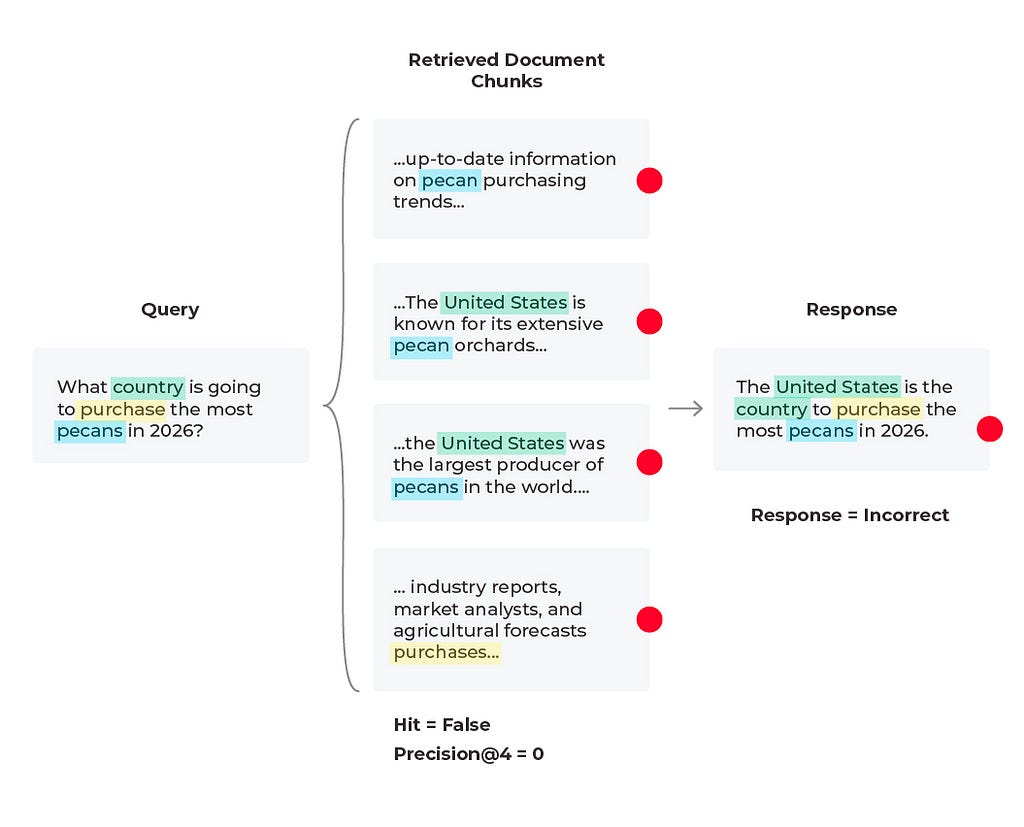

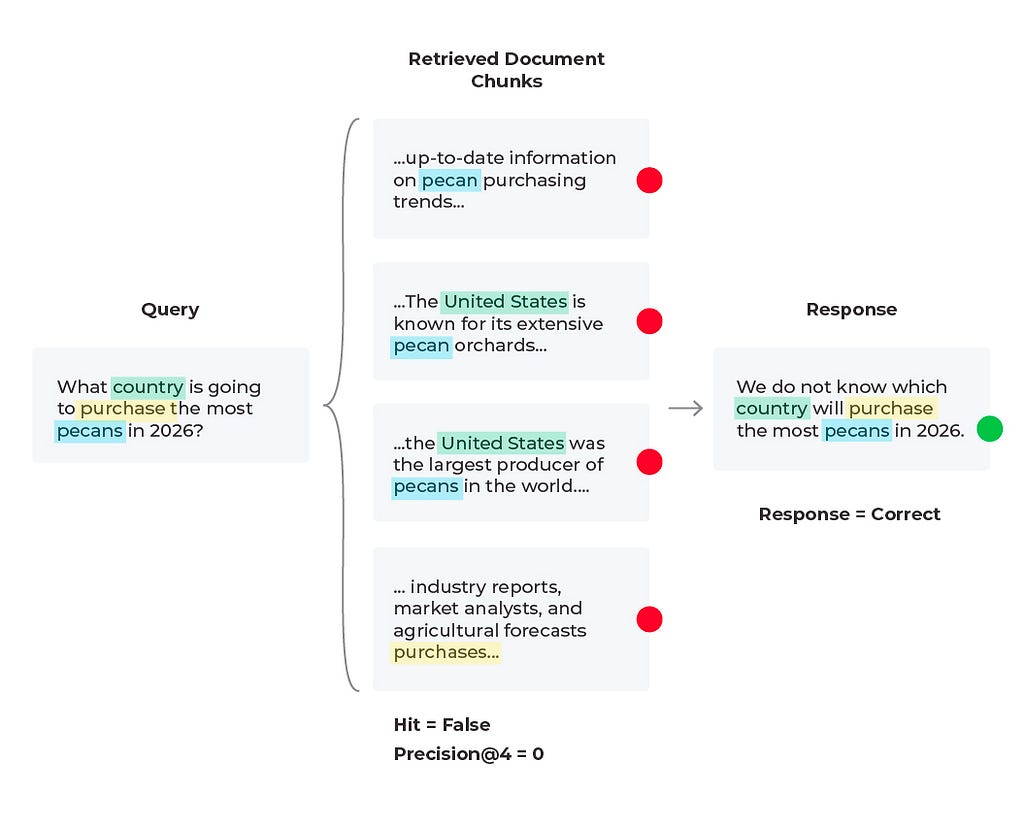

Scenario 2: Bad Response, Bad Retrieval

Diagram by author

In this scenario we find that the response is incorrect and the relevant content was not retrieved. Based on the query, we see that the content wasn’t retrieved because there is no solution to the query: the LLM cannot predict future purchases no matter what documents it is supplied. However, the LLM can generate a better response than a hallucinated answer. The fix here is to experiment with the prompt that generates the response by simply adding a line to the LLM prompt template: “if relevant content is not provided and no conclusive solution is found, respond that the answer is unknown.” In some cases the correct answer is that the answer does not exist.
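One way to wire that extra line into a prompt is sketched below. The base template wording and the `build_prompt` helper are assumptions made for illustration; only the fallback sentence is taken from the text above.

```python
# Hypothetical base template; the article's actual template is not shown in the text.
BASE_TEMPLATE = (
    "Answer the question using only the context below.\n\n"
    "Context:\n{context}\n\n"
    "Question: {query}\n"
)

# The fallback line quoted in the article.
FALLBACK_INSTRUCTION = (
    "If relevant content is not provided and no conclusive solution is found, "
    "respond that the answer is unknown.\n"
)


def build_prompt(query, context, allow_unknown=True):
    """Fill the template and optionally append the 'answer is unknown' instruction."""
    prompt = BASE_TEMPLATE.format(context=context, query=query)
    if allow_unknown:
        prompt += FALLBACK_INSTRUCTION
    return prompt


if __name__ == "__main__":
    print(build_prompt("What will this customer buy next month?", "(no relevant documents)"))
```

Keeping the fallback instruction behind a flag makes it easy to compare response evals with and without it.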

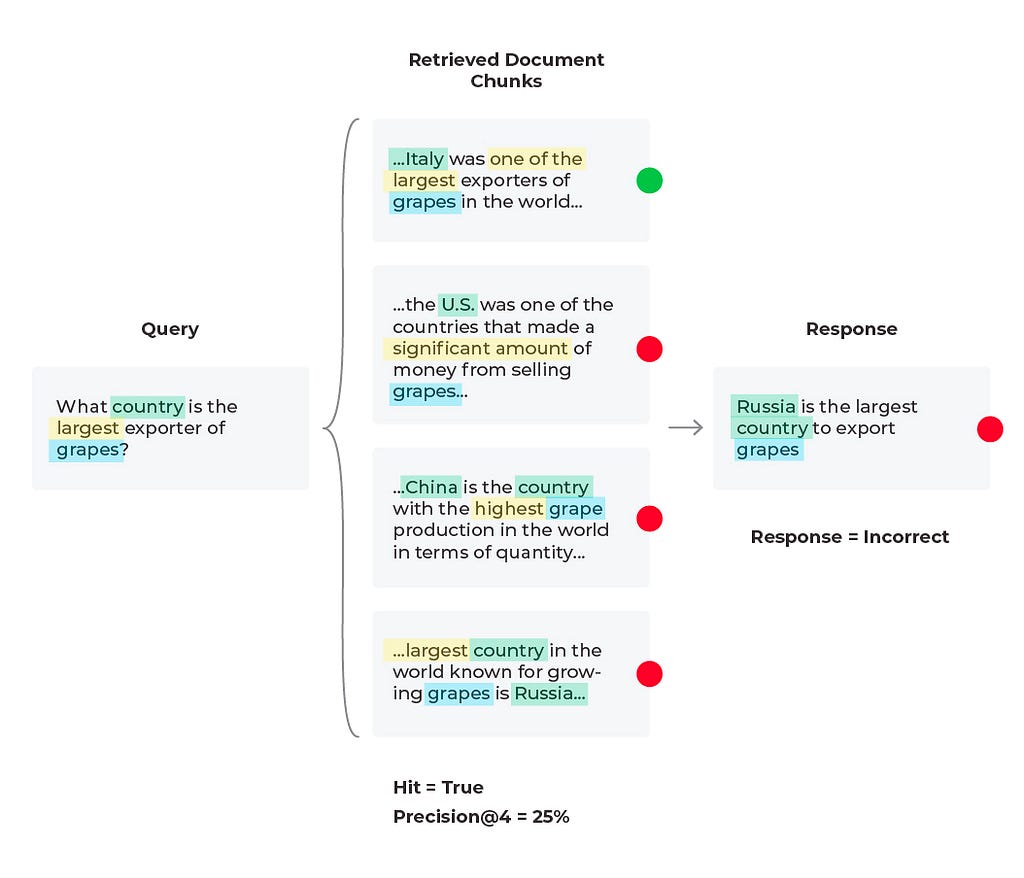

Diagram by author

Scenario 3: Bad Response, Mixed Retrieval Metrics

In this third scenario, we see an incorrect response with mixed retrieval metrics (the relevant document was retrieved, but the LLM hallucinated an answer due to being given too much information).

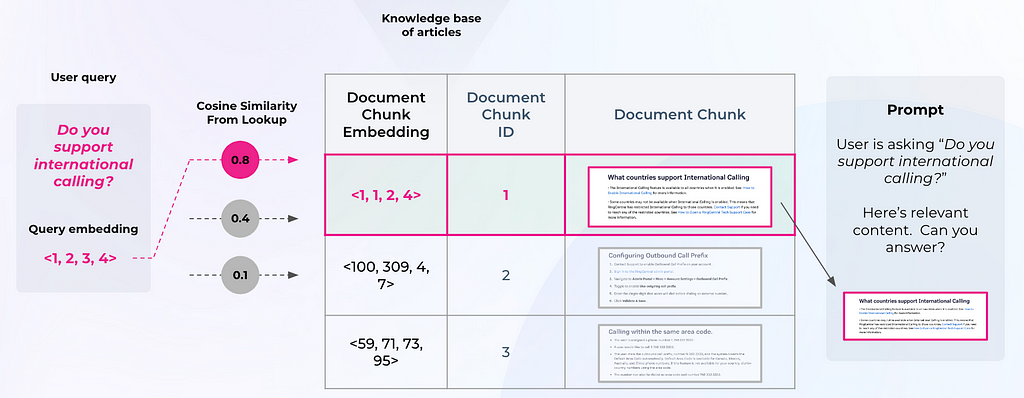

Diagram by author

To evaluate an LLM RAG system, you need to both fetch the right context and then generate an appropriate answer. Typically, developers will embed a user query and use it to search a vector database for relevant chunks (see Figure 3). Retrieval performance hinges not only on the returned chunks being semantically similar to the query, but on whether those chunks provide enough relevant information to generate the correct response to the query. Now, you must configure the parameters around your RAG system (type of retrieval, chunk size, and K).

Figure 3: RAG Framework (by author)

As with our last scenario, we can try editing the prompt template or changing the LLM being used to generate responses. Since the relevant content is retrieved during the document retrieval process but isn’t being surfaced by the LLM, this could be a quick solution. Below is an example of a correct response generated from running a revised prompt template (after iterating on prompt variables, LLM parameters, and the prompt template itself).

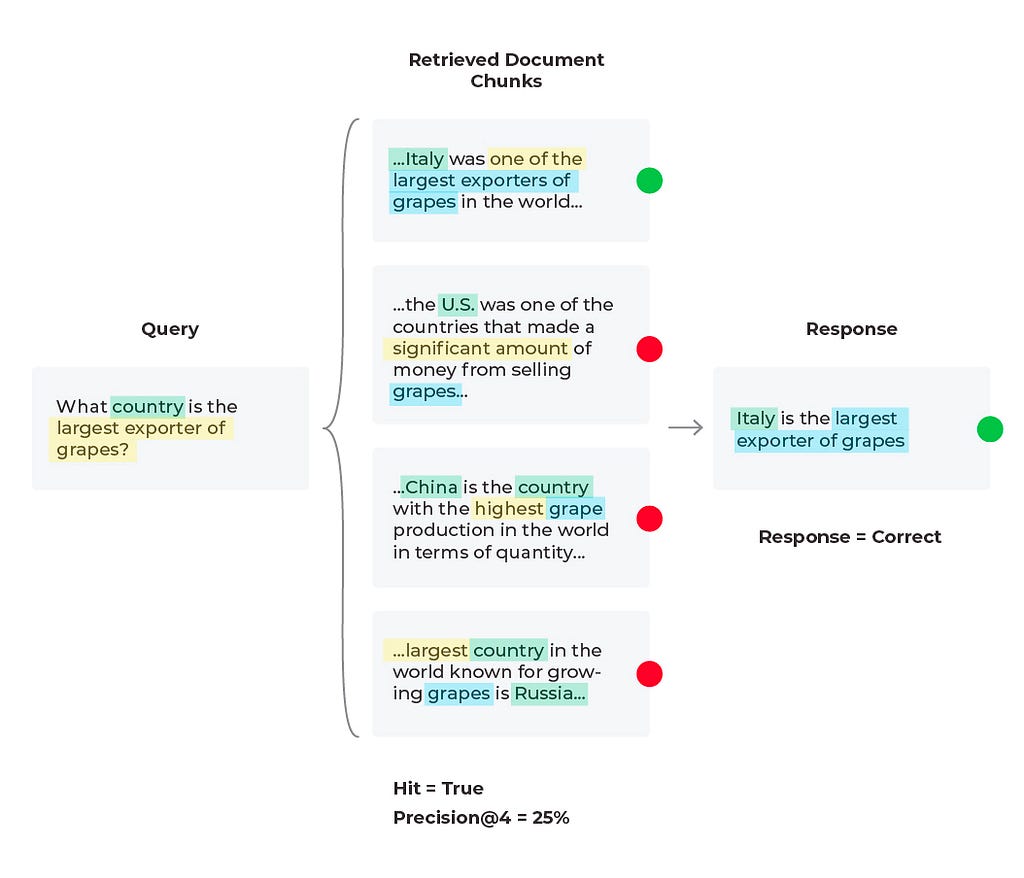

Diagram by author

When troubleshooting bad responses with mixed performance metrics, we need to first figure out which retrieval metrics are underperforming. The easiest way of doing this is to implement thresholds and monitors. Once you are alerted to a particular underperforming metric, you can resolve it with specific workflows. Let’s take nDCG for example. nDCG is used to measure the effectiveness of your top ranked documents and takes into account the position of relevant docs, so if you retrieve your relevant document (Hit = ‘True’), you will want to consider implementing a reranking technique to get the relevant documents closer to the top ranked search results.
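To make the position sensitivity of nDCG concrete, here is a minimal sketch using the common log2-discount formulation. It assumes binary or graded relevance labels are already available for the retrieved list, and it computes the ideal ordering from the retrieved documents only; both are simplifying assumptions rather than the article's exact setup.

```python
import math


def dcg_at_k(relevances, k):
    """Discounted cumulative gain over the top-k results (rank 1 has discount 1)."""
    return sum(rel / math.log2(rank + 1) for rank, rel in enumerate(relevances[:k], start=1))


def ndcg_at_k(relevances, k):
    """DCG normalized by the DCG of the best possible ordering of the same list."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


if __name__ == "__main__":
    before_rerank = [0, 0, 0, 1]  # relevant document retrieved, but ranked last
    after_rerank = [1, 0, 0, 0]   # same documents after a hypothetical reranker
    print(f"nDCG@4 before: {ndcg_at_k(before_rerank, 4):.3f}")
    print(f"nDCG@4 after:  {ndcg_at_k(after_rerank, 4):.3f}")
```

Both lists have Hit = True, but only the second places the relevant document where nDCG rewards it, which is the gap a reranker is meant to close.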

For our current scenario we retrieved a relevant document (Hit = ‘True’), and that document is in the first position, so let’s try to improve the precision (percent of relevant documents) among the top ‘K’ retrieved documents. Currently our Precision@4 is 25%, but if we used only the first two retrieved documents then Precision@2 = 50%, since half of those documents are relevant. This change leads to the correct response from the LLM, since it is given less information overall but proportionally more relevant information.
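The two precision figures can be reproduced with a short sketch. The relevance list below is an assumption that matches the scenario described (one relevant document, ranked first, out of four retrieved).

```python
def precision_at_k(relevances, k):
    """Share of the top-k retrieved documents that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for is_relevant in relevances[:k] if is_relevant) / k


if __name__ == "__main__":
    # One relevant document in first position, followed by three irrelevant ones.
    retrieved = [True, False, False, False]
    print(f"Precision@4 = {precision_at_k(retrieved, 4):.0%}")
    print(f"Precision@2 = {precision_at_k(retrieved, 2):.0%}")
```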

Diagram by author

Essentially, what we are seeing here is a common problem in RAG known as lost in the middle: the LLM is overwhelmed with too much information, not all of it relevant, and is then unable to give the best possible answer. From our diagram, we see that adjusting your chunk size is one of the first things many teams do to improve RAG applications, but it’s not always intuitive. With context overflow and lost in the middle problems, more documents aren’t always better, and reranking won’t necessarily improve performance. To evaluate which chunk size works best, you need to define an eval benchmark and do a sweep over chunk sizes and top-k values. In addition to experimenting with chunking strategies, testing out different text extraction techniques and embedding methods can also improve overall RAG performance.
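A sweep of this kind can be organized as a simple grid search. In the sketch below, `sweep` is a hypothetical helper and `placeholder_evaluate` is a stand-in scorer with made-up behavior; in practice it would be replaced by a function that re-chunks the corpus, runs the eval benchmark, and returns a real metric.

```python
from itertools import product


def sweep(chunk_sizes, top_ks, evaluate):
    """Score every (chunk_size, k) combination and return results, best first."""
    results = []
    for chunk_size, k in product(chunk_sizes, top_ks):
        results.append({"chunk_size": chunk_size, "k": k, "score": evaluate(chunk_size, k)})
    return sorted(results, key=lambda row: row["score"], reverse=True)


def placeholder_evaluate(chunk_size, k):
    """Placeholder only: NOT a real benchmark result.

    It peaks at an arbitrary configuration so the sweep has something to rank.
    """
    return 1.0 / (1 + abs(chunk_size - 500) / 500 + abs(k - 4) / 4)


if __name__ == "__main__":
    for row in sweep([100, 500, 1000], [2, 4, 6], placeholder_evaluate)[:3]:
        print(row)
```

Passing the scorer in as an argument keeps the sweep independent of any particular retrieval stack or metric.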

Response and Retrieval Evaluation Metrics Summary

The response and retrieval evaluation metrics and approaches in this piece offer a comprehensive way to view an LLM RAG system’s performance, guiding developers and users in understanding its strengths and limitations. By continually evaluating these systems against these metrics, improvements can be made to enhance RAG’s ability to provide accurate, relevant, and timely information.

Additional advanced methods for improving RAG include re-ranking, metadata attachments, testing out different embedding models, testing out different indexing methods, implementing HyDE, implementing keyword search methods, or implementing Cohere document mode (similar to HyDE). Note that while these more advanced methods (like chunking, text extraction, and embedding model experimentation) may produce more contextually coherent chunks, they are also more resource-intensive. Using RAG along with advanced methods can improve the performance of your LLM system, and will continue to do so as long as your retrieval and response metrics are properly monitored and maintained.

Questions? Please reach out to me here or on LinkedIn, X, or Slack!

Top Evaluation Metrics for RAG Failures was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.


-

Switching From Sphinx to MkDocs Documentation — What Did I Gain and Lose

Guide on performing this switch, and comparison between them


-

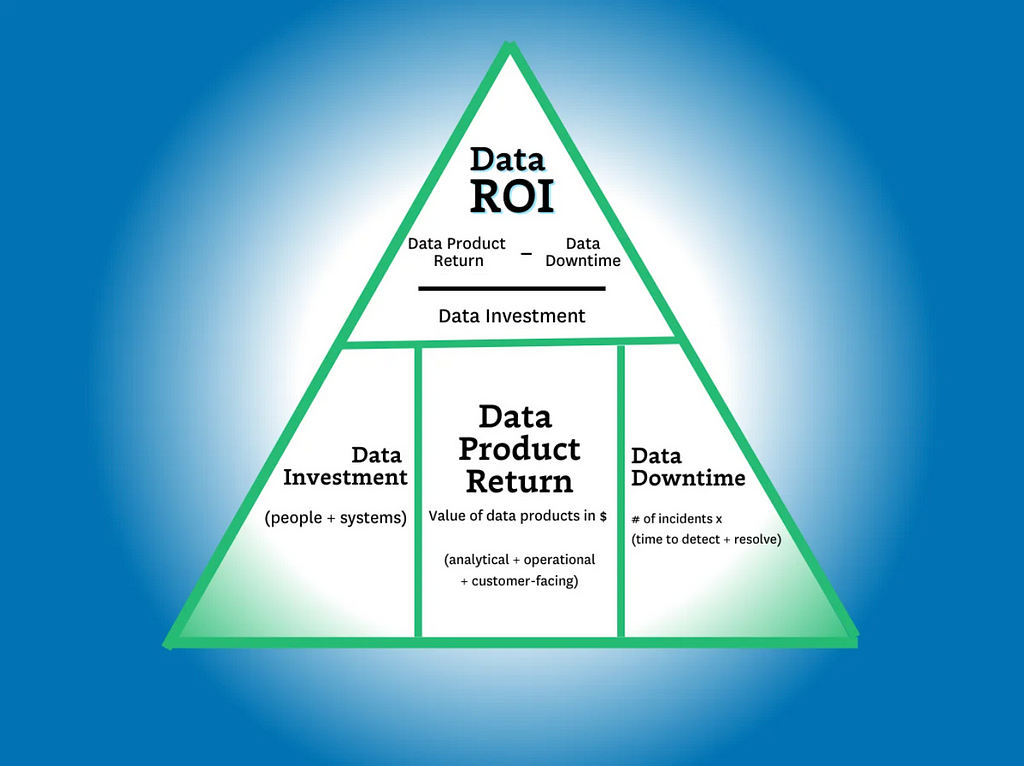

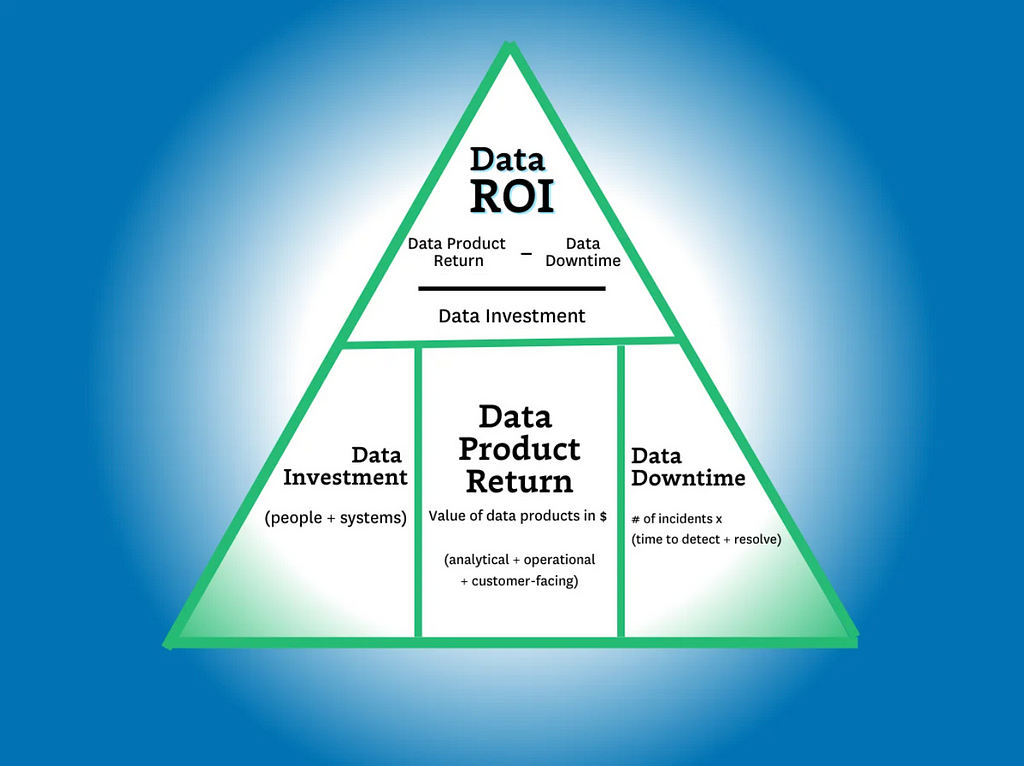

The Data ROI Pyramid: A Method for Measuring & Maximizing Your Data Team

Struggling to articulate the value of your data team? Learn how to calculate your data team’s return with the Data ROI Pyramid.

Image by author.

As recently as a year ago, about half of the data leaders I spoke with felt the business value of their team sold itself. Today, maximizing and measuring data team ROI is near the top of every data leader’s agenda.

Most data team ROI formulas focus on some version of the following calculation:

Lift / investment = ROI.

And while there’s certainly value in its simplicity, it doesn’t capture the full value of the data team. For example, how do you capture the value of:

- A customer churn dashboard

- A dataset that supports ad hoc queries of engagement behaviors

- A migration to a new data stack that supports faster, more scalable compute

- Increasing data adoption by 30% as a result of a data quality initiative

It’s not easy! Grizzled data industry veterans who have successfully turned the wild world of customer acquisition funnels into a predictable science start squirming when looking inward.

Over the last six months, I’ve spoken with data leaders and iterated on a variety of ROI formulas, all in an attempt to reach a point that, if not capturing the exact value of a data team, can get us a little closer.

And the result of those conversations has been none other than a brand new data ROI pyramid. Yes, I’m aware of all the great pyramids and conjoined triangles that have come before but this one is different. Sort of.

This pyramid is aimed squarely at helping data leaders:

- Get closer to the business

- Balance competing priorities

- And focus on the right metrics to generate value for their stakeholders.

So, with the preamble out of the way, let’s take it from the top!

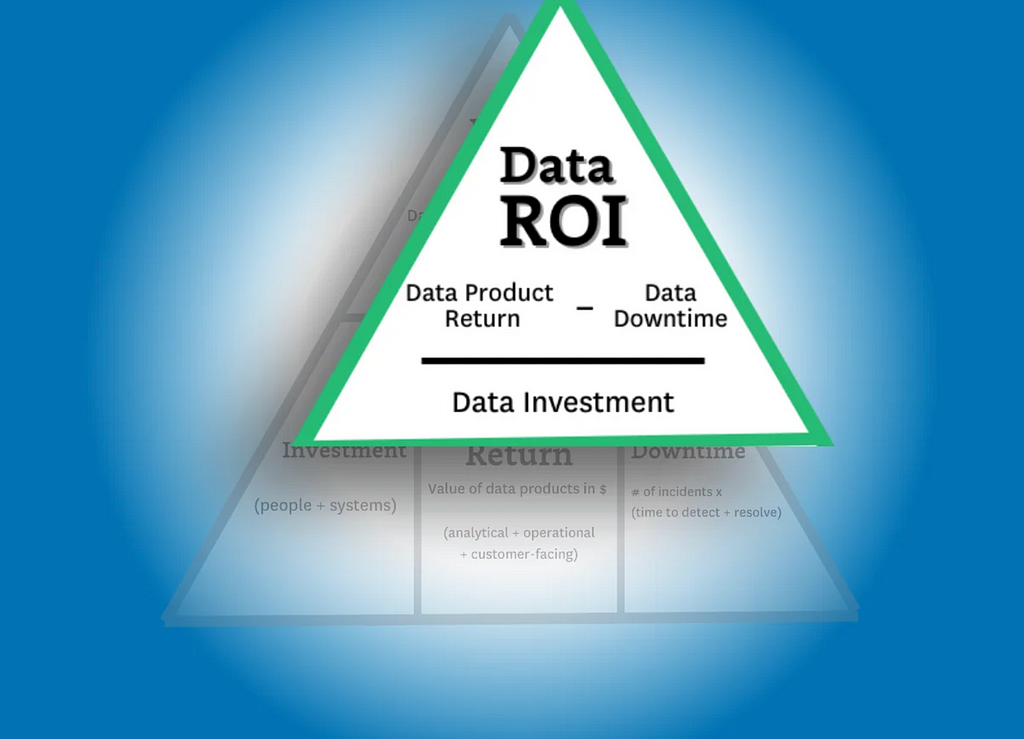

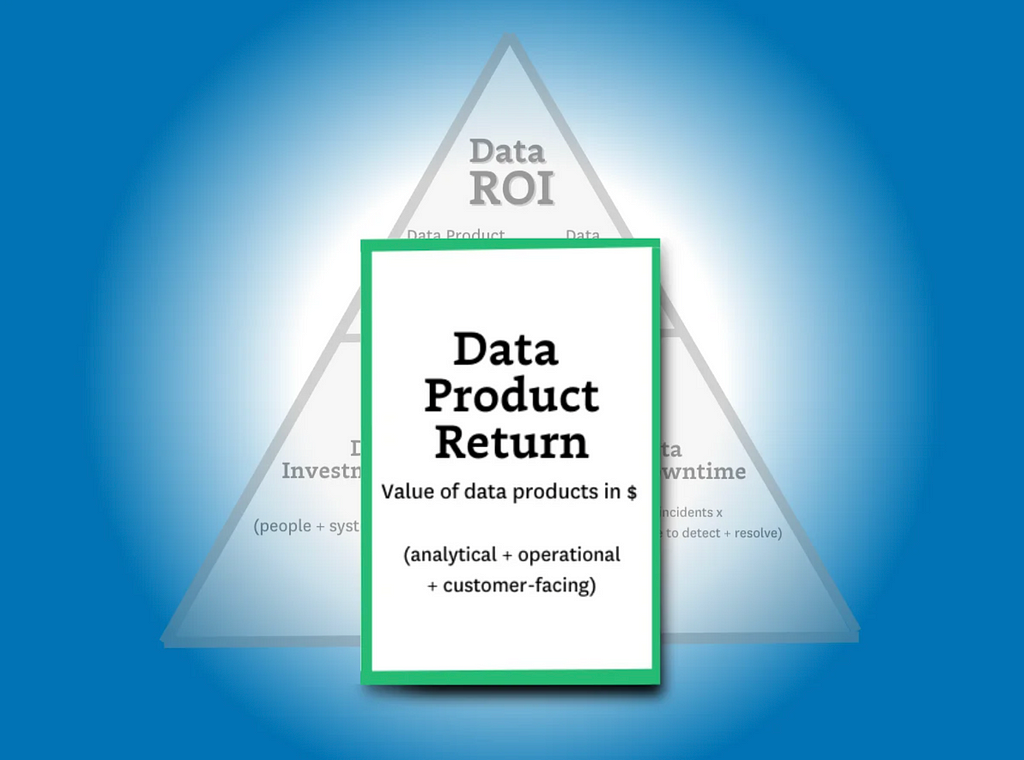

Calculating data ROI

Image by author.

As a general rule of thumb, the higher you report in an organization, the fewer and more encompassing your metrics will become. The CEO doesn’t care how many dashboards you support or your data freshness SLA adherence percentage.

They want to know what their investors want to know: “Am I getting a return on my investment?”

The data ROI pyramid tackles this question with a similar formula to the one in the introduction:

(Data product value - data downtime) / data investment = ROI

…but there are two key differences. The first is the more expansive definition of “data product value” (more on that later) and the second is the introduction of data downtime.

The downtime variable is important to include because as data teams drive higher levels of revenue (via machine learning models, customer-facing apps, data democratization, and other initiatives), the consequences of downtime become more severe in terms of lost time, revenue, and trust.

This also makes reducing data downtime one of the three key strategies for data leaders to increase ROI: you can either increase revenue, you can decrease your investments, or you can reduce your data downtime. And one of those fruits hangs a lot lower than the others.
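As a rough sketch, the ROI formula and its three levers can be expressed as a single function. All dollar figures below are invented for illustration, and the sketch assumes value, downtime cost, and investment are measured in the same currency over the same period.

```python
def data_roi(product_value, downtime_cost, investment):
    """(data product value - data downtime) / data investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (product_value - downtime_cost) / investment


if __name__ == "__main__":
    # Hypothetical quarterly figures.
    value, downtime, investment = 1_000_000, 200_000, 400_000
    print("baseline ROI:          ", data_roi(value, downtime, investment))
    print("10% more product value:", data_roi(value * 1.1, downtime, investment))
    print("10% less investment:   ", round(data_roi(value, downtime, investment * 0.9), 3))
    print("half the downtime:     ", data_roi(value, downtime / 2, investment))
```

Which lever moves ROI the most depends entirely on the inputs; the function only makes the comparison explicit.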

So, now that we have a framework for calculating ROI, let’s dive deeper into how we identify the variables.

Calculating data investments

Image by author.

The formula is easy: investment = people + solutions.

But it’s also easy to overcomplicate.

Some contracts are annual. Others are not. Some solutions charge based on usage. Others don’t. My recommendation here is to keep this component relatively simple. Stick to an aggregate projection of costs divided evenly across a time period (typically a month or quarter).

Levers to optimize data investments

When it comes to optimizing your data investments, the name of the game is efficiency. To get the most out of your data investments, you need to improve the speed at which those investments deliver value.

Below are three levers you can pull to improve efficiency for your data systems, your data teams, and your data consumers.

- System optimization: The cost of almost all modern data solutions is based on usage. The metrics you will want to keep an eye on here are the total number of items that drive these costs (tables, queries, VMs, warehouses, etc.) and the outliers at the top (expensive queries) or the bottom (unused tables). A few ways to understand and control system costs could be through assigning ownership for domains, cleaning up unused assets and costly queries, or even consolidating your data stack around central tooling.

- Time to build and maintain: The time it takes to build and maintain your key data assets, including data products and machine learning capabilities, is a key lever that measures your data team’s productivity. While larger upfront investments may be required to develop an effective data platform, simplifying the workflows for building and maintaining data pipelines can have a dramatic impact on data team efficiency.

- Time to insight (or action): This lever is focused on the time it takes your data consumers to realize value. In other words, how effectively has the data team enabled your data consumers? Discoverability and self-service can both work to improve time-to-insight for consumers, while micro-batch infrastructures can make that data available at the lowest possible latency for machine learning, analytics, and reporting.

Time spent enabling data self-service is usually well-spent, but the pay-off must be greater than the effort spent to build and maintain it. Image courtesy of Shane Murray.

Calculating data product return

Image by author.

Calculating data product return is by far the most complex step in our ROI calculation. That’s because as the data industry continues to advance, the variety and complexity of data product use cases have advanced right along with it.

Fortunately, data products can generally be split into one of three primary categories: analytical data products, operational data products, and customer-facing data products. These can exist in the form of dashboards, ML models, experiments leveraging data insights, and — of course — generative AI. While the latter may be a bit more complex to build, genAI is still a data product at its core and its value can still be calculated using the methodologies we’ll outline below.

To really answer this question, the formula for data product return needs to be inclusive of all data team activities, whether they produce value directly (for example revenue generated via a paywall ML model) or indirectly (for example a customer churn dashboard).

The formula I would propose for calculating data product return is this:

Value from Analytical Data Products + Operational Data Products + Customer Facing Data Products = Data Product Return

As you can see, this part of the equation presents the most variables for our ROI calculation. Since this part of the equation is by far the most complicated, we’ll be spending the majority of our time here. So, with that in mind, let’s take a look at each subcategory (or use case) in a bit more detail.

Analytical Data Products

First, let’s dig into the value of the most traditional — and common — data use case: analytics.

Analytical data products are a mix of the critical dashboards, ML models, and experiments conducted and supported by your data team to deliver insights for decision making.

Whether we’re talking about the marketing dashboards or important metrics like customer LTV, analytical data products play a fundamental role in the day-to-day operations of any business. But in the same way that all data products aren’t created equal, the way you’ll calculate their value isn’t either. And, of course, some will be easier to calculate than others.

First, let’s look at experiments:

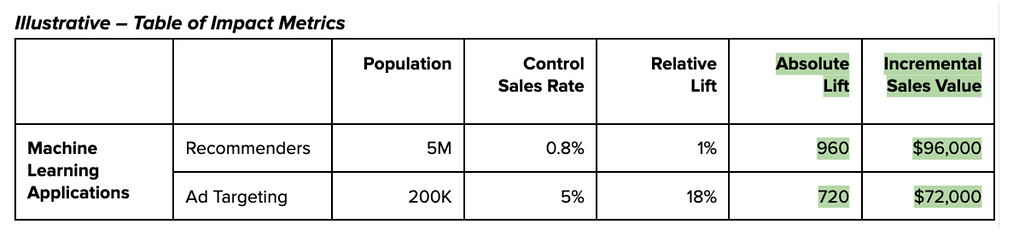

Measuring incremental impact

Measuring absolute impact is one of the simplest ways to understand return from any data product. The value of an experiment conducted as a result of your data team’s research and analytical insights can be quickly estimated by taking the delta between test and control and translating those numbers into dollars earned or saved each month.

For a more conservative approach, you could estimate value by computing the return against a random or average decision to better represent decisions made without support from your data team.

Combining tens or hundreds of these experiments per year will give you a ballpark figure for the incremental value delivered by the experimentation platform and the analytical work surrounding those experiments.
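A minimal sketch of that estimate follows. It assumes each experiment can be summarized as a per-user monthly value for the test arm and for a baseline (the control arm, or a random or average decision for the more conservative version); the function names and all figures are hypothetical.

```python
def experiment_monthly_value(test_value_per_user, baseline_value_per_user, monthly_users):
    """Dollars earned or saved per month from the test-versus-baseline delta."""
    return (test_value_per_user - baseline_value_per_user) * monthly_users


def portfolio_value(experiments):
    """Sum the monthly value across a list of experiments.

    Each experiment is a (test_value_per_user, baseline_value_per_user, monthly_users) tuple.
    """
    return sum(experiment_monthly_value(*experiment) for experiment in experiments)


if __name__ == "__main__":
    # Hypothetical experiments: a pricing test and an onboarding test.
    experiments = [
        (2.50, 2.00, 100_000),
        (1.25, 1.00, 48_000),
    ]
    print("monthly incremental value:", portfolio_value(experiments))
```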

Measuring value-to-stakeholders

But what about dashboards? Rarely are these initiatives so easily measured by a controlled or natural experiment.

To account for these data products, we’ll need to take a more nuanced approach to estimating value. In this case, we’ll be translating qualitative data into something representative by tapping into the consumers themselves.

Believe it or not, your business users and data consumers are actually quite knowledgeable about how valuable your dashboard is (or isn’t) to them. And their responses can be quantified. While this may not seem rigorous enough at first glance, this is actually similar to the process MIT economists used to determine how free services contribute value to the national GDP. For example, they asked respondents how much they would have to be paid not to use Facebook or Google Maps for a year. ($40 to $50 a month for Facebook if you are curious).

For the most important dashboards, data teams can go a step further by creating a benchmark for respondents, like “We estimate the cost of maintaining this dashboard to be about $5,000 last quarter. In your estimation did it add that level of value to your work over that period of time?” For a baseline, here is how the 200 data professionals in our survey judged their data consumers would value their dashboards:

- Less than $500k: 5%

- 500k-1m: 11%

- 1m-10m: 49%

- 10m-25m: 32%

- 25m+: 5%

Customer Facing Lift

Here I’m referring specifically to data that’s customer facing, not the ML models powered by data. This data use case generally comes in two flavors.

The first is when data IS the product. There are a significant number of businesses that ingest, transform, and then sell data to other companies. It could be a data mining company compiling insights from web scraping eCommerce sites or a television manufacturer that sells viewership data to advertisers.

In this case, calculating is pretty straightforward: the revenue of the data product is the revenue of the sale. When you find ways to enrich this data, you make it more valuable and thus increase the sale price.

However, what about cases where data is only part of the product being offered? For example, a point of sale system providing insights back to the merchant on their foot traffic patterns? Or a video player that breaks down views across audience segments by time?

In some cases, the data will be a nice-to-have. In other cases, it will be a significant factor in customer acquisition and retention. Luckily, data teams have been experimenting and measuring the impact of features on retention for a while now.

Image courtesy of Shane Murray.

Operational Lift

I define operational data use cases as activities that MUST take place. Examples would include reporting to the board or an airline re-accommodating passengers of a delayed flight.

If the data systems went down, these activities would still happen, but they would be considerably more painful. An organization may have to manually collect and aggregate data from across the business for its report or passengers may need to go to the customer service desk rather than have an app automatically present their options for re-accommodation.

In these cases the value is typically best determined by the hours saved between the more automated and more painful process. In some situations, alternative impacts such as fine avoidance or poor customer satisfaction could be calculated as well.

Image courtesy of Shane Murray.

Levers to maximize data product return

You can optimize data product value by increasing how effective it is and the range of its impact. Some broad proxies to measure this across a wide range of use cases and industries are adoption, reach, and velocity.

- Adoption and Reach — The more use a data product sees, the more value it can ostensibly provide. So driving improved reach and adoption can also dramatically increase the incremental value your data products will provide.

- Velocity — One of the biggest drivers of experimentation value is velocity: how many meaningful experiments can the organization execute over a period of time? More velocity can mean improved productivity, a more mature central platform, and even improved enablement for data consumers.

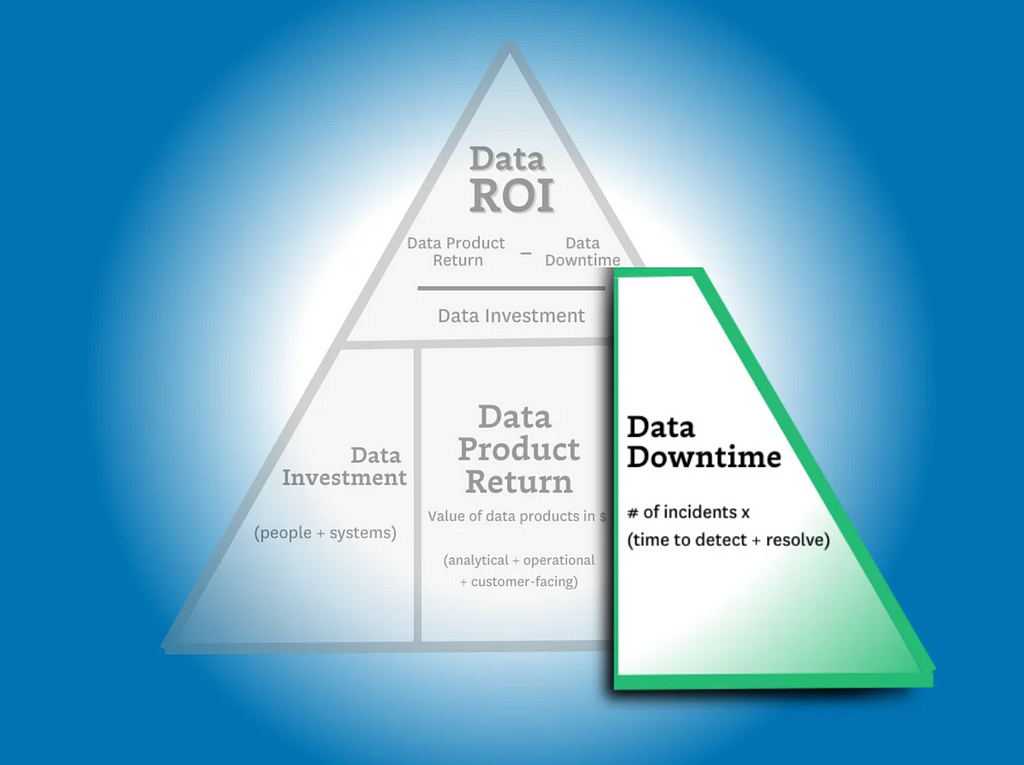

Calculating data downtime

Image by author.

Finally, we need to understand how data downtime impacts ROI.

In other articles, we’ve discussed how to calculate data downtime using your incident and response times. Here’s what that formula looks like:

Number of incidents x (average time to detection + average time to resolution)
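As a minimal sketch, the formula above can be computed from an incident log. The `Incident` record and the choice of hours as the unit are assumptions made for illustration, and the sample incidents are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Incident:
    """One data incident; both durations are in hours (an assumed unit)."""
    hours_to_detect: float
    hours_to_resolve: float


def data_downtime_hours(incidents: Sequence[Incident]) -> float:
    """Number of incidents x (average time to detection + average time to resolution)."""
    n = len(incidents)
    if n == 0:
        return 0.0
    avg_detect = sum(i.hours_to_detect for i in incidents) / n
    avg_resolve = sum(i.hours_to_resolve for i in incidents) / n
    return n * (avg_detect + avg_resolve)


# Hypothetical incident log for one quarter.
incidents = [Incident(4.0, 2.0), Incident(1.0, 3.0), Incident(7.0, 1.0)]
downtime = data_downtime_hours(incidents)  # 3 x (4.0 + 2.0) = 18.0 hours
```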

This is helpful in measuring how your overall data product reliability is trending. But in this case, we aren’t as interested in the aggregate data downtime or the efficiency of the team (yet).

What we want to find out here is the operational cost for the data downtime of specific data products. To do this, you’ll need data lineage in order to understand the impact a data issue on an upstream table has across various downstream data products.

Since we’ve already calculated the revenue generated from each of our data products, including key dashboards, we can now subtract the operational cost of that downtime from the revenue.

For this component of the ROI calculation, I recommend only focusing on downtime that violates your data SLAs. If a dashboard that’s checked daily has a data freshness issue that only persists for a few hours before being resolved, that downtime is unlikely to have an operational impact on the organization (and your data team shouldn’t be penalized for it).
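One way to sketch this step is to subtract downtime cost only for incidents that exceed the SLA window. The function name, the flat `cost_per_downtime_hour`, and the choice to count the full duration of a violating incident are all assumptions for illustration, and the figures are hypothetical.

```python
from typing import Sequence


def net_product_value(
    revenue: float,
    incident_hours: Sequence[float],
    sla_hours: float,
    cost_per_downtime_hour: float,
) -> float:
    """Revenue minus the cost of downtime from SLA-violating incidents only."""
    # Incidents resolved within the SLA window are ignored entirely.
    violating_hours = sum(h for h in incident_hours if h > sla_hours)
    return revenue - violating_hours * cost_per_downtime_hour


# Hypothetical dashboard: $100,000 attributed revenue, 24-hour freshness SLA.
value = net_product_value(
    revenue=100_000.0,
    incident_hours=[3.0, 30.0, 50.0],  # the 3-hour incident stays within the SLA
    sla_hours=24.0,
    cost_per_downtime_hour=500.0,
)
```

Here only the 30-hour and 50-hour incidents count, so 80 hours of downtime are charged against the product.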

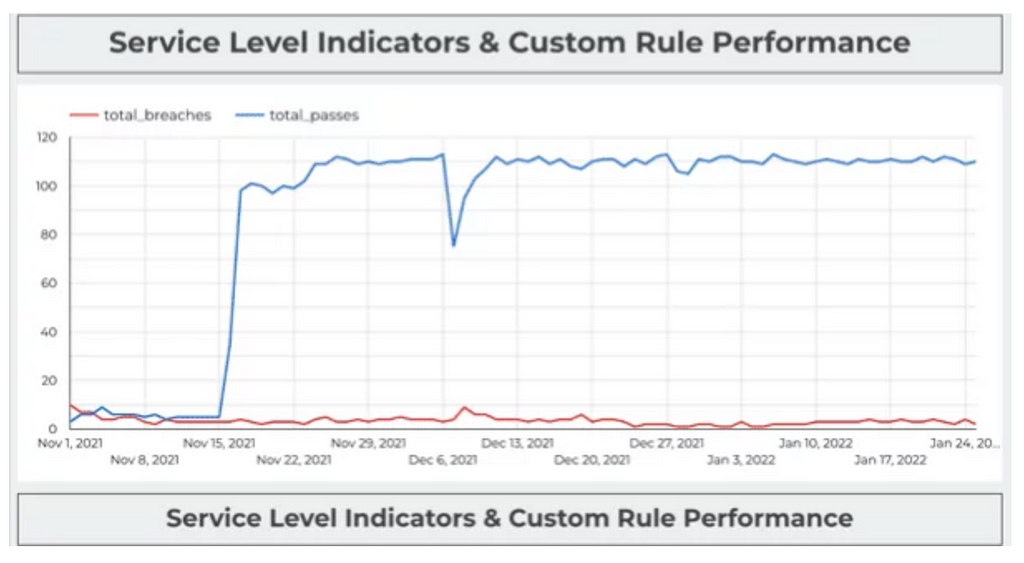

Levers to minimize data downtime

Improvements in data downtime can have a dramatic impact on your data team’s ROI calculation, especially for use cases where the data is so central to the product offering that data downtime is equivalent to operational downtime.

Red Ventures custom built SLA adherence dashboard. Image courtesy of Red Digital at Red Ventures.

We’ve covered the metric the CEO cares about and the strategic metrics data leaders should consider. Now let’s talk about the tactical levers your team can pull to maximize your outcomes across these strategic metrics.

SLA Adherence

SLA adherence, the amount of time the SLA has been breached divided by the amount of time it has been upheld, can help data teams maintain a granular understanding of how many data quality incidents are having an adverse impact on specific data assets, and what measures to take to protect the value of those products.
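A minimal sketch of this ratio, computed exactly as the definition above states it. The function name and the hourly unit are assumptions, and the sample month is hypothetical. Under this definition, a lower value is better.

```python
def sla_breach_ratio(breached_hours: float, upheld_hours: float) -> float:
    """Time in breach divided by time upheld, following the definition in the text."""
    if upheld_hours <= 0:
        raise ValueError("upheld_hours must be positive")
    return breached_hours / upheld_hours


# Hypothetical 30-day month (720 hours) with 36 hours spent in breach.
ratio = sla_breach_ratio(breached_hours=36.0, upheld_hours=720.0 - 36.0)
```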

Coverage%

Minimizing data downtime can often be directly correlated to how well your data products are covered with monitors and custom tests from raw ingestion to final resulting table. Data pipelines and systems are radically interdependent. Higher coverage percentage typically will translate into better time to detection and resolution.

Status Update%

The teams that are the best at documenting their incident history are almost always the ones with the lowest downtime. High status update percentages encourage a lower time to resolution and can even help prevent incidents by providing better insight into overall data health.

If your data team has a low status update percentage it is either because of alert fatigue or because your team is unable to quickly triage incidents.

Calculating Data ROI Is Hard — But It’s Worth It

While I am under no illusions that calculating the ROI of a data product is either an exact science or a quick win, I will ardently uphold that it’s a worthwhile endeavor — and perhaps most importantly, an achievable destination.

I am also confident there are few activities more existentially critical for data leaders.

By better quantifying and optimizing the data team’s value, we can better earn our colleagues’ trust and recognition of the value we add to the business. At that point, the sky’s the limit for motivated, well-led data teams.

The Data ROI Pyramid: A Method for Measuring & Maximizing Your Data Team was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.

-

Intervening on early readouts for mitigating spurious features and simplicity bias

Machine learning models in the real world are often trained on limited data that may contain unintended statistical biases. For example, in the CELEBA celebrity image dataset, a disproportionate number of female celebrities have blond hair, leading to classifiers incorrectly predicting “blond” as the hair color for most female faces — here, gender is a spurious feature for predicting hair color. Such unfair biases could have significant consequences in critical applications such as medical diagnosis.

Surprisingly, recent work has also discovered an inherent tendency of deep networks to amplify such statistical biases, through the so-called simplicity bias of deep learning. This bias is the tendency of deep networks to identify weakly predictive features early in the training, and continue to anchor on these features, failing to identify more complex and potentially more accurate features.

With the above in mind, we propose simple and effective fixes to this dual challenge of spurious features and simplicity bias by applying early readouts and feature forgetting. First, in “Using Early Readouts to Mediate Featural Bias in Distillation”, we show that making predictions from early layers of a deep network (referred to as “early readouts”) can automatically signal issues with the quality of the learned representations. In particular, these predictions are more often wrong, and more confidently wrong, when the network is relying on spurious features. We use this erroneous confidence to improve outcomes in model distillation, a setting where a larger “teacher” model guides the training of a smaller “student” model. Then in “Overcoming Simplicity Bias in Deep Networks using a Feature Sieve”, we intervene directly on these indicator signals by making the network “forget” the problematic features and consequently look for better, more predictive features. This substantially improves the model’s ability to generalize to unseen domains compared to previous approaches. Our AI Principles and our Responsible AI practices guide how we research and develop these advanced applications and help us address the challenges posed by statistical biases.

Animation comparing hypothetical responses from two models trained with and without the feature sieve.

Early readouts for debiasing distillation

We first illustrate the diagnostic value of early readouts and their application in debiased distillation, i.e., making sure that the student model inherits the teacher model’s resilience to feature bias through distillation. We start with a standard distillation framework where the student is trained with a mixture of label matching (minimizing the cross-entropy loss between student outputs and the ground-truth labels) and teacher matching (minimizing the KL divergence loss between student and teacher outputs for any given input).
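The mixed objective described above can be sketched in plain Python for a single example. The mixing weight `alpha`, the KL direction (teacher relative to student), and the omission of a softmax temperature are assumptions for illustration; the post does not specify them.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, label):
    """Label matching: negative log-probability of the ground-truth class."""
    return -math.log(probs[label])


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same classes."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(student_logits, teacher_logits, label, alpha=0.5):
    """Mixture of label matching and teacher matching for one example."""
    student = softmax(student_logits)
    teacher = softmax(teacher_logits)
    label_term = cross_entropy(student, label)
    teacher_term = kl_divergence(teacher, student)  # assumed direction
    return (1 - alpha) * label_term + alpha * teacher_term


# Hypothetical logits for a three-class problem.
loss = distillation_loss([2.0, 0.5, -1.0], [3.0, 0.0, -2.0], label=0)
```

When the student matches the teacher exactly, the teacher-matching term is zero and only the label-matching term remains.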

Suppose one trains a linear decoder, i.e., a small auxiliary neural network named Aux, on top of an intermediate representation of the student model. We refer to the output of this linear decoder as an early readout of the network representation. Our finding is that early readouts make more errors on instances that contain spurious features, and further, the confidence on those errors is higher than the confidence associated with other errors. This suggests that confidence on errors from early readouts is a fairly strong, automated indicator of the model’s dependence on potentially spurious features.

We used this signal to modulate the contribution of the teacher in the distillation loss on a per-instance basis, and found significant improvements in the trained student model as a result.
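One plausible way to turn this signal into a per-instance weight is sketched below. The exponential form, the parameter `beta`, the mixing weight `alpha`, and the choice to upweight the teacher term on confidently wrong readouts are all assumptions for illustration; the post does not give the exact weighting, so the paper should be consulted for the actual method.

```python
import math


def readout_confidence_on_error(aux_probs, label):
    """Confidence of the early readout if it is wrong, else 0."""
    predicted = max(range(len(aux_probs)), key=lambda k: aux_probs[k])
    return aux_probs[predicted] if predicted != label else 0.0


def teacher_weight(aux_probs, label, beta=1.0):
    """Hypothetical weight: grows with the readout's confidence on an error."""
    return math.exp(beta * readout_confidence_on_error(aux_probs, label))


def modulated_loss(label_loss, teacher_loss, aux_probs, label, alpha=0.5, beta=1.0):
    """Distillation loss with a per-instance weight on the teacher term."""
    w = teacher_weight(aux_probs, label, beta)
    return (1 - alpha) * label_loss + alpha * w * teacher_loss


# Readout is correct: the weight is 1 and the loss is the usual mixture.
plain = modulated_loss(1.0, 2.0, aux_probs=[0.9, 0.1], label=0)
# Readout is confidently wrong: the teacher term is weighted more heavily.
boosted = modulated_loss(1.0, 2.0, aux_probs=[0.9, 0.1], label=1)
```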

We evaluated our approach on standard benchmark datasets known to contain spurious correlations (Waterbirds, CelebA, CivilComments, MNLI). Each of these datasets contains groupings of data that share an attribute potentially correlated with the label in a spurious manner. As an example, the CelebA dataset mentioned above includes groups such as {blond male, blond female, non-blond male, non-blond female}, with models typically performing the worst on the {non-blond female} group when predicting hair color. Thus, a measure of model performance is its worst group accuracy, i.e., the lowest accuracy among all known groups present in the dataset. We improved the worst group accuracy of student models on all datasets; moreover, we also improved overall accuracy in three of the four datasets, showing that our improvement on any one group does not come at the expense of accuracy on other groups. More details are available in our paper.
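Worst group accuracy, as defined above, can be computed with a short helper. The function name and the toy labels, predictions, and group assignments are hypothetical and do not come from any benchmark.

```python
from collections import defaultdict


def worst_group_accuracy(labels, predictions, groups):
    """Return (group, accuracy) for the group with the lowest accuracy."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for y, y_hat, g in zip(labels, predictions, groups):
        total[g] += 1
        correct[g] += int(y == y_hat)
    per_group = {g: correct[g] / total[g] for g in total}
    worst = min(per_group, key=per_group.get)
    return worst, per_group[worst]


# Toy data: label 1 = blond, 0 = non-blond.
labels = [1, 1, 0, 0, 0, 1, 0, 0]
predictions = [1, 1, 1, 0, 0, 1, 1, 0]
groups = [
    "blond female", "blond male", "non-blond female", "non-blond male",
    "non-blond male", "blond female", "non-blond female", "non-blond female",
]
group, accuracy = worst_group_accuracy(labels, predictions, groups)
```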

Comparison of Worst Group Accuracies of different distillation techniques relative to that of the Teacher model. Our method outperforms other methods on all datasets.

Overcoming simplicity bias with a feature sieve

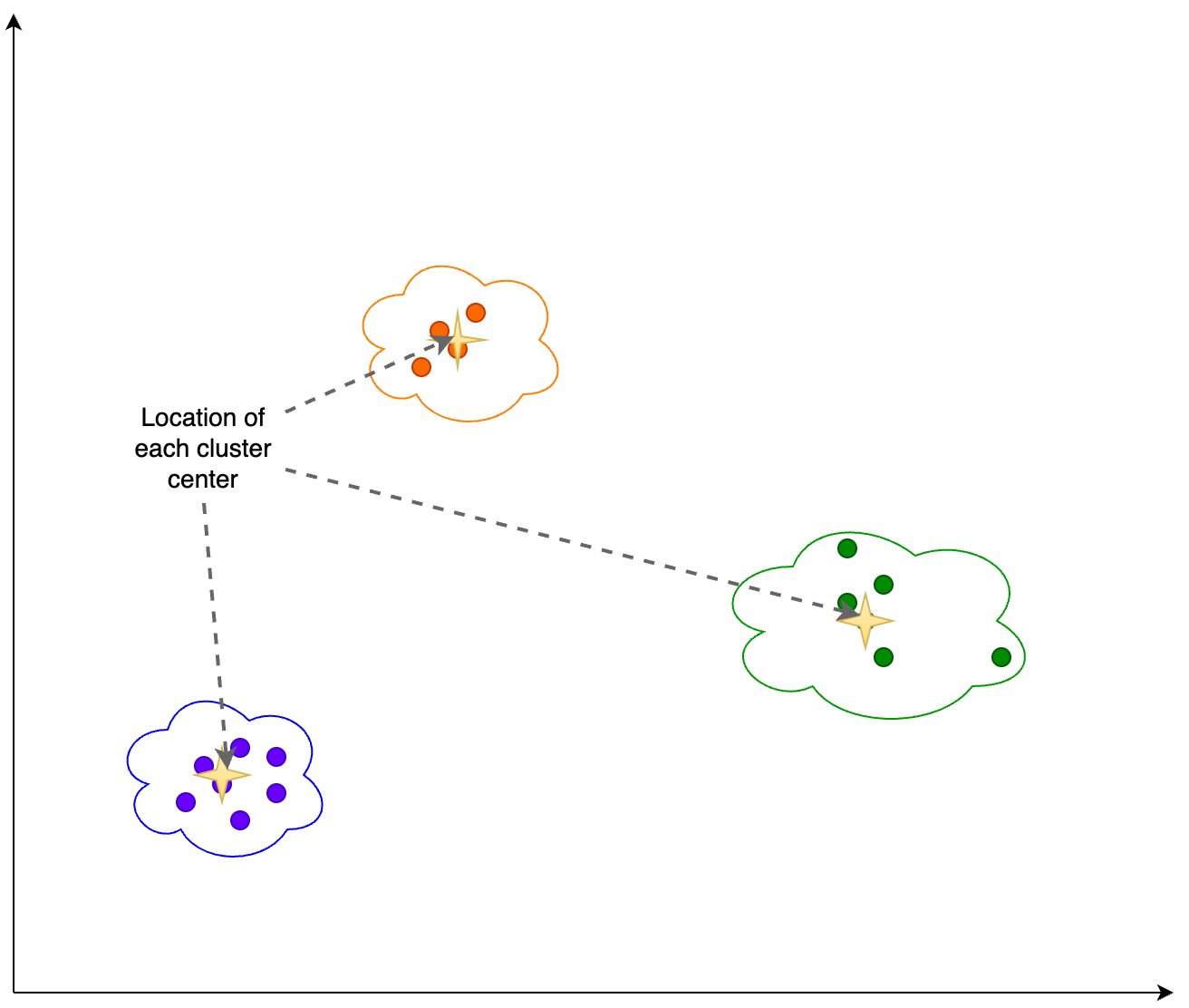

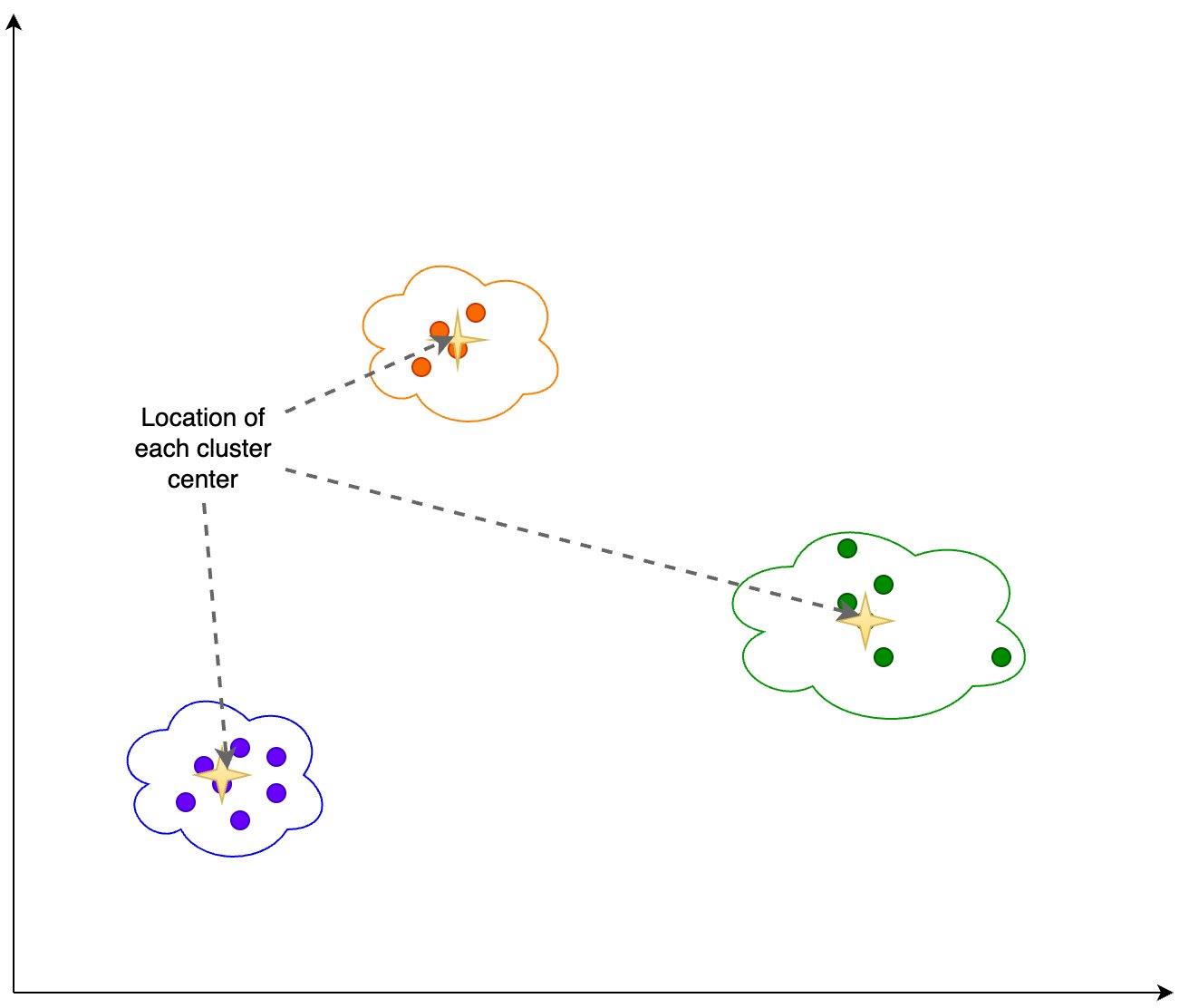

In a second, closely related project, we intervene directly on the information provided by early readouts, to improve feature learning and generalization. The workflow alternates between identifying problematic features and erasing identified features from the network. Our primary hypothesis is that early features are more prone to simplicity bias, and that by erasing (“sieving”) these features, we allow richer feature representations to be learned.

Training workflow with feature sieve. We alternate between identifying problematic features (using training iteration) and erasing them from the network (using forgetting iteration).

We describe the identification and erasure steps in more detail:

- Identifying simple features: We train the primary model and the readout model (AUX above) in conventional fashion via forward- and back-propagation. Note that feedback from the auxiliary layer does not back-propagate to the main network. This is to force the auxiliary layer to learn from already-available features rather than create or reinforce them in the main network.

- Applying the feature sieve: We aim to erase the identified features in the early layers of the neural network with the use of a novel forgetting loss, L_f, which is simply the cross-entropy between the readout and a uniform distribution over labels. Essentially, all information that leads to nontrivial readouts is erased from the primary network. In this step, the auxiliary network and upper layers of the main network are kept unchanged.
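The forgetting loss described above can be sketched for a single readout in plain Python. Treating the uniform distribution as the target of the cross-entropy is an assumption about the direction, and the helper names are hypothetical. The sketch computes only the loss value; it does not show which layers receive the gradient.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def forgetting_loss(readout_logits):
    """Cross-entropy between a uniform target and the readout distribution."""
    probs = softmax(readout_logits)
    k = len(probs)
    # Uniform target puts weight 1/k on every class.
    return -sum(math.log(p) for p in probs) / k


# An uninformative readout attains the minimum, log(k).
flat = forgetting_loss([0.0, 0.0, 0.0, 0.0])
# A confident (nontrivial) readout is penalized more heavily.
peaked = forgetting_loss([5.0, 0.0, 0.0, 0.0])
```

Minimizing this loss pushes the readout toward a uniform prediction, which is what it means for the early layers to stop carrying the features the readout relied on.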

We can control specifically how the feature sieve is applied to a given dataset through a small number of configuration parameters. By changing the position and complexity of the auxiliary network, we control the complexity of the identified and erased features. By modifying the mixing of learning and forgetting steps, we control the degree to which the model is challenged to learn more complex features. These choices, which are dataset-dependent, are made via hyperparameter search to maximize validation accuracy, a standard measure of generalization. Since we include “no-forgetting” (i.e., the baseline model) in the search space, we expect to find settings that are at least as good as the baseline.

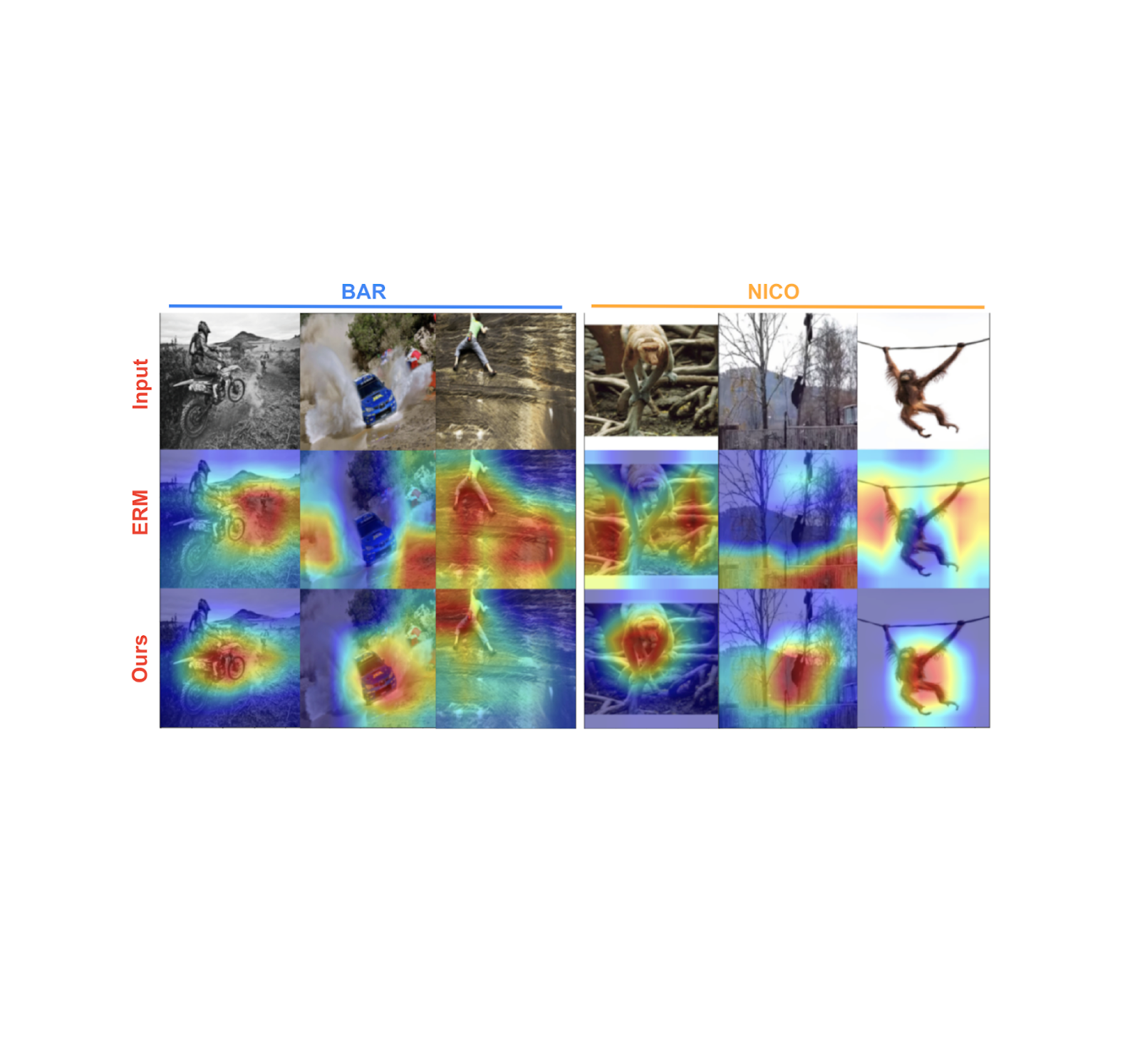

Below we show features learned by the baseline model (middle row) and our model (bottom row) on two benchmark datasets — biased activity recognition (BAR) and animal categorization (NICO). Feature importance was estimated using post-hoc gradient-based importance scoring (GRAD-CAM), with the orange-red end of the spectrum indicating high importance, while green-blue indicates low importance. Shown below, our trained models focus on the primary object of interest, whereas the baseline model tends to focus on background features that are simpler and spuriously correlated with the label.

Through this ability to learn better, generalizable features, we show substantial gains over a range of relevant baselines on real-world spurious feature benchmark datasets: BAR, CelebA Hair, NICO and ImagenetA, by margins up to 11% (see figure below). More details are available in our paper.

Our feature sieve method improves accuracy by significant margins relative to the nearest baseline for a range of feature generalization benchmark datasets.

Conclusion

We hope that our work on early readouts and their use in feature sieving for generalization will both spur the development of a new class of adversarial feature learning approaches and help improve the generalization capability and robustness of deep learning systems.

Acknowledgements

The work on applying early readouts to debiasing distillation was conducted in collaboration with our academic partners Durga Sivasubramanian, Anmol Reddy and Prof. Ganesh Ramakrishnan at IIT Bombay. We extend our sincere gratitude to Praneeth Netrapalli and Anshul Nasery for their feedback and recommendations. We are also grateful to Nishant Jain, Shreyas Havaldar, Rachit Bansal, Kartikeya Badola, Amandeep Kaur and the whole cohort of pre-doctoral researchers at Google Research India for taking part in research discussions. Special thanks to Tom Small for creating the animation used in this post.

-

Monitor embedding drift for LLMs deployed from Amazon SageMaker JumpStart

One of the most useful application patterns for generative AI workloads is Retrieval Augmented Generation (RAG). In the RAG pattern, we find pieces of reference content related to an input prompt by performing similarity searches on embeddings. Embeddings capture the information content in bodies of text, allowing natural language processing (NLP) models to work with […]


-

How to avoid getting motion sickness when wearing Apple Vision Pro

Apple has revealed that Apple Vision Pro apps which have a high degree of motion will come with a warning icon.

Apple Vision Pro in an Apple Store

Ahead of its launch, experts predicted that the Apple Vision Pro would be able to minimize the chances of motion sickness through its sheer performance. Apple had said the headset’s response time is just 12 milliseconds, and that this provides a more naturalistic, realistic view of the world, which should cut down motion sickness.

Now that the headset is finally available, Apple has updated its support documentation with details of how to avoid motion sickness while wearing the Apple Vision Pro. And it includes one simple technique — look for the icon.
