Go Here to Read this Fast! 3 underrated Netflix shows you should watch this weekend (May 3-5)

Originally appeared here:

3 underrated Netflix shows you should watch this weekend (May 3-5)

X is using Grok to publish AI-generated summaries of news and other topics that trend on the platform. The feature, which is currently only available to premium subscribers, is called “Stories on X,” according to a post from the company’s engineering account.

According to X, Grok relies on users’ posts to generate the text snippets. Some seem to be more news-focused, while others are summaries of conversations happening on the platform itself. One user posted a screenshot that showed stories about Apple’s earnings report and aid to Ukraine, as well as one for “Musk, Experts Debate National Debt,” which was a summary of a “candid online discussion” between Musk and other “prominent figures” on X.

If any of this sounds familiar, it’s because it’s remarkably similar to Moments, the longtime Twitter feature that curated authoritative tweets about important news and cultural moments on the platform. The feature, which was overseen by a team of human staffers, was killed in 2022.

Like other generative AI tools, Grok’s summaries come with a disclaimer. “This story is a summary of posts on X and may evolve over time,” it says. “Grok can make mistakes, verify its outputs.” Grok, of course, doesn’t exactly have the best track record when it comes to accurately interpreting current events. It previously generated a bizarre story suggesting that NBA player Klay Thompson went on a “vandalism spree” because it couldn’t understand what “throwing bricks” meant in the context of a basketball game.

This article originally appeared on Engadget at https://www.engadget.com/x-is-using-grok-to-publish-ai-generated-news-summaries-215753934.html?src=rss

Go Here to Read this Fast! X is using Grok to publish AI-generated news summaries

Originally appeared here:

X is using Grok to publish AI-generated news summaries

Go Here to Read this Fast! Nearly half of all Steam users are using Windows 11 — but why?

Originally appeared here:

Nearly half of all Steam users are using Windows 11 — but why?

Originally appeared here:

Microsoft quietly improves Edge browser with a new internet tester and fixes

Go Here to Read this Fast! This sinister Omen gaming PC build just might keep you up at night

Originally appeared here:

This sinister Omen gaming PC build just might keep you up at night

Originally appeared here:

Counterfeit Cisco gear ended up in US military bases, used in combat operations

Originally appeared here:

Microsoft ties executive pay to security following multiple failures and breaches

“In the rugged mountains of the Andes lived three very beautiful creatures: Rio, Rocky and Sierra. With their lustrous coats and sparkling eyes, they stood out as a beacon of strength and resilience.

As the story goes, from a very young age their thirst for knowledge was never-ending. They would seek out the wise elders of their herd, listening intently to their stories and absorbing their wisdom like a sponge. From this grew their superpower: working together with others, having learned that teamwork was the key to overcoming the trials of the challenging Andean terrain.

Whenever they encountered travelers who had lost their way or needed help, Rio took in their perspective and guided them with comfort, Rocky provided swift solutions, and Sierra made sure they had the strength to carry on. In doing so, they earned admiration and encouraged everyone to follow their example.

As the sun set over the Andes, Rio, Rocky and Sierra stood together, their spirits intertwined like the mountains themselves. And so their story lived on as a testament to the power of knowledge, wisdom and collaboration, and to the will to make a difference.

They were the super-Llamas and the trio was lovingly called LlaMA3!”

And this story is not very far from the story of Meta’s open-source Large Language Model (LLM), LlaMA 3 (Large Language Model Meta AI). On April 18, 2024, Meta released its LlaMA 3 family of large language models in 8B and 70B parameter sizes, claiming a major leap over LlaMA 2 and positioning them as the best state-of-the-art LLMs at those scales.

According to Meta, there were four key focus points while building LlaMA 3: the model architecture, the pre-training data, scaling up pre-training, and instruction fine-tuning. This leads us to ponder what we can do to get the most out of this very capable model, at enterprise scale as well as at the grassroots level.

To help explore the answers to some of these questions, I collaborated with Eduardo Ordax, Generative AI Lead at AWS, and Prof. Tom Yeh, CS Professor at the University of Colorado Boulder.

So, let’s start the trek:

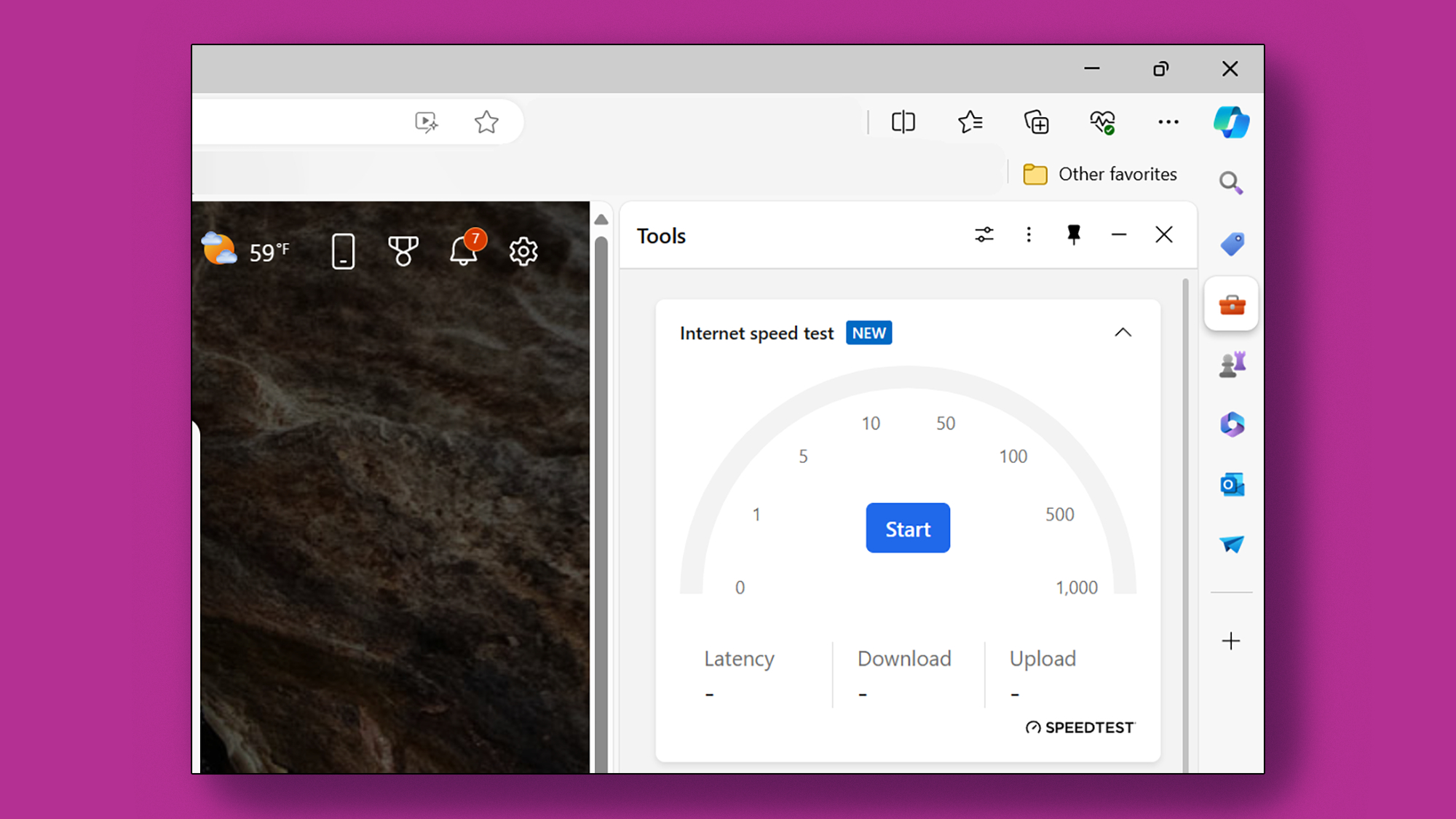

In current practice, there are two main ways in which these LLMs are accessed and worked with: through an API and through fine-tuning. Even within those two very different approaches, other factors in the process become crucial, as can be seen in the following images.

(All images in this section are courtesy of Eduardo Ordax.)

There are six main stages at which a user can interact with LlaMA 3.

Stage 1: Cater to broad use cases by using the model as is.

Stage 2: Use the model for a user-defined application.

Stage 3: Use prompt engineering to steer the model toward the desired outputs.

Stage 4: Use prompt engineering on the user side while delving a bit into data retrieval and fine-tuning, which are still mostly managed by the LLM provider.

Stage 5: Take most matters into your own hands (as the user), from prompt engineering to data retrieval and fine-tuning (RAG models, PEFT models and so on).

Stage 6: Create the entire foundation model from scratch, from pre-training to post-training.

To get the most out of these models, the suggested approach is to enter Stage 5, because at that point much of the flexibility lies with the user. Being able to customize the model to the needs of a domain is crucial to maximizing its gains, and staying uninvolved in the underlying systems does not yield optimal returns.

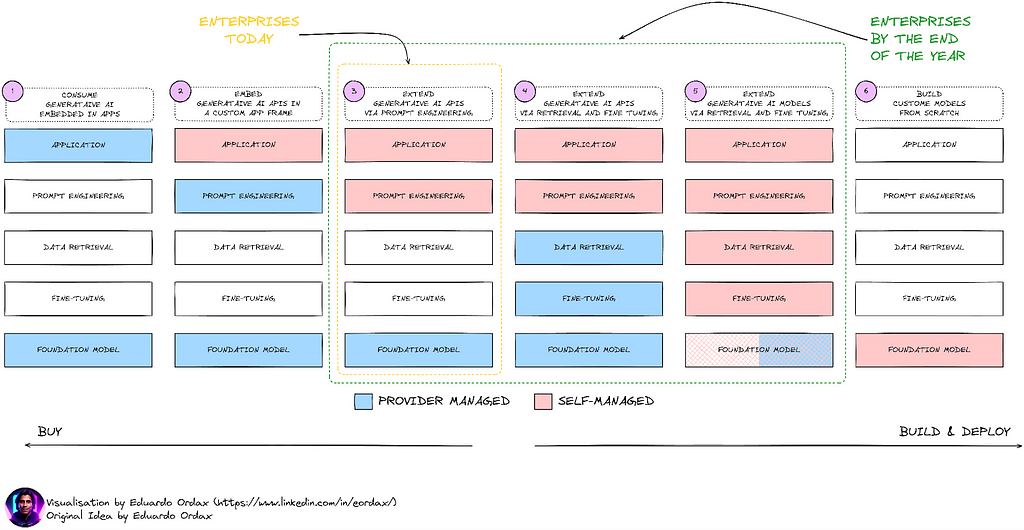

To be able to do so, here is a high-level picture of the tools that could prove to be useful:

The picture shows that to get the most benefit from these models, a set structure and a roadmap are essential. There are three components to it:

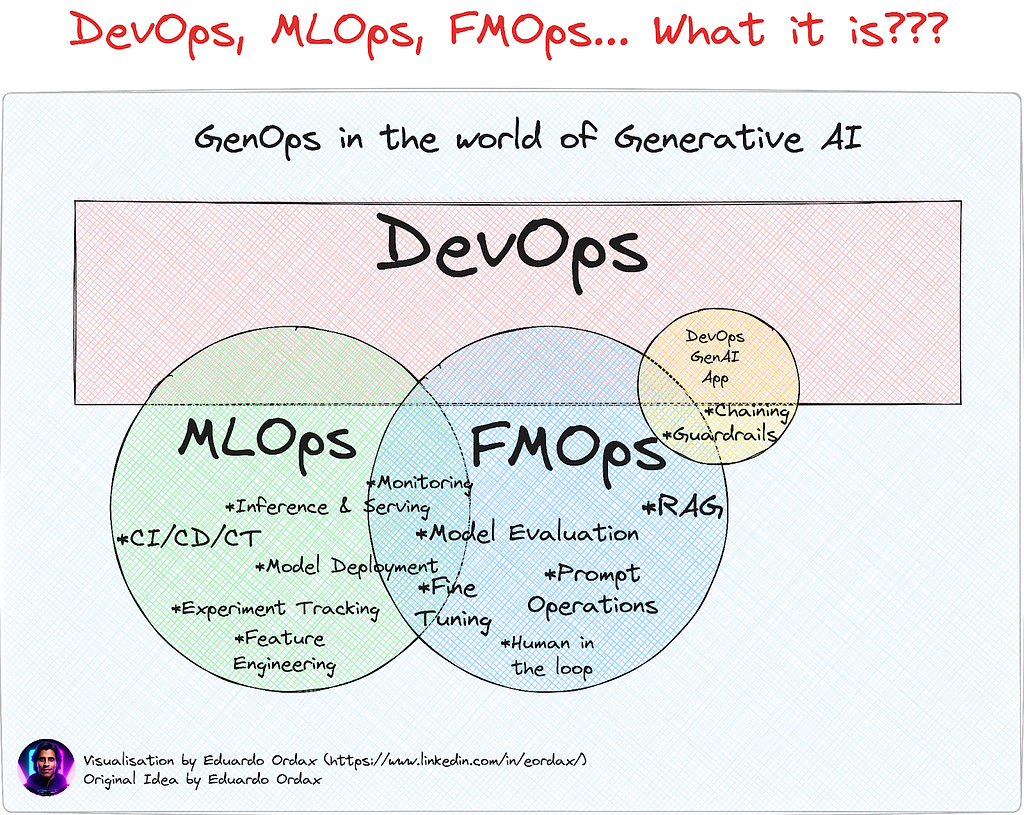

Of course, this applies to enterprise-level deployments, where the actual benefits of the model are to be reaped. To get there, the tools and practices of MLOps become very important. Combined with FMOps, they can make these models very valuable and enrich the GenAI ecosystem.

FMOps ⊆ MLOps ⊆ DevOps

MLOps, also known as Machine Learning Operations, is a part of Machine Learning Engineering that focuses on the development, deployment and maintenance of ML models, ensuring that they run reliably and efficiently.

MLOps falls under DevOps (Development and Operations), but applies it specifically to ML models.

FMOps (Foundation Model Operations), on the other hand, covers Generative AI scenarios: selecting, evaluating and fine-tuning LLMs.

With all of that said, one thing remains constant: LlaMA 3 is, after all, an LLM, and its implementation at the enterprise level is possible and beneficial only after the foundational elements are set and rigorously validated. With that in mind, let us explore the technical details behind LlaMA 3.

At the fundamental level, yes, it is the transformer. Looking a little more closely, the answer is a transformer architecture that has been highly optimized to achieve superior performance on common industry benchmarks while also enabling new capabilities.

The good news is that since LlaMA 3 is open (open-source at Meta’s discretion), we have access to the Model Card, which gives us details on how this powerful architecture is configured.

So, let’s dive in and unpack the goodness:

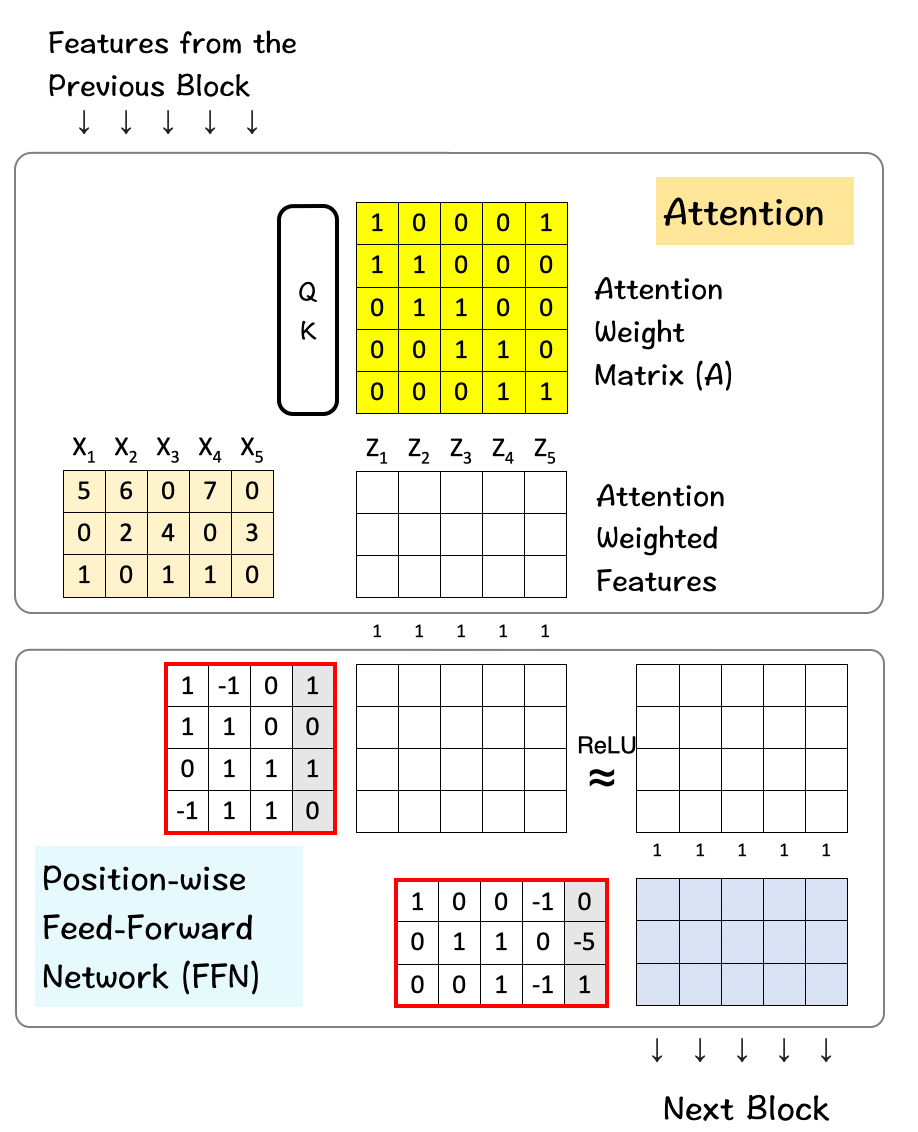

To start with, here is a quick review of how the transformer works:

(All the images in this section, unless otherwise noted, are by Prof. Tom Yeh and have been edited with his permission.)

Below is the basic form of the architecture and how it functions.

Here are the links to the deep-dive articles for Transformers and Self-Attention where the entire process is discussed in detail.

It’s time to get into the nitty-gritty and see how the transformer numbers play out in the real-life LlaMA 3 model. For our discussion, we will consider only the 8B variant. Here we go:

The primary values we need to explore here are the parameters that play a key role in the transformer architecture. They are as follows:

With the terms defined, let us look at the actual values of these parameters in the LlaMA 3 model. (The original source code where these numbers are stated can be found here.)

Keeping these values in mind, the next steps illustrate how each of them plays its part in the model. They are listed in their order of appearance in the source code.
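For reference, the 8B hyperparameters discussed below can be collected in one place. This is a minimal sketch loosely modeled on the `ModelArgs` dataclass in Meta’s reference `model.py`. The values are those published in the 8B checkpoint’s `params.json` as I understand them; treat `n_heads`, `n_kv_heads`, `multiple_of` and the exact `vocab_size` of 128,256 as assumptions to double-check against the repository. The class name `LlamaConfig8B` is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LlamaConfig8B:
    """Hypothetical container for the LlaMA 3 8B hyperparameters (values assumed)."""
    max_seq_len: int = 8192          # context window ("8K")
    vocab_size: int = 128256         # tokenizer vocabulary ("128K")
    n_layers: int = 32               # number of transformer blocks
    dim: int = 4096                  # feature (embedding) dimension
    n_heads: int = 32                # attention heads (assumed)
    n_kv_heads: int = 8              # key/value heads for grouped-query attention (assumed)
    ffn_dim_multiplier: float = 1.3  # scales the feed-forward hidden width
    multiple_of: int = 1024          # hidden width is rounded up to a multiple of this (assumed)

    @property
    def head_dim(self) -> int:
        # Each attention head works on an equal slice of the feature dimension.
        return self.dim // self.n_heads


cfg = LlamaConfig8B()
print(cfg.head_dim)  # 128
```

Freezing the dataclass is just a design choice so the configuration cannot be mutated by accident while experimenting.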

When instantiating the Llama class, the variable max_seq_len defines the context window. The class has other parameters, but this is the one relevant to the transformer model. Here max_seq_len is 8K, which means the model can attend over up to 8K tokens at a time.

Next up is the Transformer class, which defines the vocabulary size and the number of layers. The vocabulary size refers to the set of tokens (words and pieces of words) that the model can recognize and process. The layers here are the transformer blocks (each combining an attention layer and a feed-forward layer) used in the model.

Based on these numbers, LlaMA 3 has a vocabulary of 128K tokens, which is quite large. It also has 32 copies of the transformer block.
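To put the 128K vocabulary in perspective, the embedding table holds one 4096-dimensional vector per token. A quick back-of-the-envelope calculation, assuming the exact vocabulary size is 128,256 and that, as in Meta’s reference code, the output projection back to the vocabulary is a separate matrix of the same size:

```python
vocab_size = 128_256  # assumed exact size behind the "128K" figure
dim = 4096            # feature dimension

# Input embedding table: one row of `dim` numbers per vocabulary token.
embedding_params = vocab_size * dim
print(f"{embedding_params:,}")  # 525,336,576

# Input embedding plus a separate (untied) output projection of the same shape.
both = 2 * embedding_params
print(f"{both:,}")  # 1,050,673,152

# Share of a nominal 8B parameters taken by the embedding table alone, in percent.
print(round(embedding_params / 8e9 * 100, 1))  # 6.6
```

So roughly an eighth of the 8B model’s parameters sit in the two vocabulary-sized matrices.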

The feature dimension and the number of attention heads feed into the self-attention module. The feature dimension is the size of each token’s vector in the embedding space, and the attention heads are the parallel query-key (QK) modules that power the self-attention mechanism in the transformer.
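The self-attention step can be sketched in a few lines of NumPy. This is a simplified, single-sequence version with toy sizes (8 tokens, 16 features, 4 heads) and random weights. It includes the causal mask that a decoder-only model like LlaMA 3 uses, but deliberately omits parts of Meta’s implementation such as rotary position embeddings (RoPE) and grouped-query attention. The function name `multi_head_attention` is hypothetical.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    # Subtract the row max for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_attention(x, wq, wk, wv, wo, n_heads):
    """Causal multi-head self-attention over x of shape (seq_len, dim)."""
    seq_len, dim = x.shape
    head_dim = dim // n_heads

    # Split the feature axis into heads: (seq_len, dim) -> (n_heads, seq_len, head_dim).
    def split(t):
        return t.reshape(seq_len, n_heads, head_dim).transpose(1, 0, 2)

    q, k, v = split(x @ wq), split(x @ wk), split(x @ wv)

    # Scaled dot-product scores between every pair of positions, per head.
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(head_dim)

    # Causal mask: position i may only attend to positions <= i.
    mask = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    scores = np.where(mask, -np.inf, scores)

    weights = softmax(scores, axis=-1)  # (n_heads, seq_len, seq_len)
    out = weights @ v                   # (n_heads, seq_len, head_dim)

    # Merge heads back into the feature axis and apply the output projection.
    out = out.transpose(1, 0, 2).reshape(seq_len, dim)
    return out @ wo


rng = np.random.default_rng(0)
seq_len, dim, n_heads = 8, 16, 4
x = rng.standard_normal((seq_len, dim))
wq, wk, wv, wo = (rng.standard_normal((dim, dim)) * 0.1 for _ in range(4))
y = multi_head_attention(x, wq, wk, wv, wo, n_heads)
print(y.shape)  # (8, 16)
```

In the real 8B model the same pattern runs with 4096 features split across 32 heads of 128 dimensions each.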

The hidden dimension appears in the FeedForward class and specifies the width (number of units) of the feed-forward network’s hidden layer. For LlaMA 3, this width is derived from the feature dimension using a multiplier of 1.3 (ffn_dim_multiplier) and works out to 14,336, or 3.5 times the feature dimension of 4096. A larger hidden layer allows the network to create and manipulate richer representations internally before projecting them back down to the smaller output dimension.
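In Meta’s reference code (the llama3 repository’s `model.py`, as I read it), the 1.3 is not applied to the feature dimension directly. The feed-forward layer starts from 4 × dim, takes two-thirds of that (a common convention for SwiGLU layers, which use three weight matrices), multiplies by `ffn_dim_multiplier` = 1.3, and rounds up to a multiple of `multiple_of` (1024 for the 8B model, an assumption worth checking against `params.json`). The sketch below reproduces that arithmetic and pairs it with a toy SwiGLU feed-forward layer; the function names are hypothetical.

```python
import numpy as np


def ffn_hidden_dim(dim: int, multiplier: float = 1.3, multiple_of: int = 1024) -> int:
    hidden = 4 * dim                   # classic 4x expansion
    hidden = int(2 * hidden / 3)       # 2/3 factor used with SwiGLU's three matrices
    hidden = int(multiplier * hidden)  # custom multiplier (ffn_dim_multiplier)
    # Round up to the nearest multiple of `multiple_of`.
    return multiple_of * ((hidden + multiple_of - 1) // multiple_of)


print(ffn_hidden_dim(4096))  # 14336, i.e. 3.5x the feature dimension


def silu(x):
    return x / (1.0 + np.exp(-x))


def swiglu_ffn(x, w1, w2, w3):
    """Feed-forward block applied to every token independently.

    x: (seq_len, dim); w1, w3: (dim, hidden); w2: (hidden, dim).
    """
    # Gated branch (w1 through SiLU) times a linear branch (w3), then back down with w2.
    return (silu(x @ w1) * (x @ w3)) @ w2


rng = np.random.default_rng(0)
dim = 16
hidden = ffn_hidden_dim(dim, multiple_of=8)  # toy sizes, not the real model
x = rng.standard_normal((8, dim))
w1 = rng.standard_normal((dim, hidden))
w3 = rng.standard_normal((dim, hidden))
w2 = rng.standard_normal((hidden, dim))
print(hidden, swiglu_ffn(x, w1, w2, w3).shape)
```

The output has the same shape as the input, which is what lets the blocks be stacked.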

LlaMA 3 chains 32 of these transformer blocks, with the output of each block passing into the next until the last one is reached.

Once we have set all the above pieces in motion, it is time to put it all together and see how they produce the LlaMA effect.

So, what is happening here?

Step 1: First we have our input matrix, of size 8K (context window) × 128K (vocabulary size), with one row per token position. This matrix goes through the embedding step, which maps this high-dimensional matrix to a lower dimension.

Step 2: In this case, the lower dimension is 4096, the feature dimension specified for the LlaMA model, as we saw before. (A reduction from 128K to 4096 is immense and noteworthy.)
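Steps 1 and 2 describe the embedding as multiplying a (context window × vocabulary) matrix by an embedding matrix. If each input row is read as a one-hot vector marking one token, that multiplication is exactly a row lookup, which is how implementations compute it in practice. A toy check with a 10-token vocabulary and 4 features (sizes chosen only for illustration):

```python
import numpy as np

rng = np.random.default_rng(0)
vocab_size, dim = 10, 4             # toy stand-ins for 128K and 4096
embedding = rng.standard_normal((vocab_size, dim))

token_ids = np.array([3, 7, 7, 1])  # a hypothetical 4-token input

# Conceptual view: one one-hot row per token, shape (seq_len, vocab_size) ...
one_hot = np.eye(vocab_size)[token_ids]
via_matmul = one_hot @ embedding    # ... times (vocab_size, dim) gives (seq_len, dim)

# Practical view: just pick the matching rows of the embedding table.
via_lookup = embedding[token_ids]

print(via_matmul.shape, np.allclose(via_matmul, via_lookup))  # (4, 4) True
```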

Step 3: These features go through the Transformer block, where they are processed first by the attention layer and then by the FFN layer. The attention layer mixes information horizontally across tokens (positions), whereas the FFN layer works vertically across the feature dimensions of each token independently.
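The division of labor in Step 3 can be checked numerically: attention mixes information across token positions, while the feed-forward layer transforms each token’s feature vector on its own. In this toy sketch (random weights, a single head, and no causal mask or normalization, all simplifications of the real block), perturbing the first token changes the attention output at every position but changes the feed-forward output only at that first position.

```python
import numpy as np

rng = np.random.default_rng(1)
seq_len, dim, hidden = 5, 8, 16
x = rng.standard_normal((seq_len, dim))
# Small weights keep the softmax from saturating in this toy example.
wq, wk, wv = (rng.standard_normal((dim, dim)) * 0.1 for _ in range(3))
w_in = rng.standard_normal((dim, hidden))
w_out = rng.standard_normal((hidden, dim))


def attention(x):
    # Single-head attention without a mask: every position sees every other one.
    scores = (x @ wq) @ (x @ wk).T / np.sqrt(dim)
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ (x @ wv)


def ffn(x):
    # The same two-layer MLP applied row by row (token by token).
    return np.maximum(x @ w_in, 0.0) @ w_out


x_changed = x.copy()
x_changed[0] += 1.0  # perturb only the first token

attn_diff = np.abs(attention(x) - attention(x_changed)).max(axis=1)
ffn_diff = np.abs(ffn(x) - ffn(x_changed)).max(axis=1)
print(np.all(attn_diff > 1e-6))         # True: every position is affected
print(np.allclose(ffn_diff[1:], 0.0))   # True: only position 0 is affected
```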

Step 4: Step 3 is repeated across all 32 transformer blocks. At the end, the resulting matrix still has the feature dimension of 4096.

Step 5: Finally, this matrix is projected back to the vocabulary size of 128K, so that the model can score every token in its vocabulary and choose the next one.
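Putting Steps 1 to 5 together, the whole forward pass can be sketched with toy sizes. This is a deliberately stripped-down illustration, not Meta’s implementation: each block is a residual attention sublayer followed by a residual feed-forward sublayer, with RMS normalization before each (the real model also uses RMSNorm), but RoPE, grouped-query attention, SwiGLU, learned norm gains and trained weights are left out, and all names are hypothetical. The point is the shapes: token ids become a (seq_len, dim) matrix, which keeps that shape through every block and ends as (seq_len, vocab_size) scores.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab_size, dim, n_layers = 50, 16, 4  # toy stand-ins for 128K, 4096 and 32
seq_len = 6                            # toy input length (must fit the context window)


def rms_norm(x, eps=1e-5):
    # Scale each token vector to unit root-mean-square (learned gain omitted).
    return x / np.sqrt((x ** 2).mean(axis=-1, keepdims=True) + eps)


def causal_attention(x, wq, wk, wv):
    # Single-head causal self-attention: mixes information across tokens.
    scores = (x @ wq) @ (x @ wk).T / np.sqrt(x.shape[-1])
    mask = np.triu(np.ones(scores.shape, dtype=bool), k=1)
    scores = np.where(mask, -np.inf, scores)
    w = np.exp(scores - scores.max(axis=-1, keepdims=True))
    return (w / w.sum(axis=-1, keepdims=True)) @ (x @ wv)


def feed_forward(x, w_in, w_out):
    # Applied to each token independently: mixes information across features.
    return np.maximum(x @ w_in, 0.0) @ w_out


def init_block(scale=0.1):
    # Small random weights keep the toy example numerically tame.
    return {
        "wq": rng.standard_normal((dim, dim)) * scale,
        "wk": rng.standard_normal((dim, dim)) * scale,
        "wv": rng.standard_normal((dim, dim)) * scale,
        "w_in": rng.standard_normal((dim, 4 * dim)) * scale,
        "w_out": rng.standard_normal((4 * dim, dim)) * scale,
    }


embedding = rng.standard_normal((vocab_size, dim))    # Steps 1-2
blocks = [init_block() for _ in range(n_layers)]      # Steps 3-4
output_proj = rng.standard_normal((dim, vocab_size))  # Step 5


def forward(token_ids):
    h = embedding[token_ids]                          # (seq_len, dim)
    for b in blocks:
        h = h + causal_attention(rms_norm(h), b["wq"], b["wk"], b["wv"])
        h = h + feed_forward(rms_norm(h), b["w_in"], b["w_out"])
        assert h.shape == (len(token_ids), dim)       # shape preserved per block
    return rms_norm(h) @ output_proj                  # (seq_len, vocab_size)


tokens = rng.integers(0, vocab_size, size=seq_len)
logits = forward(tokens)
print(logits.shape)          # (6, 50)
print(logits[-1].argmax())   # index of the highest-scoring next token
```

With trained weights, the last row of `logits` is what the model turns into its next-token prediction.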

And that, in essence, is how LlaMA 3 scores high on those benchmarks and creates the LlaMA 3 effect.

LlaMA 3 was released in two model sizes, 8B and 70B parameters, to serve a wide range of use cases. In addition to achieving state-of-the-art performance on standard benchmarks, Meta also developed a new and rigorous human-evaluation set. Meta promises to release better and stronger versions of the model, including multilingual and multimodal ones. Newer and larger models with over 400B parameters are coming soon (early reports here show them already beating benchmarks with a score increase of almost 20% over LlaMA 3).

However, it is worth stressing that in spite of all the upcoming changes and updates, one thing will remain the same: the foundation of it all, the transformer architecture and the transformer block that enable this incredible technical advancement.

It could be a coincidence that the LlaMA models were named this way, but in the legends of the Andes, real llamas have always been revered for their strength and wisdom. That is not very different from the GenAI ‘LlaMA’ models.

So, let’s follow along on this exciting journey through the GenAI Andes while keeping in mind the foundation that powers these large language models!

P.S. If you would like to work through this exercise on your own, here is a link to a blank template for your use.

Blank Template for hand-exercise

Now go have fun and create some LlaMA 3 effect!

Deep Dive into LlaMA 3 by Hand ✍️ was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.

Originally appeared here:

Deep Dive into LlaMA 3 by Hand ✍️

Go Here to Read this Fast! Deep Dive into LlaMA 3 by Hand ✍️

While hierarchies are a familiar concept with data, some sources deliver their data in an unusual format. Let’s see a not-so-unusual case.

Originally appeared here:

On handling precalculated hierarchical data in Power BI

Go Here to Read this Fast! On handling precalculated hierarchical data in Power BI