Originally appeared here:

Create a generative AI–powered custom Google Chat application using Amazon Bedrock

Originally appeared here:

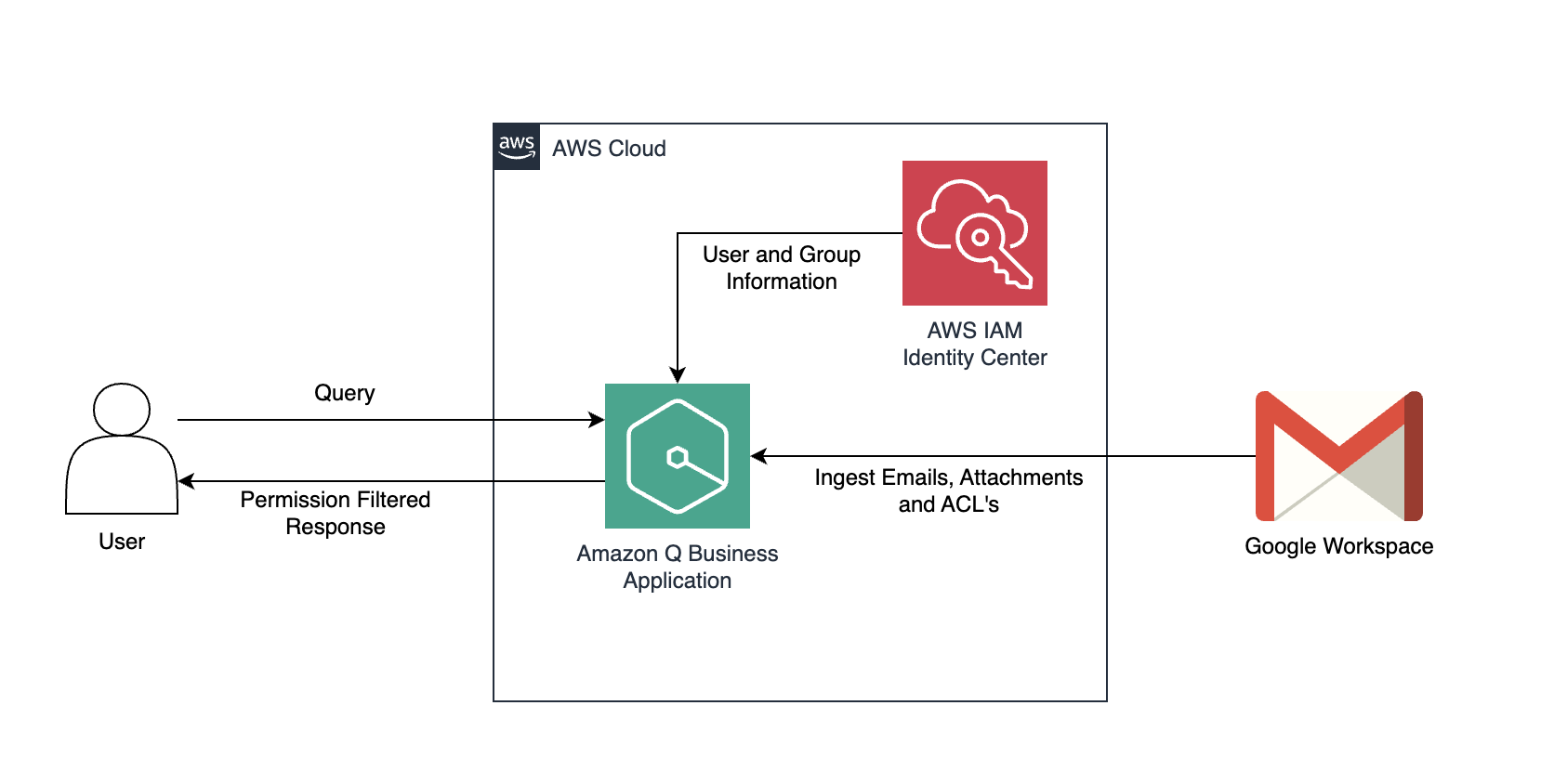

Discover insights from Gmail using the Gmail connector for Amazon Q Business

Originally appeared here:

Accelerate custom labeling workflows in Amazon SageMaker Ground Truth without using AWS Lambda

Techniques for data cleaning, transformation, and validation to ensure quality data

Originally appeared here:

Practical Guide to Data Analysis and Preprocessing


Feeling inspired to write your first TDS post? We’re always open to contributions from new authors.

We seem to be in that sweet spot on the calendar between the end of summer and the final rush before things slow down for the holiday season—in other words, it’s the perfect time of year for learning, tinkering, and exploration.

Our most-read articles from October reflect this spirit of focused energy, covering a slew of hands-on topics. From actionable AI project ideas and data science revenue streams to accessible guides on time-series analysis and LLMs, these stories do a great job representing the breadth of our authors’ expertise and the diversity of their (and our readers’) interests. If you haven’t read them yet, what better time than now?

Every month, we’re thrilled to see a fresh group of authors join TDS, each sharing their own unique voice, knowledge, and experience with our community. If you’re looking for new writers to explore and follow, just browse the work of our latest additions, including David Foutch, Robin von Malottki, Ruth Crasto, Stéphane Derosiaux, Rodrigo Nader, Tezan Sahu, Robson Tigre, Charles Ide, Aamir Mushir Khan, Aneesh Naik, Alex Held, caleb lee, Benjamin Bodner, Vignesh Baskaran, Ingo Nowitzky, Trupti Bavalatti, Sarah Lea, Felix Germaine, Marc Polizzi, Aymeric Floyrac, Bárbara A. Cancino, Hattie Biddlecombe, Carlo Peron, Minda Myers, Marc Linder, Akash Mukherjee, Jake Minns, Leandro Magga, Jack Vanlightly, Rohit Patel, Ben Hagag, Lucas See, Max Shap, Fhilipus Mahendra, Prakhar Ganesh, and Maxime Jabarian.

Thank you for supporting the work of our authors! We love publishing articles from new authors, so if you’ve recently written an interesting project walkthrough, tutorial, or theoretical reflection on any of our core topics, don’t hesitate to share it with us.

Until the next Variable,

TDS Team

LLM Evaluation, AI Side Projects, User-Friendly Data Tables, and Other October Must-Reads was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.

Originally appeared here:

LLM Evaluation, AI Side Projects, User-Friendly Data Tables, and Other October Must-Reads

Build a Marimo notebook using NetworkX Python library, uncovering the hidden structures in Victor Hugo’s masterpiece

Originally appeared here:

Les Misérables Social Network Analysis Using Marimo Notebooks and the NetworkX Python library️⚔️

We hear a lot about productionized machine learning, but what does it really mean to have a model that can thrive in real-world applications? Plenty of things contribute to the efficacy of a machine learning model in production. For the sake of this article, we will focus on five of them.

The most important part of building a production-ready machine learning model is being able to access it.

For this purpose, we build a FastAPI service that serves sentiment analysis responses. We use Pydantic to enforce structure for the input and output. The model is the default sentiment analysis pipeline from Hugging Face's transformers library, which lets us begin testing with a pre-trained model.

# Filename: main.py
from fastapi import FastAPI
from pydantic import BaseModel
from transformers import pipeline

app = FastAPI()

classifier = pipeline("sentiment-analysis")


class TextInput(BaseModel):
    text: str


class SentimentOutput(BaseModel):
    text: str
    sentiment: str
    score: float


@app.post("/predict", response_model=SentimentOutput)
async def predict_sentiment(input_data: TextInput):
    result = classifier(input_data.text)[0]
    return SentimentOutput(
        text=input_data.text,
        sentiment=result["label"],
        score=result["score"]
    )

To ensure that our work is reproducible, we can use a requirements.txt file and pip.

# Filename: requirements.txt

# Note: This has all required packages for the final result.

fastapi==0.68.1

uvicorn==0.15.0

transformers==4.30.0

torch==2.0.0

pydantic==1.10.0

numpy==1.24.3

sentencepiece==0.1.99

protobuf==3.20.3

prometheus-client==0.17.1

To install these, create and activate a virtual environment (venv) in your project directory and run: pip install -r requirements.txt.

To host this API, simply run: uvicorn main:app --reload.

Now you have an API that you can query using:

curl -X POST "http://localhost:8000/predict" \
  -H "Content-Type: application/json" \
  -d '{"text": "I love using FastAPI!"}'

or any API tool you wish (e.g., Postman). You should get a result back that includes the text query, the predicted sentiment, and the confidence of the prediction.
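The same query can also be issued from Python. The following is a minimal sketch using only the standard library; the helper names `build_predict_request` and `predict` are hypothetical, and it assumes the API is reachable at http://localhost:8000 as in the curl example.

```python
import json
import urllib.request


def build_predict_request(text, base_url="http://localhost:8000"):
    """Build (but do not send) a POST request for the /predict endpoint."""
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/predict",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def predict(text, base_url="http://localhost:8000"):
    """Send the request and decode the JSON reply (requires the API to be running)."""
    request = build_predict_request(text, base_url)
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.loads(response.read().decode("utf-8"))
```

With the server running, `predict("I love using FastAPI!")` should return a dictionary with the `text`, `sentiment`, and `score` fields defined by `SentimentOutput`.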

We will be using GitHub for CI/CD later, so I would recommend initializing and using git in this directory.

We now have a locally hosted machine learning inference API.

To allow our code to have more consistent execution, we will utilize Docker. Docker simulates a lightweight environment that allows applications to run in isolated containers, similar to virtual machines. This isolation ensures that applications can execute consistently across any computer with Docker installed, regardless of the underlying system.

Firstly, set up Docker for your given operating system.

# Filename: Dockerfile

# Use the official Python 3.9 slim image as the base

FROM python:3.9-slim

# Set the working directory inside the container to /app

WORKDIR /app

# Copy the requirements.txt file to the working directory

COPY requirements.txt .

# Install the Python dependencies listed in requirements.txt

RUN pip install -r requirements.txt

# Copy the main application file (main.py) to the working directory

COPY main.py .

# Define the command to run the FastAPI application with Uvicorn

CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "8000"]

At this point, you should have the directory as below.

your-project/

├── Dockerfile

├── requirements.txt

└── main.py

Now, you can build the image and run this API using:

# Build the Docker image

docker build -t sentiment-api .

# Run the container

docker run -p 8000:8000 sentiment-api

You should now be able to query just as you did before.

curl -X POST "http://localhost:8000/predict" \
  -H "Content-Type: application/json" \
  -d '{"text": "I love using FastAPI!"}'

We now have a containerized, locally hosted machine learning inference API.

In machine learning applications, monitoring is crucial for understanding model performance and ensuring it meets expected accuracy and efficiency. Tools like Prometheus help track metrics such as prediction latency, request counts, and model output distributions, enabling you to identify issues like model drift or resource bottlenecks. This proactive approach ensures that your ML models remain effective over time and can adapt to changing data or usage patterns. In our case, we are focused on prediction time, requests, and gathering information about our queries.

# Filename: main.py
from fastapi import FastAPI
from pydantic import BaseModel
from transformers import pipeline
from prometheus_client import Counter, Histogram, start_http_server
import time

# Start prometheus metrics server on port 8001
start_http_server(8001)

app = FastAPI()

# Same pre-trained pipeline as before
classifier = pipeline("sentiment-analysis")

# Metrics
PREDICTION_TIME = Histogram('prediction_duration_seconds', 'Time spent processing prediction')
REQUESTS = Counter('prediction_requests_total', 'Total requests')
SENTIMENT_SCORE = Histogram('sentiment_score', 'Histogram of sentiment scores', buckets=[0.0, 0.25, 0.5, 0.75, 1.0])


class TextInput(BaseModel):
    text: str


class SentimentOutput(BaseModel):
    text: str
    sentiment: str
    score: float


@app.post("/predict", response_model=SentimentOutput)
async def predict_sentiment(input_data: TextInput):
    REQUESTS.inc()
    start_time = time.time()

    result = classifier(input_data.text)[0]
    score = result["score"]

    SENTIMENT_SCORE.observe(score)  # Record the sentiment score
    PREDICTION_TIME.observe(time.time() - start_time)

    return SentimentOutput(
        text=input_data.text,
        sentiment=result["label"],
        score=score
    )
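To make the `buckets` argument of the score histogram more concrete, here is a pure-Python sketch of cumulative bucket counting, which is how Prometheus histograms report their counts (each bucket counts every observation less than or equal to its upper bound, plus a catch-all +Inf bucket). The function name `cumulative_bucket_counts` and the sample scores are made up for illustration; this is not the prometheus_client implementation.

```python
import math


def cumulative_bucket_counts(observations, upper_bounds):
    """Count observations per cumulative bucket (value <= upper bound)."""
    bounds = sorted(upper_bounds) + [math.inf]  # catch-all bucket at +Inf
    return {
        bound: sum(1 for value in observations if value <= bound)
        for bound in bounds
    }


# Hypothetical sentiment scores, using the same bucket bounds as above
scores = [0.1, 0.3, 0.6, 0.99]
counts = cumulative_bucket_counts(scores, [0.0, 0.25, 0.5, 0.75, 1.0])
for bound, count in counts.items():
    print(f"le={bound}: {count}")
```

Because the buckets are cumulative, the count for the last finite bound equals the total number of observations whenever all scores fall at or below it.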

While the process of building and fine-tuning a model is not the intent of this project, it is important to understand how a model can be added to this process.

# Filename: train.py
# Note: requires the datasets package, which is not listed in requirements.txt
import torch
from transformers import AutoTokenizer, AutoModelForSequenceClassification
from datasets import load_dataset
from torch.utils.data import DataLoader


def train_model():
    # Load dataset
    full_dataset = load_dataset("stanfordnlp/imdb", split="train")
    dataset = full_dataset.shuffle(seed=42).select(range(10000))

    model_name = "distilbert-base-uncased"
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForSequenceClassification.from_pretrained(model_name, num_labels=2)
    optimizer = torch.optim.AdamW(model.parameters(), lr=2e-5)

    # Use GPU if available
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    model.to(device)
    model.train()

    # Create a DataLoader for batching
    dataloader = DataLoader(dataset, batch_size=8, shuffle=True)

    # Training loop
    num_epochs = 3  # Set the number of epochs
    for epoch in range(num_epochs):
        total_loss = 0
        for batch in dataloader:
            inputs = tokenizer(batch["text"], truncation=True, padding=True, return_tensors="pt", max_length=512).to(device)
            # The default collate function already yields a tensor of labels
            labels = torch.as_tensor(batch["label"]).to(device)

            optimizer.zero_grad()
            outputs = model(**inputs, labels=labels)
            loss = outputs.loss
            loss.backward()
            optimizer.step()
            total_loss += loss.item()

        avg_loss = total_loss / len(dataloader)
        print(f"Epoch {epoch + 1}/{num_epochs}, Loss: {avg_loss:.4f}")

    # Save the model
    model.save_pretrained("./model/")
    tokenizer.save_pretrained("./model/")

    # Test the model with sample sentences
    test_sentences = [
        "This movie was fantastic!",
        "I absolutely hated this film.",
        "It was just okay, not great.",
        "An absolute masterpiece!",
        "Waste of time!",
        "A beautiful story and well acted.",
        "Not my type of movie.",
        "It could have been better.",
        "A thrilling adventure from start to finish!",
        "Very disappointing."
    ]

    # Switch model to evaluation mode
    model.eval()

    # Prepare tokenizer for test inputs
    inputs = tokenizer(test_sentences, truncation=True, padding=True, return_tensors="pt", max_length=512).to(device)

    with torch.no_grad():
        outputs = model(**inputs)
        predictions = torch.argmax(outputs.logits, dim=1)

    # Print predictions
    for sentence, prediction in zip(test_sentences, predictions):
        sentiment = "positive" if prediction.item() == 1 else "negative"
        print(f'Input: "{sentence}" -> Predicted sentiment: {sentiment}')


# Call the function to train the model and test it
train_model()
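The training script turns logits into a class with argmax, while a classification pipeline additionally reports a score for the chosen class. As a rough sketch of that relationship, assuming a softmax over two logits and the generic label names LABEL_0 and LABEL_1 (both assumptions for illustration, not taken from the script), the mapping could look like this:

```python
import math


def softmax(logits):
    """Convert raw logits to probabilities that sum to 1."""
    highest = max(logits)
    exps = [math.exp(x - highest) for x in logits]  # subtract max for stability
    total = sum(exps)
    return [e / total for e in exps]


def label_and_score(logits, labels=("LABEL_0", "LABEL_1")):
    """Return the arg-max label together with its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


print(label_and_score([2.0, -1.0]))   # logits leaning towards class 0
print(label_and_score([-0.5, 1.5]))   # logits leaning towards class 1
```

Under these assumptions, the score belongs to the predicted class, so with two classes it can never fall below 0.5, whichever label wins.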

To make sure that we can query the model we have trained, we have to update a few of our existing files. For instance, in main.py we now load the model from ./model as a pretrained model. Additionally, for comparison's sake, we now have two endpoints: /predict/naive and /predict/trained.

# Filename: main.py
from fastapi import FastAPI
from pydantic import BaseModel
from transformers import AutoModelForSequenceClassification, AutoTokenizer
from transformers import pipeline
from prometheus_client import Counter, Histogram, start_http_server
import time

# Start prometheus metrics server on port 8001
start_http_server(8001)

app = FastAPI()

# Load the trained model and tokenizer from the local directory
model_path = "./model"  # Path to your saved model
tokenizer = AutoTokenizer.from_pretrained(model_path)
trained_model = AutoModelForSequenceClassification.from_pretrained(model_path)

# Create pipelines (device=-1 runs on CPU)
naive_classifier = pipeline("sentiment-analysis", device=-1)
trained_classifier = pipeline("sentiment-analysis", model=trained_model, tokenizer=tokenizer, device=-1)

# Metrics
PREDICTION_TIME = Histogram('prediction_duration_seconds', 'Time spent processing prediction')
REQUESTS = Counter('prediction_requests_total', 'Total requests')
SENTIMENT_SCORE = Histogram('sentiment_score', 'Histogram of sentiment scores', buckets=[0.0, 0.25, 0.5, 0.75, 1.0])


class TextInput(BaseModel):
    text: str


class SentimentOutput(BaseModel):
    text: str
    sentiment: str
    score: float


@app.post("/predict/naive", response_model=SentimentOutput)
async def predict_naive_sentiment(input_data: TextInput):
    REQUESTS.inc()
    start_time = time.time()

    result = naive_classifier(input_data.text)[0]
    score = result["score"]

    SENTIMENT_SCORE.observe(score)  # Record the sentiment score
    PREDICTION_TIME.observe(time.time() - start_time)

    return SentimentOutput(
        text=input_data.text,
        sentiment=result["label"],
        score=score
    )


@app.post("/predict/trained", response_model=SentimentOutput)
async def predict_trained_sentiment(input_data: TextInput):
    REQUESTS.inc()
    start_time = time.time()

    result = trained_classifier(input_data.text)[0]
    score = result["score"]

    SENTIMENT_SCORE.observe(score)  # Record the sentiment score
    PREDICTION_TIME.observe(time.time() - start_time)

    return SentimentOutput(
        text=input_data.text,
        sentiment=result["label"],
        score=score
    )

We also must update our Dockerfile to include our model files.

# Filename: Dockerfile

FROM python:3.9-slim

WORKDIR /app

COPY requirements.txt .

RUN pip install -r requirements.txt

COPY main.py .

COPY ./model ./model

CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "8000"]

Importantly, if you are using git, make sure that you add the pytorch_model.bin file to Git LFS so that you can push to GitHub. Git LFS lets you keep very large files under version control.

CI/CD and testing streamline the deployment of machine learning models by ensuring that code changes are automatically integrated, tested, and deployed, which reduces the risk of errors and enhances model reliability. This process promotes continuous improvement and faster iteration cycles, allowing teams to deliver high-quality, production-ready models more efficiently. Firstly, we create two very basic tests to ensure that our model is performing acceptably.

# Filename: test_model.py
from fastapi.testclient import TestClient

from main import app

client = TestClient(app)


def test_positive_sentiment():
    response = client.post(
        "/predict/trained",
        json={"text": "This is amazing!"}
    )
    assert response.status_code == 200
    data = response.json()
    assert data["sentiment"] == "LABEL_1"
    assert data["score"] > 0.5


def test_negative_sentiment():
    response = client.post(
        "/predict/trained",
        json={"text": "This is terrible!"}
    )
    assert response.status_code == 200
    data = response.json()
    assert data["sentiment"] == "LABEL_0"
    # The score is the confidence in the predicted label (here LABEL_0),
    # so it should also be above 0.5 for a negative prediction
    assert data["score"] > 0.5

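To test your code, run pytest or python -m pytest from the project directory. The tests use FastAPI's TestClient, which calls the app in-process, so the server does not need to be running. In fact, because importing main.py starts the Prometheus metrics server on port 8001, a copy of the API already running on the same machine can cause a port conflict.

Rather than relying on manual runs, we will add CI/CD (continuous integration and continuous delivery) so that the tests run automatically whenever we push to GitHub.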

# Filename: .github/workflows/ci_cd.yml
name: CI/CD

on: [push]

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout code
        uses: actions/checkout@v2
        with:
          lfs: true

      - name: Set up Python
        uses: actions/setup-python@v2
        with:
          python-version: '3.9'

      - name: Install dependencies
        run: |
          pip install -r requirements.txt
          pip install pytest httpx

      - name: Run tests
        run: pytest

Our final project structure should appear as below.

sentiment-analysis-project/
├── .github/
│   └── workflows/
│       └── ci_cd.yml
├── model/
├── test_model.py
├── main.py
├── Dockerfile
├── requirements.txt
└── train.py

Now, whenever we push to GitHub, it will run an automated process that checks out the code, sets up a Python 3.9 environment, installs dependencies, and runs our tests using pytest.

In this project, we’ve developed a production-ready sentiment analysis API that highlights key aspects of deploying machine learning models. While it doesn’t encompass every facet of the field, it provides a representative sampling of essential tasks involved in the process. By examining these components, I hope to clarify concepts you may have encountered but weren’t quite sure how they fit together in a practical setting.

Minimum Viable MLE was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.

Originally appeared here:

Minimum Viable MLE

Step-by-Step Instructions for Constructing a Dataset of PubMed-Listed Publications on Cardiovascular Disease Research

Originally appeared here:

Building a PubMed Dataset

Storage accounts play a vital role in a medallion architecture for establishing an enterprise data lake. They act as a centralized repository, enabling seamless data exchange between producers and consumers. This setup empowers consumers to perform data science tasks and build machine learning (ML) models. Furthermore, consumers can use the data for Retrieval Augmented Generation (RAG), facilitating interaction with company data through Large Language Models (LLMs) like ChatGPT.

Highly sensitive data is typically stored in the storage account. Defense-in-depth measures must be in place before data scientists and ML pipelines can access the data. Defense in depth requires multiple measures, such as 1) advanced threat protection to detect malware, 2) authentication using Microsoft Entra, 3) authorization for fine-grained access control, 4) an audit trail to monitor access, 5) data exfiltration prevention, 6) encryption, and last but not least 7) network access control using service endpoints or private endpoints.

This article focuses on network access control of the storage account. In the next chapter, the different concepts of storage account network access are explained (demystified). Following that, a hands-on comparison is made between service endpoints and private endpoints. Finally, a conclusion is drawn.

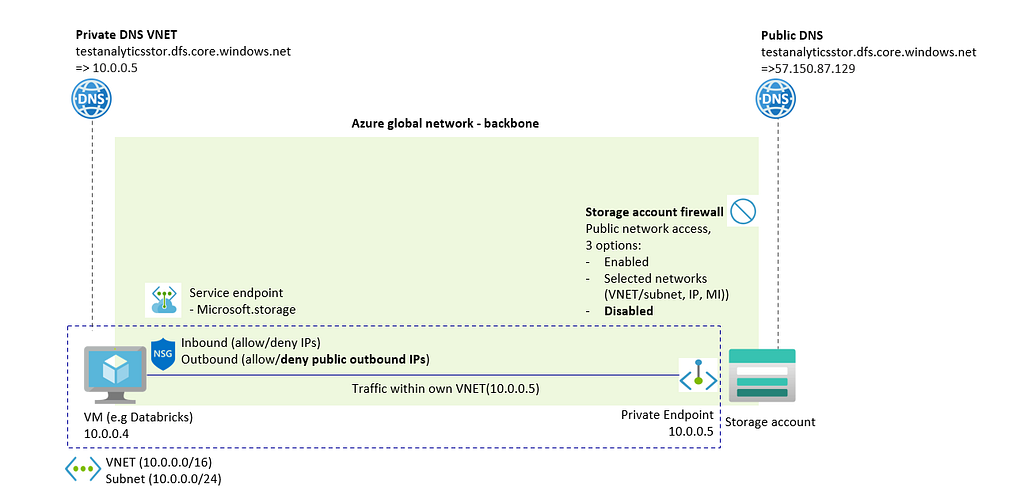

A typical scenario is that a virtual machine needs to have network access to a storage account. This virtual machine often acts as a Spark cluster to analyze data from the storage account. The image below provides an overview of the available network access controls.

The components in the image can be described as follows:

Azure global network (backbone): Traffic between two regions always goes over the Azure backbone (unless the customer explicitly forces it not to), see also "Microsoft global network - Azure | Microsoft Learn". This holds regardless of which firewall rule is used in the storage account and regardless of whether service endpoints or private endpoints are used.

Azure storage firewalls: Firewall rules can restrict or disable public access. Common rules include whitelisting VNET/subnet, public IP addresses, system-assigned managed identities as resource instances, or allowing trusted services. When a VNET/subnet is whitelisted, the Azure Storage account identifies the traffic’s origin and its private IP address. However, the storage account itself is not integrated into the VNET/subnet — private endpoints are needed for that purpose.

Public DNS storage account: Storage accounts always have a public DNS name that can be accessed via network tooling, see also "Azure Storage Account - Public Access Disabled - but still some level of connectivity" on Microsoft Q&A. That is, even when public access is disabled in the storage account firewall, the public DNS name remains.

Virtual Network (VNET): Network in which virtual machines are deployed. While a storage account is never deployed within a VNET, the VNET can be whitelisted in the Azure storage firewall. Alternatively, the VNET can create a private endpoint for secure, private connectivity.

Service endpoints: When whitelisting a VNET/subnet in the storage account firewall, the service endpoint must be turned on for the VNET/subnet. The service endpoint should be Microsoft.Storage when the VNET and storage account are in the same region, or Microsoft.Storage.Global when they are in different regions. Note that the term "service endpoints" is also used as an overarching term, encompassing both the whitelisting of a VNET/subnet in the Azure storage firewall and the enabling of the service endpoint on the VNET/subnet.

Private endpoints: A private endpoint places a Network Interface Card (NIC) for the storage account within the VNET where the virtual machine operates. This assigns the storage account a private IP address, making it part of the VNET.

Private DNS storage account: Within a VNET, a private DNS zone can be created in which the storage account DNS name resolves to the private endpoint. This ensures that the virtual machine can still connect to the URL of the storage account, and that this URL resolves to a private IP address rather than a public address.

Network Security Group (NSG): Deploy an NSG to limit inbound and outbound access of the VNET where the virtual machine runs. This can prevent data exfiltration. However, an NSG works only with IP addresses or tags, not with URLs. For more advanced data exfiltration protection, use an Azure Firewall. For simplicity, the article omits this and uses NSG to block outbound traffic.
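The role of the private DNS zone described above can be illustrated with a toy lookup. This is a simplified sketch and not how Azure DNS is implemented: the account name, both IP addresses, the VNET range, and the `resolve` helper are all hypothetical.

```python
import ipaddress

# Hypothetical values for illustration only
VNET_RANGE = ipaddress.ip_network("10.0.0.0/16")
PUBLIC_DNS = {"mystorageacct.blob.core.windows.net": "203.0.113.10"}
PRIVATE_DNS_ZONE = {"mystorageacct.blob.core.windows.net": "10.0.1.5"}


def resolve(hostname, inside_vnet):
    """Prefer the private zone when the caller sits in a VNET linked to it."""
    if inside_vnet and hostname in PRIVATE_DNS_ZONE:
        return PRIVATE_DNS_ZONE[hostname]
    return PUBLIC_DNS[hostname]


def stays_in_vnet(ip):
    """Check whether an address belongs to the VNET address range."""
    return ipaddress.ip_address(ip) in VNET_RANGE


host = "mystorageacct.blob.core.windows.net"
print(resolve(host, inside_vnet=True), stays_in_vnet(resolve(host, inside_vnet=True)))
print(resolve(host, inside_vnet=False), stays_in_vnet(resolve(host, inside_vnet=False)))
```

The same URL is used in both cases; only the address it resolves to differs, which is why the virtual machine does not need to change its connection string when a private endpoint is introduced.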

In the next chapter, service endpoints and private endpoints are discussed.

The chapter begins by exploring the scenario of unrestricted network access. Then the details of service endpoints and private endpoints are discussed with practical examples.

Suppose a scenario in which a virtual machine and a storage account are created. The firewall of the storage account has public access enabled, see image below.

Using this configuration, the virtual machine can access the storage account over the network. Since the virtual machine is also deployed in Azure, traffic will go over the Azure backbone and will be accepted, see image below.

Enterprises typically establish firewall rules to limit network access. This involves either disabling public access entirely or allowing access only from selected, whitelisted networks. The image below illustrates public access being disabled and traffic being blocked by the firewall.

In the next paragraph, service endpoints and selected network firewall rules are used to grant network access to storage account again.

To give the VNET of the virtual machine access to the storage account, activate the service endpoint on the VNET. Use Microsoft.Storage within the same region or Microsoft.Storage.Global across regions. Next, whitelist the VNET/subnet in the storage account firewall. Traffic is then accepted, see also image below.

When the VNET/subnet is removed from the Azure storage account firewall or public access is disabled, traffic is blocked again.
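The firewall behavior described so far can be summarized as a small decision function. This is a toy model under stated assumptions, not Azure's implementation: the `PublicAccess` enum, the `is_accepted` function, and the subnet names are hypothetical, and private endpoint traffic is deliberately left out.

```python
from enum import Enum


class PublicAccess(Enum):
    ALL_NETWORKS = "all"            # public access enabled
    SELECTED_NETWORKS = "selected"  # only whitelisted VNETs/subnets
    DISABLED = "disabled"           # public access disabled


def is_accepted(mode, allowed_subnets, source_subnet, service_endpoint_enabled):
    """Decide whether traffic from a subnet reaches the storage account."""
    if mode is PublicAccess.ALL_NETWORKS:
        return True
    if mode is PublicAccess.DISABLED:
        return False  # private endpoints are not modeled here
    # Selected networks: the subnet needs the service endpoint AND a whitelist entry
    return service_endpoint_enabled and source_subnet in allowed_subnets


allowed = {"vnet-vm/subnet-spark"}  # hypothetical subnet identifier
print(is_accepted(PublicAccess.SELECTED_NETWORKS, allowed, "vnet-vm/subnet-spark", True))
print(is_accepted(PublicAccess.SELECTED_NETWORKS, set(), "vnet-vm/subnet-spark", True))
print(is_accepted(PublicAccess.DISABLED, allowed, "vnet-vm/subnet-spark", True))
```

The three calls mirror the three situations in the text: whitelisted subnet with service endpoint, subnet removed from the firewall, and public access disabled.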

If an NSG is used to block public outbound IPs in the VNET of the virtual machine, traffic is also blocked again. This is because the storage account is still reached through its public DNS name, which resolves to a public IP address, see also image below.

In that case, private endpoints must be used to make sure that traffic does not leave the VNET. This is discussed in the next chapter.

To reestablish network access for the virtual machine to the storage account, use a private endpoint. This action creates a network interface card (NIC) for the storage account within the VNET of the virtual machine, ensuring that traffic remains within the VNET. The image below provides further illustration.

Again, an NSG can be used to block all traffic, see image below.

This is however counterintuitive, since first a private endpoint is created in the VNET and then traffic is blocked by NSG in the same VNET.
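To see how an NSG can both permit and block traffic to a private endpoint, consider the following toy evaluation of outbound rules. It assumes the general NSG behavior that rules are processed in priority order (lowest number first) and that the first match decides; the rule values, the IP addresses, and the `default_allow` fallback are hypothetical simplifications.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class OutboundRule:
    priority: int     # lower number is evaluated first
    destination: str  # CIDR prefix
    allow: bool


def evaluate_outbound(rules, destination_ip, default_allow=True):
    """Return the decision of the first matching rule in priority order."""
    ip = ipaddress.ip_address(destination_ip)
    for rule in sorted(rules, key=lambda r: r.priority):
        if ip in ipaddress.ip_network(rule.destination):
            return rule.allow
    return default_allow


# Keep traffic inside the VNET, block everything else
vnet_only = [
    OutboundRule(priority=100, destination="10.0.0.0/16", allow=True),
    OutboundRule(priority=200, destination="0.0.0.0/0", allow=False),
]
# Block all outbound traffic, including the private endpoint
block_all = [OutboundRule(priority=100, destination="0.0.0.0/0", allow=False)]

print(evaluate_outbound(vnet_only, "10.0.1.5"))      # private endpoint IP
print(evaluate_outbound(vnet_only, "203.0.113.10"))  # public IP
print(evaluate_outbound(block_all, "10.0.1.5"))      # private endpoint IP
```

Note that the rules only ever see IP prefixes, never URLs, which matches the NSG limitation mentioned earlier.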

Enterprises typically require network rules to limit network access to their storage accounts. In this blog post, both service endpoints and private endpoints are considered as ways to limit access.

The following is true for both service endpoints and private endpoints:

For service endpoints, the following hold:

For private endpoints, the following hold:

There are many other things to consider when choosing between service endpoints and private endpoints: costs, migration effort (service endpoints have been available longer than private endpoints), networking complexity when using private endpoints, limited service endpoint support in newer Azure services, and the hard limit of 200 private endpoints per storage account.

However, if it is a hard requirement ("must have") that 1) traffic never leaves the VNET/subnet of the virtual machine, or 2) no firewall rules may be created in the Azure storage firewall and it must stay locked down, then service endpoints are not feasible.

In other scenarios, it’s possible to consider both solutions, and the best fit should be determined based on the specific requirements of each scenario.

Demystifying Azure Storage Account network access was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.

Originally appeared here:

Demystifying Azure Storage Account network access
