Dog Poop Compass

A Bayesian analysis of canine business

tl;dr

Do dogs poop facing North and South? Turns out they do! Want to learn how to measure this at home using a compass app, Bayesian statistics, and one dog (dog not included)? Jump in!

Introduction

This is my dog. His name is Auri and he is a 5 year old Cavalier King Charles Spaniel.

As many other dog owners, during our walks I noticed that Auri has a very particular ritual when he needs to go to the bathroom. When he finds a perfect spot he starts circling around something like a compass.

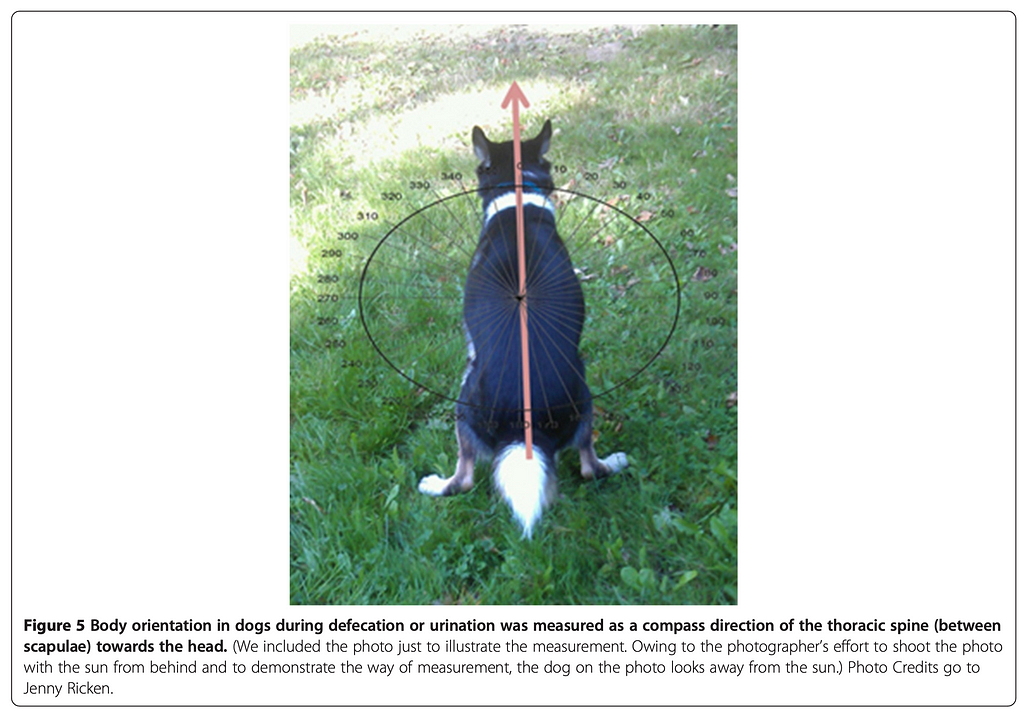

At first I was simply amused by this behaviour. After all, who knows what goes through a dog’s mind? But after a while I remembered reading a research paper from 2013 titled “Dogs are sensitive to small variations of the Earth’s magnetic field”. The research was conducted on a relatively large sample of dogs and confirmed that “dogs preferred to excrete with the body being aligned along the north-south axis”.

Now that could be an interesting research to try out! I thought to myself. And how lucky that I have a perfect test subject, a lovely canine of my own. I decided to replicate the findings and confirm (or debunk!) the hypothesis with Auri, my N=1 unsuspecting research participant.

And so began my long journey of data collection spanning multiple months and capturing over 150 of “alignment sessions” if you catch my drift.

Data Collection

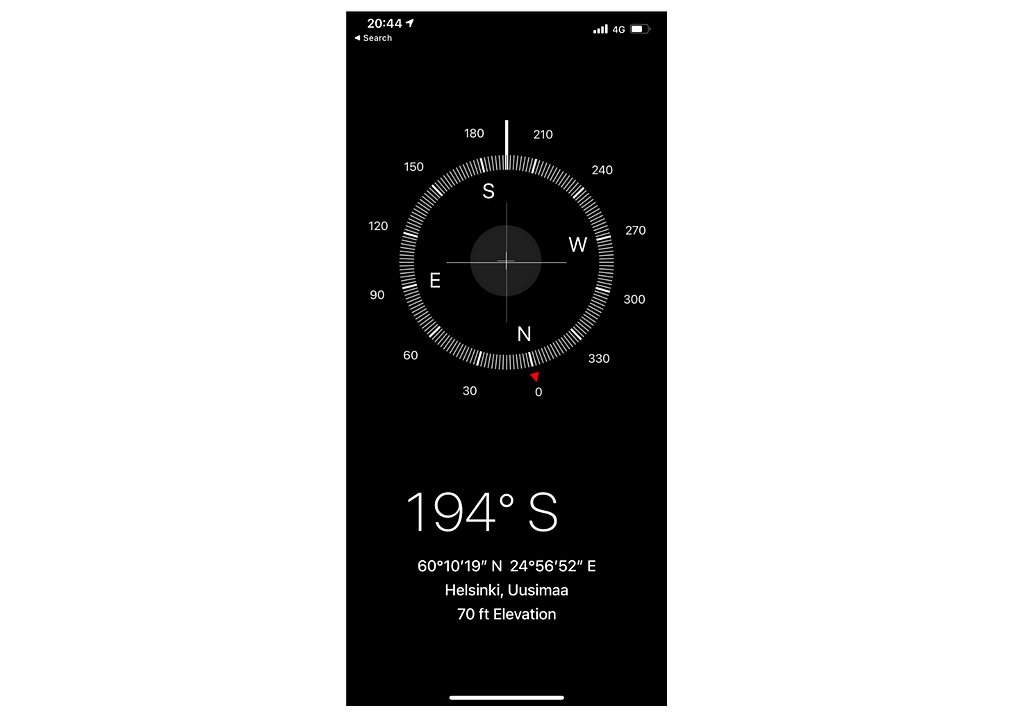

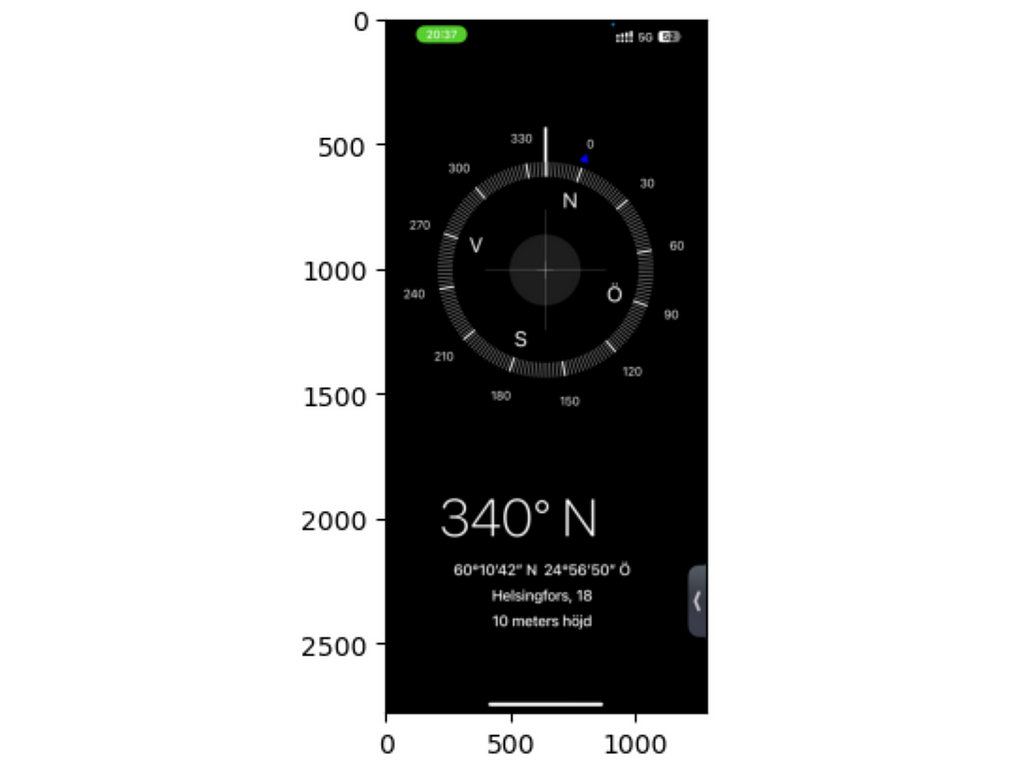

For my study, I needed to have a compass measurement for every time Auri pooped. Thank to modern technological advancements we not only have a calculator app for iPad but also a fairly accurate compass on a phone. And that’s what I decided to use.

The approach was very simple. Every time my dog settled to have some private time I opened the compass app, aligned my phone along Auri’s body and took a screenshot. In the original paper the authors eloquently called the alignment as a compass direction of the thoracic spine (between scapulae) towards the head. Very scientific. All it means is that the compass arrow is supposed to point at the same direction as dog’s head.

Anyways, that’s what I did in total 150 something times over the course of a few months. I could almost sense the passer bys’ confusion mixed with curiosity as I was seemingly taking pictures of all my dog’s relief acts. But was it worth it? Let’s find out!

Analysis

I’ll briefly discuss the data extraction and preprocessing here and then jump straight to working with circular distributions and hypothesis testing.

As always, all the code can be found on my GitHub here: Data Wondering.

How to process app screenshots?

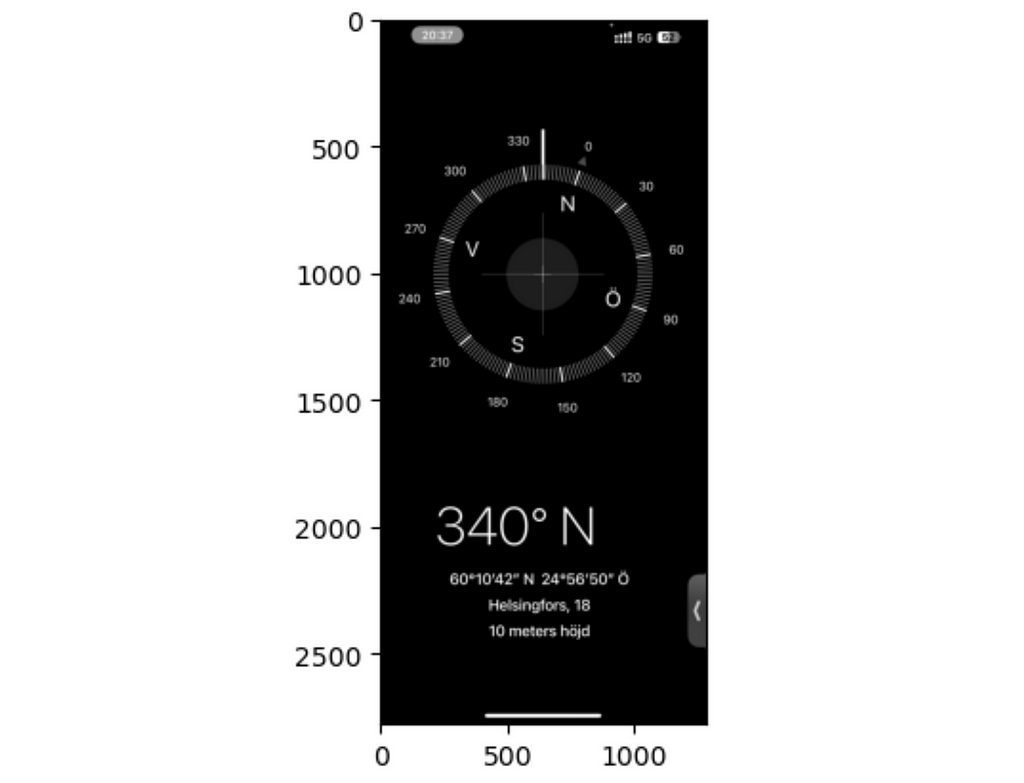

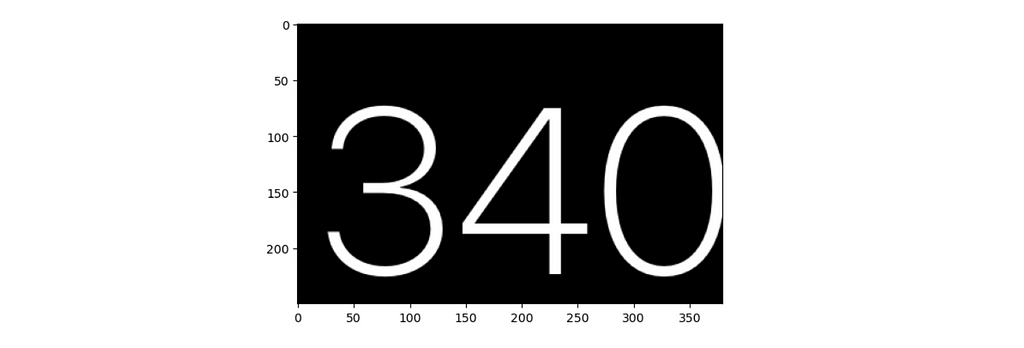

After data collection I had a series of screenshots of the compass app:

Being lazy as I am I didn’t feel like scrolling through all of them patiently writing down the compass degrees. So I decided to send all those images to my notebook and automate the process.

The task was simple — all I needed from the images was the big numbers at the bottom of the screen. Fortunately, there are a lot of small pretrained networks out there that can do basic OCR (stands for Optical character recognition). I opted for an easyocr package which was a bit on a slower end but free and easy to work with.

I’ll walk you through a quick example of using easyocr alongside with opencv to extract the numbers from a single screenshot.

First, let’s load the image and display it:

import cv2

import os

image_dir = '../data/raw/'

img = cv2.imread(image_dir + 'IMG_6828.png')

plt.imshow(img)

plt.show()

Next, I convert the image to greyscale to remove any color information and make it less noisy:

gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

plt.imshow(gray, cmap='gray')

plt.show()

And then I zoom in on the area of interest:

Finally, I use easyocr to extract the numbers from the image. It does extract both the number and the confidence score but I am only interested in the number.

import easyocr

reader = easyocr.Reader(['en'])

result = reader.readtext(gray[1850:2100, 200:580])

for bbox, text, prob in result:

print(f"Detected text: {text} with confidence {prob}")

>> Detected text: 340 with confidence 0.999995182215476

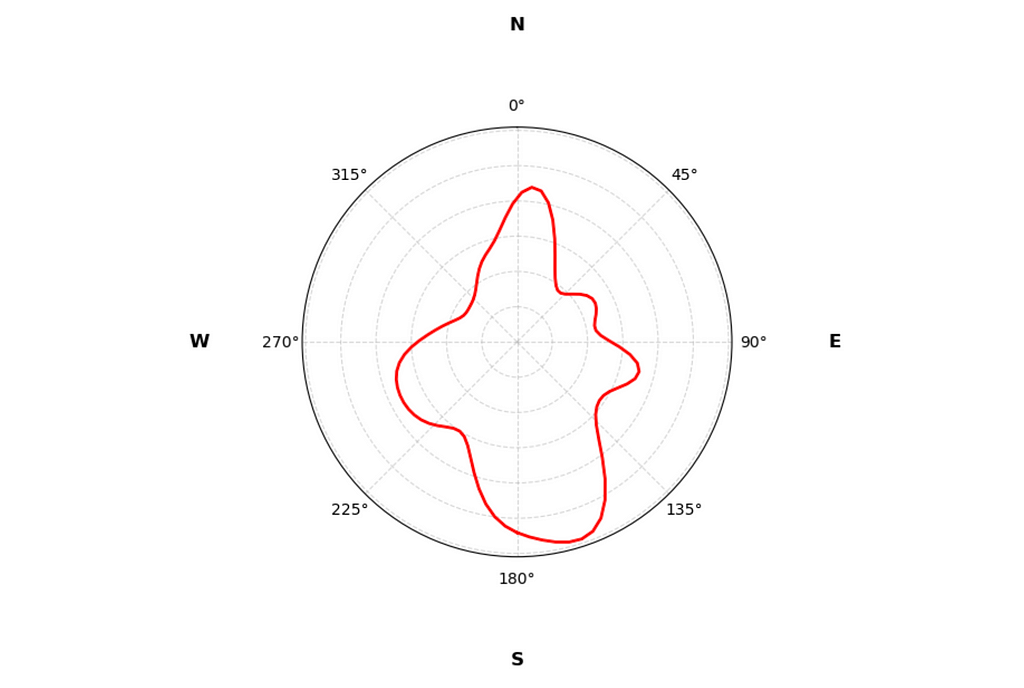

And that’s it! I wrote a simple for loop to iterate through all the screenshots and saved the results to a CSV file.

Here’s a link to the full preprocessing notebook: Data Preprocessing.

Spinning the wheel: Circular Distributions

I don’t usually work with circular distributions so I had to do some reading. Unlike regular data that we are used to, circular data has a peculiar property: the “ends” of the distribution are connected.

For example, if you take the distribution of hours in a day, you would find that the distance between 23:00 and 00:00 is the same as between 00:00 and 01:00. Or, in the case of compass degrees, the distance between 359° and 0° is the same as between 0° and 1°.

Even calculating a sample mean is not straightforward. A standard arithmetic mean between 360° and 0° would give 180° although both 360° and 0° point to the exact same direction.

Similarly in my case, I got almost perfectly opposite estimates when calculating the mean in an arithmetic and in correct way. I converted the degrees to radians using a helper function from this nice library: pingouin and calculated the mean using the circ_mean function.

from pingouin import circ_mean

arithmetic_mean = data['radians'].mean()

circular_mean = circ_mean(data['radians'])

print(f"Arithmetic mean: {arithmetic_mean:.3f}; Circular mean: {circular_mean:.3f}")

>> Arithmetic mean: 0.082; Circular mean: 2.989

Next, I wanted to visualize the compass distribution. I used the Von Mises distribution to model the circular data and drew the polar plot using matplotlib.

The Von Mises distribution is the circular analogue of the normal distribution. It is defined by two parameters: the mean location μ and the concentration κ. The concentration parameter controls the spread and is analogous to the inverse of the variance. When κ is 0 the distribution is uniform, as κ increases the distribution contracts around the mean.

Let’s import the necessary libraries and define the helper functions:

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

from scipy.stats import vonmises

from pingouin import convert_angles

from typing import Tuple, List

def vonmises_kde(series: np.ndarray, kappa: float, n_bins: int = 100) -> Tuple[np.ndarray, np.ndarray]:

"""

Estimate a von Mises kernel density estimate (KDE) over circular data using scipy.

Parameters:

series: np.ndarray

The input data in radians, expected to be a 1D array.

kappa: float

The concentration parameter for the von Mises distribution.

n_bins: int

The number of bins for the KDE estimate (default is 100).

Returns:

bins: np.ndarray

The bin edges (x-values) used for the KDE.

kde: np.ndarray

The estimated density values (y-values) for each bin.

"""

bins = np.linspace(-np.pi, np.pi, n_bins)

kde = np.zeros(n_bins)

for angle in series:

kde += vonmises.pdf(bins, kappa, loc=angle)

kde = kde / len(series)

return bins, kde

def plot_circular_distribution(

data: pd.DataFrame,

plot_type: str = 'kde',

bins: int = 30,

figsize: tuple = (4, 4),

**kwargs

) -> None:

"""

Plot a compass rose with either KDE or histogram for circular data.

Parameters:

-----------

data: pd.DataFrame

DataFrame containing 'degrees'and 'radians' columns with circular data

plot_type: str

Type of plot to create: 'kde' or 'histogram'

bins: int

Number of bins for histogram or smoothing parameter for KDE

figsize: tuple

Figure size as (width, height)

**kwargs: dict

Additional styling arguments for histogram (color, edgecolor, etc.)

"""

plt.figure(figsize=figsize)

ax = plt.subplot(111, projection='polar')

ax.set_theta_zero_location('N')

ax.set_theta_direction(-1)

# add cardinal directions

directions = ['N', 'E', 'S', 'W']

angles = [0, np.pi / 2, np.pi, 3 * np.pi / 2]

for direction, angle in zip(directions, angles):

ax.text(

angle, 0.45, direction,

horizontalalignment='center',

verticalalignment='center',

fontsize=12,

weight='bold'

)

if plot_type.lower() == 'kde':

x, kde = vonmises_kde(data['radians'].values, bins)

ax.plot(x, kde, color=kwargs.get('color', 'red'), lw=2)

elif plot_type.lower() == 'histogram':

hist_kwargs = {

'color': 'teal',

'edgecolor': 'black',

'alpha': 0.7

}

hist_kwargs.update(kwargs)

angles_rad = np.deg2rad(data['degrees'].values)

counts, bin_edges = np.histogram(

angles_rad,

bins=bins,

range=(0, 2*np.pi),

density=True

)

widths = np.diff(bin_edges)

ax.bar(

bin_edges[:-1],

counts,

width=widths,

align='edge',

**hist_kwargs

)

else:

raise ValueError("plot_type must be either 'kde' or 'histogram'")

ax.xaxis.grid(True, linestyle='--', alpha=0.5)

ax.yaxis.grid(True, linestyle='--', alpha=0.5)

ax.set_yticklabels([])

plt.show()

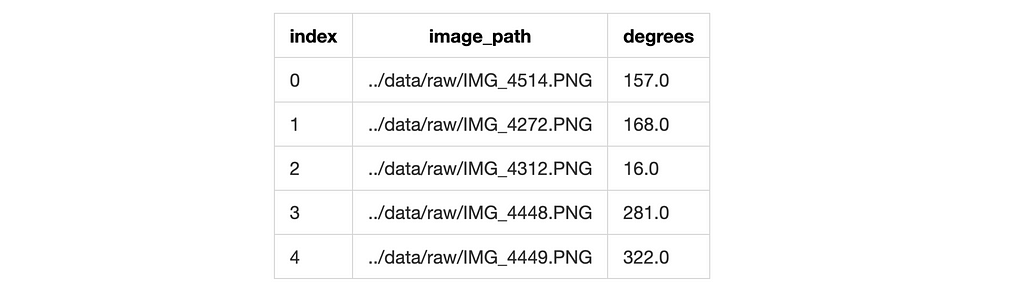

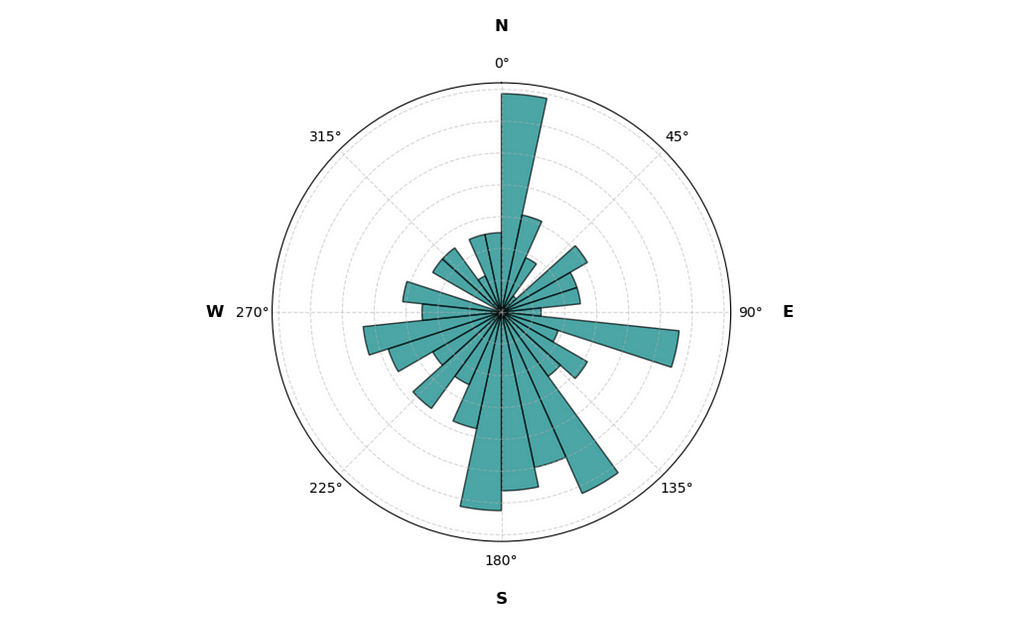

Now let’s load the data and plot the charts:

data = pd.read_csv('../data/processed/compass_degrees.csv', index_col=0)

data['radians'] = convert_angles(data['degrees'], low=0, high=360)

plot_circular_distribution(data, plot_type='histogram', figsize=(6, 6))

plot_circular_distribution(data, plot_type='kde', figsize=(5, 5))

From the histogram it’s clear that Auri has his preferences when choosing the relief direction. There’s a clear spike towards the North and a dip towards the South. Nice!

With the KDE plot we get a smoother representation of the distribution. And the good news is that it is very far from being a uniform circle.

It’s time to validate it statistically!

Statistically Significant Poops

Just as circular data requires special treatment for visualizations and distributions, it also requires special statistical tests.

I’ll be using a few tests from the pingouin library I already mentioned earlier. The first test I’ll use is the Rayleigh test which is a test for uniformity of circular data. The null hypothesis claims that the data is uniformly distributed around the circle and the alternative is that it is not.

from pingouin import circ_rayleigh

z, pval = circ_rayleigh(data['radians'])

print(f"Z-statistics: {z:.3f}; p-value: {pval:.6f}")

>> Z-statistics: 3.893; p-value: 0.020128

Good news, everyone! The p-value is less than 0.05 and we reject the null hypothesis. Auri’s strategic poop positioning is not random!

The only downside is that the test assumes that the distribution has only one mode and the data is sampled from a von Mises distribution. Oh well, let’s try something else then. Auri’s data clearly has multiple modes.

The next on the list is the V-test. This test checks if the data is non-uniform with a specified mean direction. From the documentation we get that:

The V test has more power than the Rayleigh test and is preferred if there is reason to believe in a specific mean direction.

Perfect! Let’s try it.

From the distribution it’s clear that Auri prefers the South direction above all. I’ll set the mean direction to π radians (South) and run the test.

from pingouin import circ_vtest

v, pval = circ_vtest(data['radians'], dir=np.pi)

print(f"V-statistics: {v:.3f}; p-value: {pval:.6f}")

>> V-statistics: 24.127; p-value: 0.002904

Now we’re getting somewhere! The p-value is close to zero and we reject the null hypothesis. Auri is a statistically significant South-facing pooper!

Bayesian Poops: Math Part

And now for something completely different. Let’s try a Bayesian approach.

To start, I decided to see how estimate of the mean direction changes as the sample size increased. The idea is simple: I’ll start with a circular uniform prior distribution and update it with every new data point.

We’ll need to define a few things, so let’s get down to math. If you’re not a fan of equations, feel free to skip to the next section with cool visualizations!

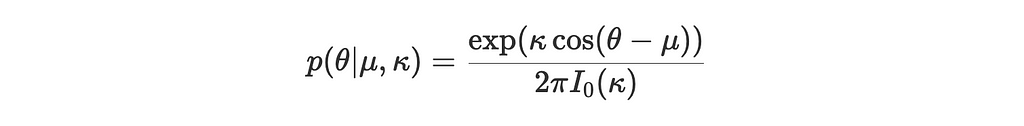

1. The von Mises Distribution

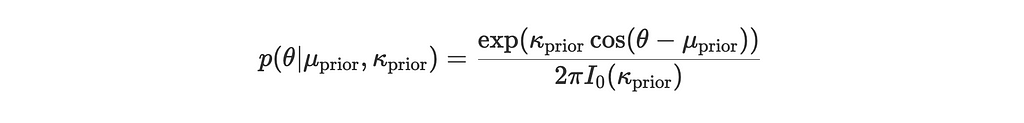

The von Mises distribution p(θ∣μ,κ) has a probability density function given by:

where:

- μ is the mean direction

- κ is the concentration parameter (analogous to the inverse of the variance in a normal distribution)

- I₀(κ) is the modified Bessel function of the first kind, ensuring the distribution is normalized

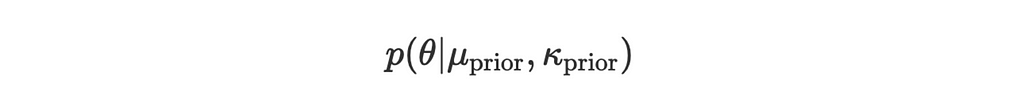

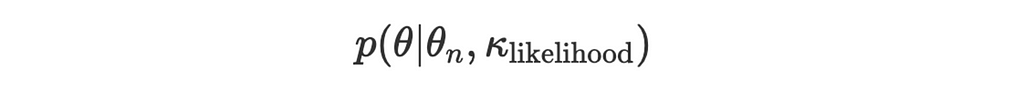

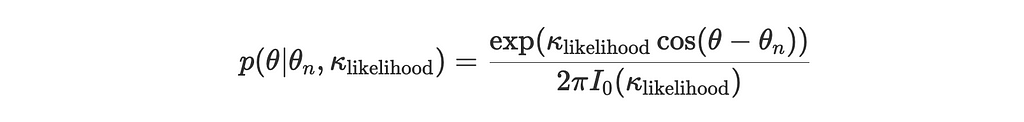

2. Prior and Likelihood

Suppose we have:

- Prior distribution:

- Likelihood for a new observation θₙ:

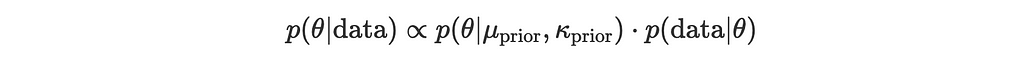

We want to update our prior using Bayes’ theorem:

where

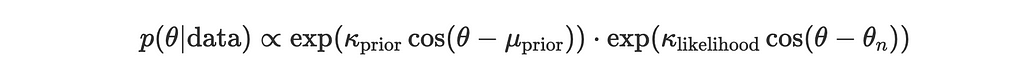

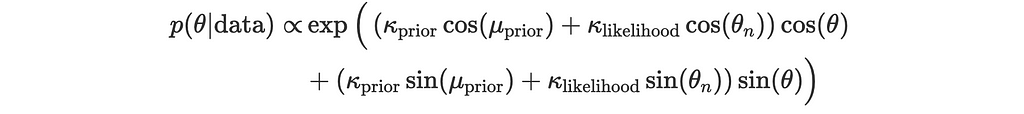

3. Multiply the Prior and Likelihood in the von Mises Form

The product of two von Mises distributions with parameters (μ1, κ1) and (μ2, κ2) leads to another von Mises distribution with updated parameters. Let’s go through the steps:

Given:

and

the posterior is proportional to the product:

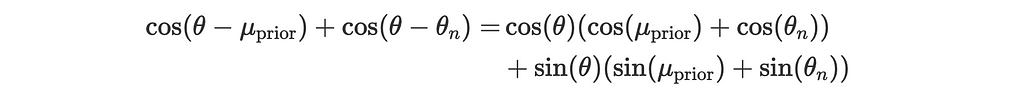

Using the trigonometric identity for the sum of cosines:

This becomes:

4. Convert to Polar Form for the Posterior

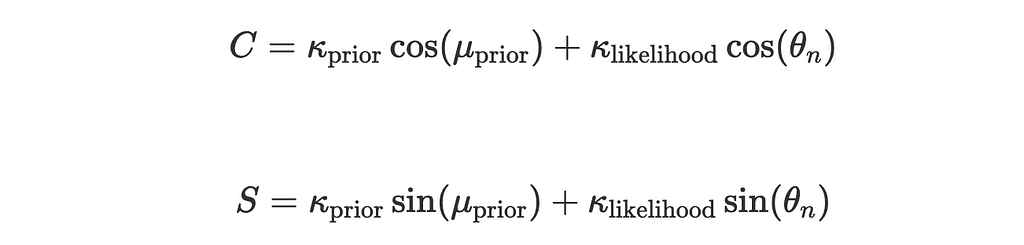

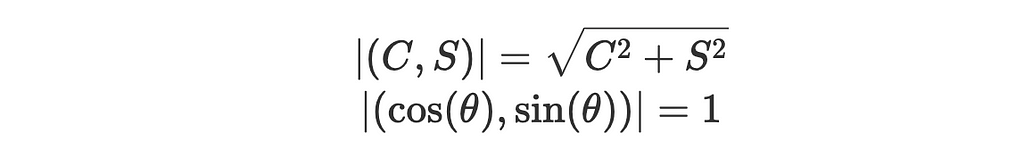

Final stretch! The expression above is a von Mises distribution in disguise. We can rewrite it in the polar form to estimate the updated mean direction and concentration parameter.

Let:

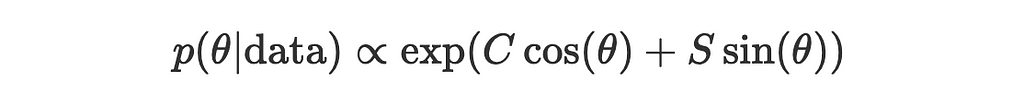

Now the expression for posterior simplifies to:

Let’s pause here and take a closer look at the simplified expression.

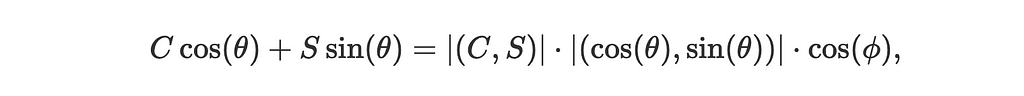

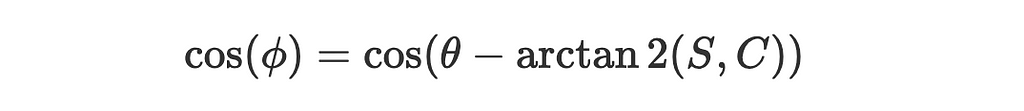

- Notice that C cos(θ)+S sin(θ) is the dot product of two vectors (C,S) and (cos(θ),sin(θ)) which we can represent as:

where ϕ is the angle between the vectors (C,S) and (cos(θ),sin(θ)).

2. The magnitudes of the vectors are:

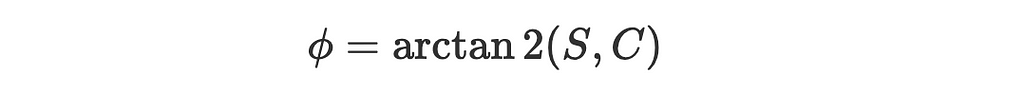

3. The angle between the vector (cos(θ),sin(θ)) and a positive x-axis is just θ and between (C,S) and a positive x-axis is, by definition:

4. So the angle between the two vectors is:

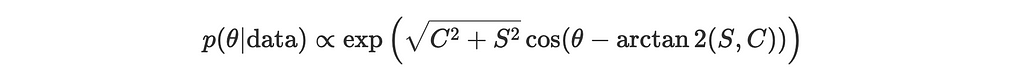

Substitute our findings back into the simplified expression for the posterior:

or

where

- kappa_post is the posterior concentration parameter:

- mu_post is the posterior mean direction

Yay, we made it! The posterior is also a von Mises distribution with updated parameters (mu_post, kappa_post). Now we can update the prior with every new observation and see how the mean direction changes.

Bayesian Poops: Fun Part

Welcome back to those of you who skipped the math and congratulations to those who made it through! Let’s code the Bayesian update and vizualize the results.

First, let’s define the helper functions for visualizing the posterior distribution. We’ll need to it to create a nice animation later on.

import imageio

from io import BytesIO

def get_posterior_distribution_image_array(

mu_grid: np.ndarray,

posterior_pdf: np.ndarray,

current_samples: List[float],

idx: int,

fig_size: Tuple[int, int],

dpi: int,

r_max_posterior: float

) -> np.ndarray:

"""

Creates the posterior distribution and observed samples histogram on a polar plot,

converts it to an image array, and returns it for GIF processing.

Parameters:

-----------

mu_grid (np.ndarray):

Grid of mean direction values for plotting the posterior PDF.

posterior_pdf (np.ndarray):

Posterior probability density function values for the given `mu_grid`.

current_samples (List[float]):

List of observed angle samples in radians.

idx (int):

The current step index, used for labeling the plot.

fig_size (Tuple[int, int]):

Size of the plot figure (width, height).

dpi (int):

Dots per inch (resolution) for the plot.

r_max_posterior (float):

Maximum radius for the posterior PDF plot, used to set plot limits.

Returns:

np.ndarray: Image array of the plot in RGB format, suitable for GIF processing.

"""

fig = plt.figure(figsize=fig_size, dpi=dpi)

ax = plt.subplot(1, 1, 1, projection='polar')

ax.set_theta_zero_location('N')

ax.set_theta_direction(-1)

ax.plot(mu_grid, posterior_pdf, color='red', linewidth=2, label='Posterior PDF')

# observed samples histogram

n_bins = 48

hist_bins = np.linspace(-np.pi, np.pi, n_bins + 1)

hist_counts, _ = np.histogram(current_samples, bins=hist_bins)

# normalize the histogram counts

if np.max(hist_counts) > 0:

hist_counts_normalized = hist_counts / np.max(hist_counts)

else:

hist_counts_normalized = hist_counts

bin_centers = (hist_bins[:-1] + hist_bins[1:]) / 2

bin_width = hist_bins[1] - hist_bins[0]

# set the maximum radius to accommodate both the posterior pdf and histogram bars

r_histogram_height = r_max_posterior * 0.9

r_max = r_max_posterior + r_histogram_height

ax.set_ylim(0, r_max)

# plot the histogram bars outside the circle

for i in range(len(hist_counts_normalized)):

theta = bin_centers[i]

width = bin_width

hist_height = hist_counts_normalized[i] * r_histogram_height

if hist_counts_normalized[i] > 0:

ax.bar(

theta, hist_height, width=width, bottom=r_max_posterior,

color='teal', edgecolor='black', alpha=0.5

)

ax.text(

0.5, 1.1, f'Posterior Distribution (Step {idx + 1})',

transform=ax.transAxes, ha='center', va='bottom', fontsize=18

)

ax.set_yticklabels([])

ax.grid(linestyle='--')

ax.yaxis.set_visible(False)

ax.spines['polar'].set_visible(False)

plt.subplots_adjust(top=0.85, bottom=0.05, left=0.05, right=0.95)

# saving to buffer for gif processing

buf = BytesIO()

plt.savefig(buf, format='png', bbox_inches=None, pad_inches=0)

buf.seek(0)

img_array = plt.imread(buf)

img_array = (img_array * 255).astype(np.uint8)

plt.close(fig)

return img_array

Now we’re ready to write the update loop. Remember that we need to set our prior distribution. I’ll start with a circular uniform distribution which is equivalent to a von Mises distribution with a concentration parameter of 0. For the kappa_likelihood I set a fixed moderate concentration parameter of 2. That’ll make the posterior update more visible.

# initial prior parameters

mu_prior = 0.0 # initial mean direction (any value, since kappa_prior = 0)

kappa_prior = 0.0 # uniform prior over the circle

# fixed concentration parameter for the likelihood

kappa_likelihood = 2.0

posterior_mus = []

posterior_kappas = []

mu_grid = np.linspace(-np.pi, np.pi, 200)

# vizualisation parameters

fig_size = (10, 10)

dpi = 100

current_samples = []

frames = []

for idx, theta_n in enumerate(data['radians']):

# compute posterior parameters

C = kappa_prior * np.cos(mu_prior) + kappa_likelihood * np.cos(theta_n)

S = kappa_prior * np.sin(mu_prior) + kappa_likelihood * np.sin(theta_n)

kappa_post = np.sqrt(C**2 + S**2)

mu_post = np.arctan2(S, C)

# posterior distribution

posterior_pdf = np.exp(kappa_post * np.cos(mu_grid - mu_post)) / (2 * np.pi * i0(kappa_post))

# store posterior parameters and observed samples

posterior_mus.append(mu_post)

posterior_kappas.append(kappa_post)

current_samples.append(theta_n)

# plot posterior distribution

r_max_posterior = max(posterior_pdf) * 1.1

img_array = get_posterior_distribution_image_array(

mu_grid,

posterior_pdf,

current_samples,

idx,

fig_size,

dpi,

r_max_posterior

)

frames.append(img_array)

# updating priors for next iteration

mu_prior = mu_post

kappa_prior = kappa_post

# Create GIF

fps = 10

frames.extend([img_array]*fps*3) # repeat last frame a few times to make a "pause" at the end of the GIF

imageio.mimsave('../images/posterior_updates.gif', frames, fps=fps)

And that’s it! The code will generate a GIF showing the posterior distribution update with every new observation. Here’s the glorious result:

With every new observation the posterior distribution gets more and more concentrated around the true mean direction. If only I could replace the red line with Auri’s silhouette, it would be perfect!

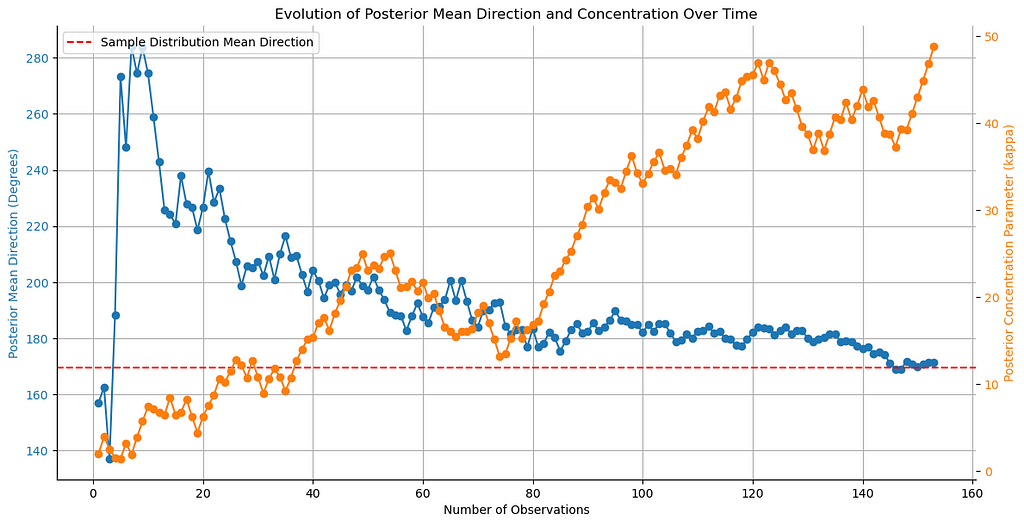

We can further visualize the history of the posterior mean direction and concentration parameter. Let’s plot them:

# Convert posterior_mus to degrees

posterior_mus_deg = np.rad2deg(posterior_mus) % 360

n_samples = data.shape[0]

true_mu = data['degrees'].mean()

# Plot evolution of posterior mean direction

fig, ax1 = plt.subplots(figsize=(12, 6))

color = 'tab:blue'

ax1.set_xlabel('Number of Observations')

ax1.set_ylabel('Posterior Mean Direction (Degrees)', color=color)

ax1.plot(range(1, n_samples + 1), posterior_mus_deg, marker='o', color=color)

ax1.tick_params(axis='y', labelcolor=color)

ax1.axhline(true_mu, color='red', linestyle='--', label='Sample Distribution Mean Direction')

ax1.legend(loc='upper left')

ax1.grid(True)

ax2 = ax1.twinx() # instantiate a second axes that shares the same x-axis

color = 'tab:orange'

ax2.set_ylabel('Posterior Concentration Parameter (kappa)', color=color) # we already handled the x-label with ax1

ax2.plot(range(1, n_samples + 1), posterior_kappas, marker='o', color=color)

ax2.tick_params(axis='y', labelcolor=color)

fig.tight_layout() # otherwise the right y-label is slightly clipped

sns.despine()

plt.title('Evolution of Posterior Mean Direction and Concentration Over Time')

plt.show()

The plot shows how the posterior mean direction and concentration parameter evolve with every new observation. The mean direction eventually converges to the sample value, while the concentration parameter increases, as the estimate becomes more certain.

Bayes Factor: PyMC for Doggy Compass

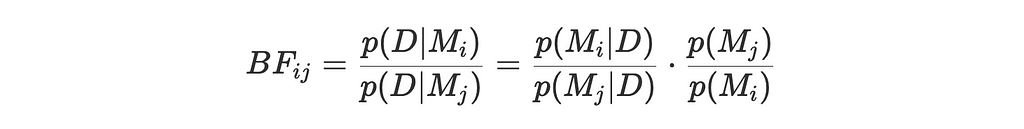

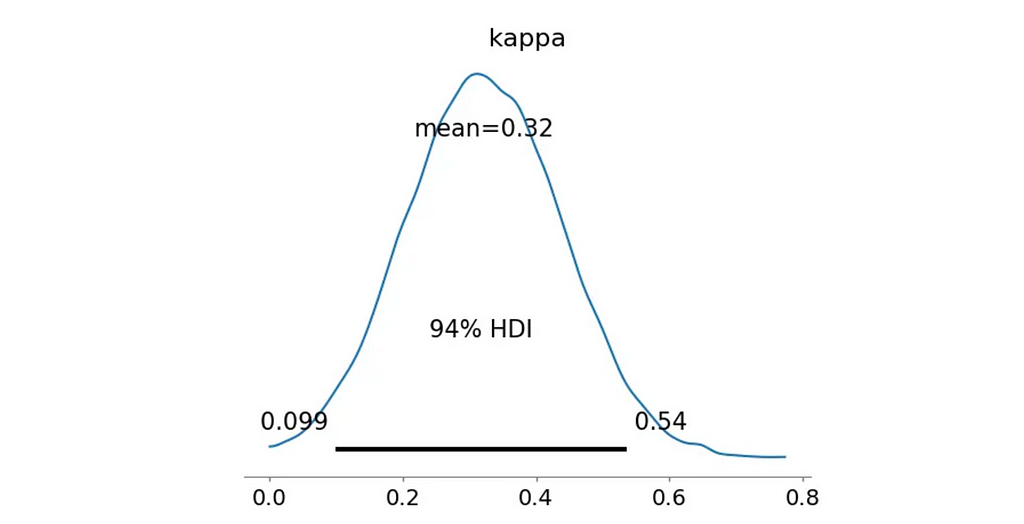

The last thing I wanted to try was to use the Bayes Factor approach for hypothesis testing. The idea behind the Bayes Factor is very simple: it’s the ratio of two marginal likelihoods for two competing hypotheses/models.

In general, the Bayes Factor is defined as:

where:

- p(D∣Mi) and p(D∣Mj) are the marginal likelihoods of the data under the i and j hypothesis

- p(Mi∣D) and p(Mj∣D) are the posterior probabilities of the models given the data

- p(Mi) and p(Mj) are the prior probabilities of the models

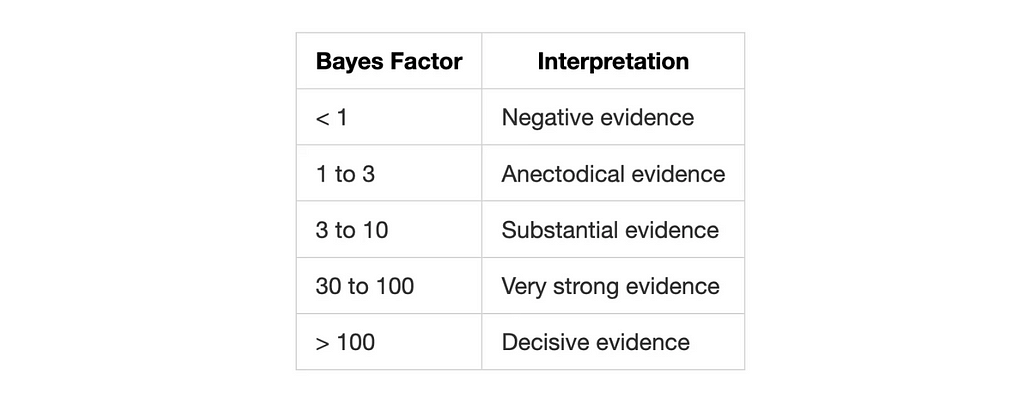

The result is a number that tells us how much more likely one hypothesis is compared to the other. There are different approaches to interpret the Bayes Factor, but a common one is to use the Jeffreys’ scale by Harold Jeffreys:

What are the models you might ask? Simple! They are distributions with different parameters. I’ll be using PyMC to define the models and sample posterior distributions from them.

First of all, let’s re-itroduce the null hypothesis. I still assume it’s a circular uniform Von Mises distribution with kappa=0 but this time we need to calculate the likelihood of the data under this hypothesis. To simplify further calculations, we’ll be calculating log-likelihoods.

# Calculate log likelihood for H0

log_likelihood_h0 = vonmises.logpdf(data['radians'], kappa=0, loc=0).sum()

Next, it’s time to build the alternative model. Starting with a simple scenario of a Unimodal South direction, where I assume that the distribution is concentrated around 180° or π in radians.

Unimodal South

Let’s define the model in PyMC. We’ll use Von Mises distribution with a fixed location parameter μ=π and a Half-Normal prior for the non-negative concentration parameter κ. This allows the model to learn the concentration parameter from the data and check if the South direction is preferred.

import pymc as pm

import arviz as az

import arviz.data.inference_data as InferenceData

from scipy.stats import halfnorm, gaussian_kde

with pm.Model() as model_uni:

# Prior for kappa

kappa = pm.HalfNormal('kappa', sigma=10)

# Likelihood

likelihood_h1 = pm.VonMises('angles', mu=np.pi, kappa=kappa, observed=data['radians'])

# Sample from posterior

trace_uni = pm.sample(

10000, tune=3000, chains=4,

return_inferencedata=True,

idata_kwargs={'log_likelihood': True})

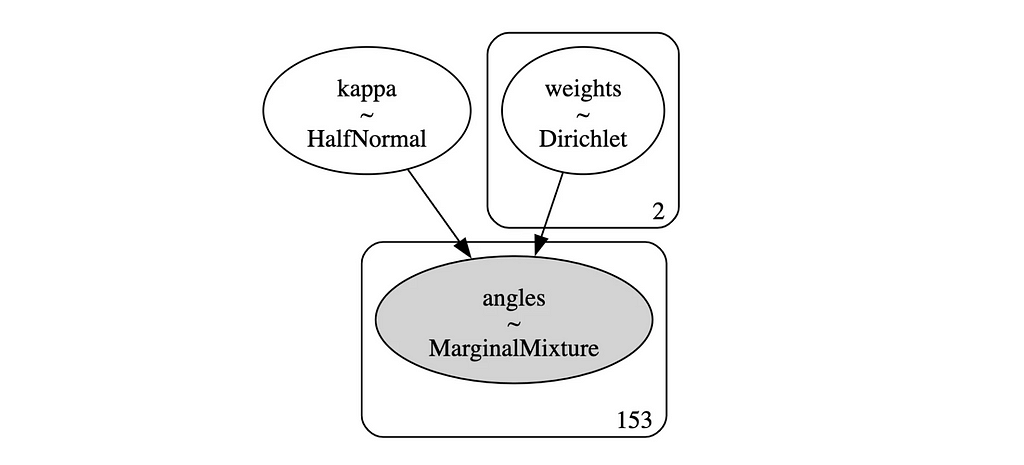

This gives us a nice simple model which we can also visualize:

# Model graph

pm.model_to_graphviz(model_uni)

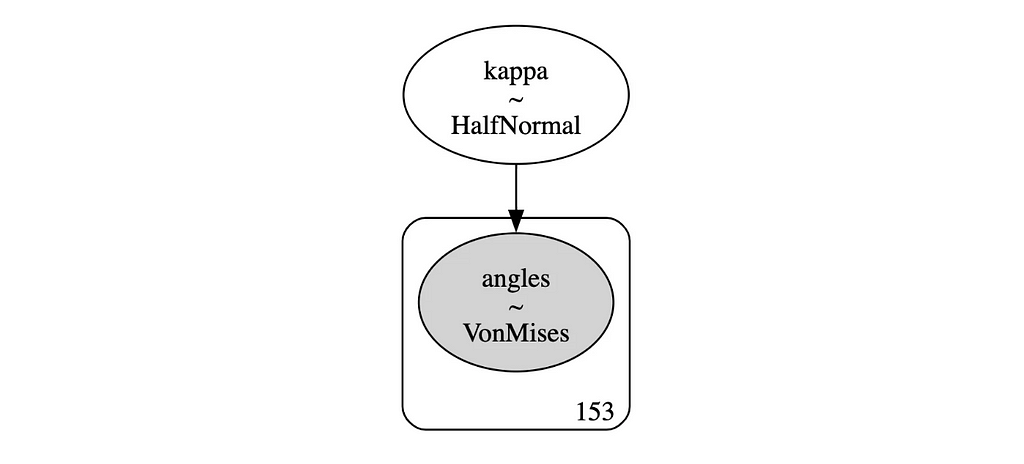

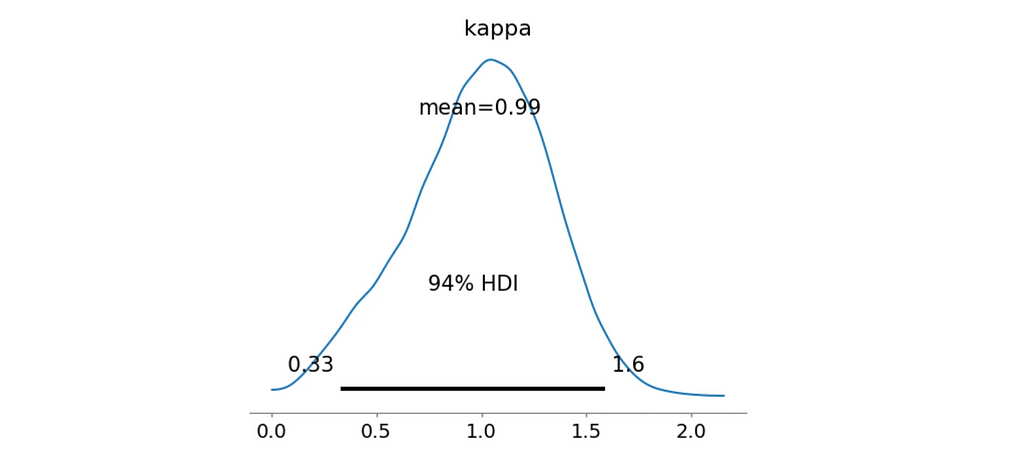

And here’s the posterior distribution for the concentration parameter κ:

az.plot_posterior(trace_uni, var_names=['kappa'])

plt.show()

All that’s left is to calculate the log-likelihood for the alternative model and the Bayes Factor.

# Posterior samples for kappa

kappa_samples = trace_uni.posterior.kappa.values.flatten()

# Log likelihood for each sample

log_likes = []

for k in kappa_samples:

# Von Mises log likelihood

log_like = vonmises.logpdf(data['radians'], k, loc=np.pi).sum()

log_likes.append(log_like)

# Log-mean-exp trick for numerical stability

log_likelihood_h1 = np.max(log_likes) +

np.log(np.mean(np.exp(log_likes - np.max(log_likes))))

BF = np.exp(log_likelihood_h1 - log_likelihood_h0)

print(f"Bayes Factor: {BF:.4f}")

print(f"Probability kappa > 0.5: {np.mean(kappa_samples > 0.5):.4f}")

>> Bayes Factor: 32.4645

>> Probability kappa > 0.5: 0.0649

Since we’re dividing the likelihood of the alternative model by the likelihood of the null model, the Bayes Factor indicates how much more likely the data is under the alternative hypothesis. In this case, we get 32.46, a very strong evidence, suggesting that the data is not uniformly distributed around the circle and there is a preference for the South direction.

However, we additionally calculate the probability that the concentration parameter kappa is greater than 0.5. This is a simple way to check if the distribution is significantly different from the uniform one. With the Unimodal South model, this probabiliy is only 0.0649, meaning that the distribution is still quite spread out.

Let’s try another model: Bimodal North-South Mixture.

Bimodal North-South Mixture

This time I’ll assume that the distribution is bimodal with peaks around 0° and 180°, just as we’ve seen in the compass rose.

To achieve this, I’ll need a mixture of two Von Mises distributions with different fixed mean directions and a shared concentration parameter.

Let’s define a few helper functions first:

# Type aliases

ArrayLike = Union[np.ndarray, pd.Series]

ResultDict = Dict[str, Union[float, InferenceData.InferenceData]]

def compute_mixture_vonmises_logpdf(

series: ArrayLike,

kappa: float,

weights: npt.NDArray[np.float64],

mus: List[float]

) -> float:

"""

Compute log PDF for a mixture of von Mises distributions

Parameters:

-----------

series: ArrayLike

Array of observed angles in radians

kappa: float

Concentration parameter

weights: npt.NDArray[np.float64],

Array of mixture weights

mus: List[float]

Array of means for each component

Returns:

--------

float: Sum of log probabilities for all data points

"""

mixture_pdf = np.zeros_like(series)

for w, mu in zip(weights, mus):

mixture_pdf += w * vonmises.pdf(series, kappa, loc=mu)

return np.log(np.maximum(mixture_pdf, 1e-300)).sum()

def compute_log_likelihoods(

trace: az.InferenceData,

series: ArrayLike,

mus: List[float]

) -> np.ndarray:

"""

Compute log likelihoods for each sample in the trace

Parameters:

-----------

trace: az.InferenceData

The trace from the PyMC3 model sampling.

series: ArrayLike

Array of observed angles in radians

"""

kappa_samples = trace.posterior.kappa.values.flatten()

weights_samples = trace.posterior.weights.values.reshape(-1, 2)

# Calculate log likelihood for each posterior sample

log_likes = []

for k, w in zip(kappa_samples, weights_samples):

log_like = compute_mixture_vonmises_logpdf(

series,

kappa=k,

weights=w,

mus=mus

)

log_likes.append(log_like)

# Calculate marginal likelihood using log-sum-exp trick

log_likelihood_h1 = np.max(log_likes) + np.log(np.mean(np.exp(log_likes - np.max(log_likes))))

return log_likelihood_h1

def posterior_report(

log_likelihood_h0: float,

log_likelihood_h1: float,

kappa_samples: ArrayLike,

kappa_threshold: float = 0.5

) -> str:

"""

Generate a report with Bayes Factor and probability kappa > threshold

Parameters:

-----------

log_likelihood_h0: float

Log likelihood for the null hypothesis

log_likelihood_h1: float

Log likelihood for the alternative hypothesis

kappa_samples: ArrayLike

Flattened posterior samples of the concentration parameter

kappa_threshold: float

Threshold for computing the probability that kappa > threshold

Returns:

--------

summary: str

A formatted string containing the summary statistics.

"""

BF = np.exp(log_likelihood_h1 - log_likelihood_h0)

summary = (

f"Bayes Factor: {BF:.4f}n"

f"Probability kappa > {kappa_threshold}: {np.mean(kappa_samples > kappa_threshold):.4f}"

)

return summary

And now back to the model:

mu1 = 0 # 0 degrees

mu2 = np.pi # 180 degrees

with pm.Model() as model_mixture_bimodal_NS:

# Priors for concentration parameters

kappa = pm.HalfNormal('kappa', sigma=10)

# Priors for component weights

weights = pm.Dirichlet('weights', a=np.ones(2))

# Define the von Mises components

vm1 = pm.VonMises.dist(mu=mu1, kappa=kappa)

vm2 = pm.VonMises.dist(mu=mu2, kappa=kappa)

# Mixture distribution

likelihood = pm.Mixture(

'angles',

w=weights,

comp_dists=[vm1, vm2],

observed=data['radians']

)

# Sample from the posterior

trace_mixture_bimodal_NS = pm.sample(

10000, tune=3000, chains=4, return_inferencedata=True, idata_kwargs={'log_likelihood': True})

# Get kappa samples

kappa_samples = trace_mixture_bimodal_NS.posterior.kappa.values.flatten()

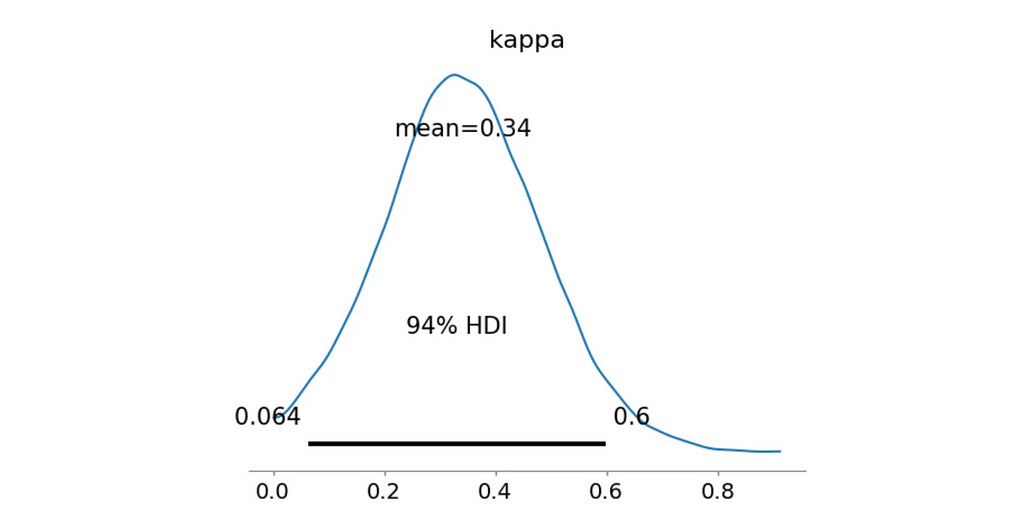

Once again, let’s visualize the model graph and the posterior distribution for the concentration parameter κ:

# Model graph

pm.model_to_graphviz(model_mixture_bimodal_NS)

# Posterior Analysis

az.plot_posterior(trace_mixture_bimodal_NS, var_names=['kappa'])

plt.show()

And finally, let’s calculate the Bayes Factor and the probability that the concentration parameter κ is greater than 0.5:

log_likelihood_h1 = compute_log_likelihoods(trace_mixture_bimodal_NS, data['radians'], [mu1, mu2])

print(posterior_report(log_likelihood_h0, log_likelihood_h1, kappa_samples))

>> Bayes Factor: 214.2333

>> Probability kappa > 0.5: 0.9110

Fantastic! Both our metrics indicate that this model is a much better fit for the data. The Bayes Factor suggests a decisive evidence and most of the posterior κ samples are greater than 0.5 with the mean value of 0.99 as we’ve seen on the distribution plot.

Let’s try a couple more models before wrapping up.

Bimodal West-South Mixture

This model once again assumes a bimodal distribution but this time with peaks around 270° and 180°, which were common directions in the compass rose.

mu1 = np.pi # 180 degrees

mu2 = 3 * np.pi / 2 # 270 degrees

with pm.Model() as model_mixture_bimodal_WS:

# Priors for concentration parameters

kappa = pm.HalfNormal('kappa', sigma=10)

# Priors for component weights

weights = pm.Dirichlet('weights', a=np.ones(2))

# Define the four von Mises components

vm1 = pm.VonMises.dist(mu=mu1, kappa=kappa)

vm2 = pm.VonMises.dist(mu=mu2, kappa=kappa)

# Mixture distribution

likelihood = pm.Mixture(

'angles',

w=weights,

comp_dists=[vm1, vm2],

observed=data['radians']

)

# Sample from the posterior

trace_mixture_bimodal_WS = pm.sample(

10000, tune=3000, chains=4, return_inferencedata=True, idata_kwargs={'log_likelihood': True})

# Get kappa samples

kappa_samples = trace_mixture_bimodal_WS.posterior.kappa.values.flatten()

# Posterior Analysis

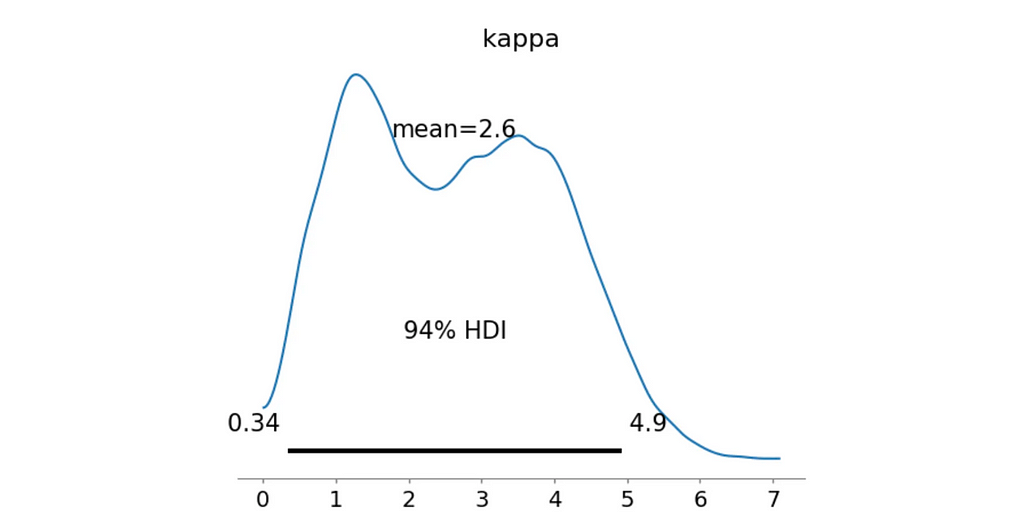

az.plot_posterior(trace_mixture_bimodal_WS, var_names=['kappa'])

plt.show()

log_likelihood_h1 = compute_log_likelihoods(trace_mixture_bimodal_WS, data['radians'], [mu1, mu2])

print(posterior_report(log_likelihood_h0, log_likelihood_h1, kappa_samples))

>> Bayes Factor: 20.2361

>> Probability kappa > 0.5: 0.1329

Nope, definitely not as good as the previous model. Next!

Quadrimodal Mixture

Final round. Maybe my dog really likes to align himself with the cardinal directions? Let’s try a quadrimodal distribution with peaks around 0°, 90°, 180°, and 270°.

mu1 = 0 # 0 degrees

mu2 = np.pi / 2 # 90 degrees

mu3 = np.pi # 180 degrees

mu4 = 3 * np.pi / 2 # 270 degrees

with pm.Model() as model_mixture_quad:

# Priors for concentration parameters

kappa = pm.HalfNormal('kappa', sigma=10)

# Priors for component weights

weights = pm.Dirichlet('weights', a=np.ones(4))

# Define the four von Mises components

vm1 = pm.VonMises.dist(mu=mu1, kappa=kappa)

vm2 = pm.VonMises.dist(mu=mu2, kappa=kappa)

vm3 = pm.VonMises.dist(mu=mu3, kappa=kappa)

vm4 = pm.VonMises.dist(mu=mu4, kappa=kappa)

# Mixture distribution

likelihood = pm.Mixture(

'angles',

w=weights,

comp_dists=[vm1, vm2, vm3, vm4],

observed=data['radians']

)

# Sample from the posterior

trace_mixture_quad = pm.sample(

10000, tune=3000, chains=4, return_inferencedata=True, idata_kwargs={'log_likelihood': True}

)

# Get kappa samples

kappa_samples = trace_mixture_quad.posterior.kappa.values.flatten()

# Posterior Analysis

az.plot_posterior(trace_mixture_quad, var_names=['kappa'])

plt.show()

log_likelihood_h1 = compute_log_likelihoods(trace_mixture_quad, data['radians'], [mu1, mu2, mu3, mu4])

print(posterior_report(log_likelihood_h0, log_likelihood_h1, kappa_samples))

>> Bayes Factor: 0.0000

>> Probability kappa > 0.5: 0.9644

Well… Not really. Although the probability that the concentration parameter κκ is greater than 0.5 is quite high, the Bayes Factor is 0.0.

The great thing about Bayes Factor is that it penalizes overly complex models effectively preventing overfitting.

Model Comparison

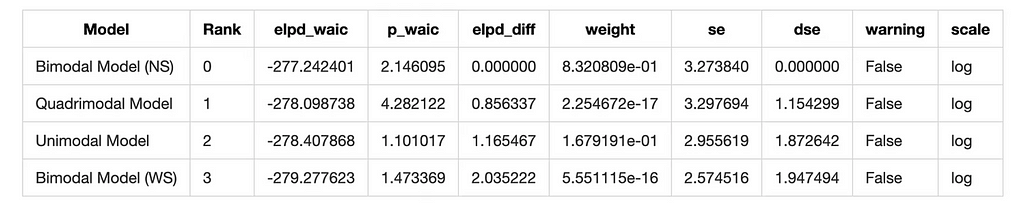

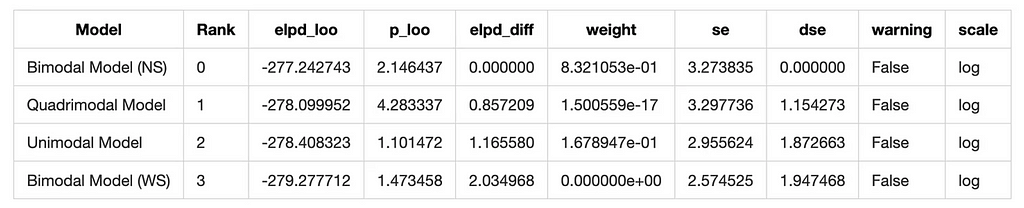

Let’s summarize the results of all models using information criteria. We’ll use the Widely Applicable Information Criterion (WAIC) and the Leave-One-Out Cross-Validation (LOO) to compare the models.

# Compute WAIC for each model

wail_uni = az.waic(trace_uni)

waic_quad = az.waic(trace_mixture_quad)

waic_bimodal_NS = az.waic(trace_mixture_bimodal_NS)

waic_bimodal_WS = az.waic(trace_mixture_bimodal_WS)

model_dict = {

'Quadrimodal Model': trace_mixture_quad,

'Bimodal Model (NS)': trace_mixture_bimodal_NS,

'Bimodal Model (WS)': trace_mixture_bimodal_WS,

'Unimodal Model': trace_uni

}

# Compare models using WAIC

waic_comparison = az.compare(model_dict, ic='waic')

waic_comparison

# Compare models using LOO

loo_comparison = az.compare(model_dict, ic='loo')

loo_comparison

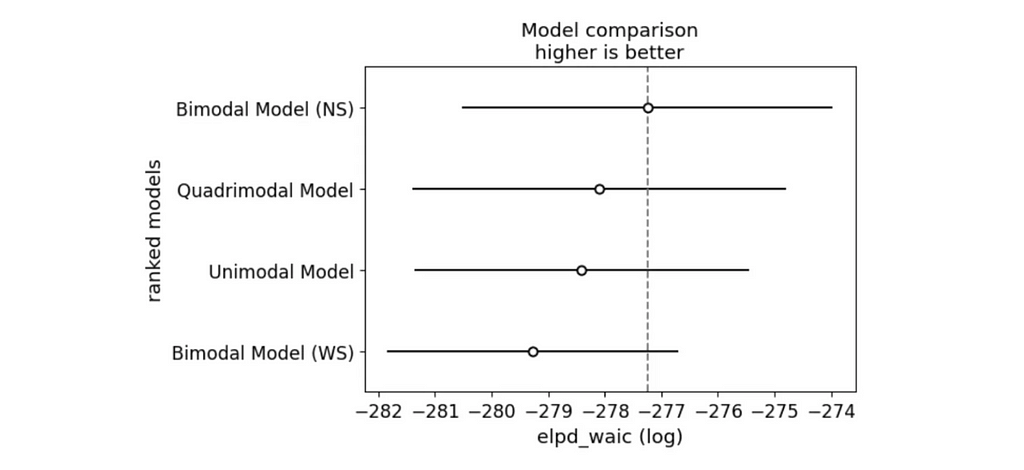

# Visualize the comparison

az.plot_compare(waic_comparison)

plt.show()

And we have a winner! The Bimodal North-South model is the best fit for the data according to both WAIC and LOO.

Conclusion

What a journey! What started a few months ago as a simple observation of my dog’s pooping habits turned into a full-blown Bayesian analysis.

In this article, I’ve shown how to model circular data, estimate the mean direction and concentration parameter, and update the posterior distribution with new observations. We’ve also seen how to use the Bayes Factor for hypothesis testing and compare models using information criteria.

And the results were super interesting! Auri does indeed have preferences and somehow manages to align himself across the North-South axis. If I ever get lost in the woods with my dog, I know what direction to follow. Just need a big enough sample size to be sure!

I hope you enjoyed this journey as much as I did. If you have any questions or suggestions, feel free to reach out. And if you’d like to support my work, consider buying me a coffee ❤️

My socials:

References:

- Dogs are sensitive to small variations of the Earth’s magnetic field, Vlastimil Hart et al., [link]

- Biostatistical Analysis, Fifth Edition, Jerrold H. Zar, [link]

- PyMC, Probabilistic Programming in Python, [link]

Dog Poop Compass: Bayesian Analysis of Canine Business was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.

Originally appeared here:

Dog Poop Compass: Bayesian Analysis of Canine Business

Go Here to Read this Fast! Dog Poop Compass: Bayesian Analysis of Canine Business