A simple approach using Monte Carlo simulations

I love games. Chess, Scrabble, you name it. However, one that I’m embarrassingly bad at is the very simple game of Connect Four. That, together with a desire to try my hand at the more practical side of data science, gave me the idea of building a simple AI capable of playing Connect Four at a high skill level.

The obvious problem here is, if I’m terrible at Connect Four, how on Earth can I build an AI capable of playing it? Enter Monte Carlo simulations. Monte Carlo simulations are a powerful tool in data science that use random sampling to estimate complex outcomes. This robust approach has a surprisingly wide array of applications, from numerical integration to financial modelling and, as we’ll explore, playing the game of Connect Four.

In this article I’ll cover a brief introduction to Monte Carlo simulations, then dive into the specifics of making that work for Connect Four, before putting it all together and sharing some code. And if you want, I’ll give you a chance to play the AI yourself and see how you fare.

Let’s go!

Introduction to Monte Carlo Methods

The idea of Monte Carlo sampling is pretty simple: if you have a problem that you cannot solve analytically, why not run random experiments and estimate a numerical answer instead? If that does not make sense just yet, don’t worry; we’ll look at an example in just a moment. But first, let’s get our history straight.

The backstory of Monte Carlo methods is quite interesting. The primary developer of the method was Stanislaw Ulam, a mathematician and physicist so prominent he worked on the Manhattan Project developing the atomic bomb. Important to our story, though, was Stanislaw’s uncle, whose unfortunate gambling habit led to the new calculation method being named after the famous Monte Carlo casino in Monaco.

Now, back to that example I promised you on just what it means to generate random samples.

A worked example

Suppose we want to find the area inside a circle of radius 1. The actual area of such a circle is of course our friend πr², and since r is 1, the area is simply π. But what if we don’t know π? How can we arrive at this answer by running random experiments, as the Monte Carlo method prescribes?

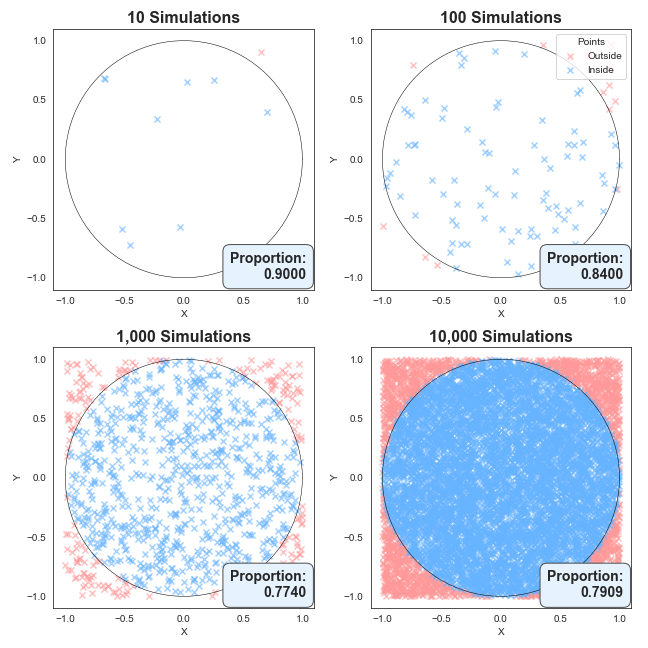

First, simulate random points in the region -1 < x < 1 and -1 < y < 1. Then, for each point, note whether it falls inside the circle (x² + y² ≤ 1) or outside it. Below I’ve created such simulations for 10, 100, 1000 and 10,000 random coordinates.

What you can see is that with only 10 points, the estimate of the circle’s area (or the proportion of the square that it takes up) is very rough, but as we add more and more points, the proportion of points lying in the circle becomes more consistent.

Now you’re probably asking, well, these charts are pretty and all, but what’s the real takeaway? A very fair question.

Notice that we end up with an estimate for the proportion of simulated points that land within the circle. That proportion approximates the ratio of the circle’s area to the square’s area. We know the area of the square is 2 x 2 = 4, so we can estimate π by multiplying this proportion by 4, because the area of the circle is simply π.

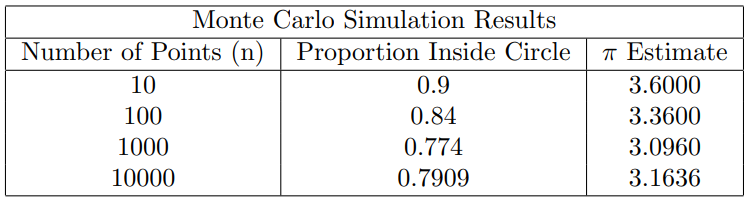

The table below summarises the results. Notice how the π estimate gets closer and closer to the true value as the number of simulations increases.
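To make the experiment concrete, here is a minimal sketch of it for the same four sample sizes. It uses Python's built-in `random` module so that it has no dependencies; the function name `estimate_pi` and the seed are my own choices for illustration, and the exact numbers you get will depend on the seed.

```python
import random

def estimate_pi(n: int, rng: random.Random) -> float:
    """Estimate pi from n random points in the square -1 < x, y < 1."""
    inside = 0
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        y = rng.uniform(-1.0, 1.0)
        if x * x + y * y <= 1.0:  # the point landed inside the circle
            inside += 1
    # Proportion inside the circle, times the area of the square (2 x 2 = 4)
    return 4.0 * inside / n

rng = random.Random(0)  # arbitrary seed, only so that runs are repeatable
for n in (10, 100, 1_000, 10_000):
    print(f"{n:>6} points -> pi is roughly {estimate_pi(n, rng):.4f}")
```

With only 10 points the estimate can only be a multiple of 0.4, which is why the small runs look so rough.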

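We can do even better with more simulations, of course. The following code snippet, which runs one hundred million samples, generally gives a number correct to three decimal places:

    import numpy as np

    n = 100_000_000  # number of random points (the array below needs about 1.6 GB)

    # Sample points uniformly in the unit square [0, 1) x [0, 1). This covers one
    # quarter of the circle, so the inside/total ratio is still π/4.
    points = np.random.rand(n, 2)

    # Count the points that fall inside the circle of radius 1
    inside_circle = np.sum(points[:, 0]**2 + points[:, 1]**2 <= 1)

    pi_estimate = (inside_circle / n) * 4
    print(pi_estimate)  # typically prints 3.141x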

The key takeaway here is that by generating random simulations (our coordinate pairs), we can achieve a surprisingly precise estimate for a known quantity! Our first example of the Monte Carlo method in action.

Translating the Approach to Games

This is great and all, but we don’t want to calculate π, we want to make an AI capable of playing Connect Four! Fortunately, the logic we just used to calculate π can also be applied to the game of Connect Four.

In the above example we did two things: first we generated random samples (coordinate pairs), and then we approximated a quantity (π).

Here we will do the same. First, we generate random samples as before, but this time each sample is a sequence of randomly chosen moves that simulates an entire game of Connect Four.

Then, secondly, we will again approximate a quantity, but the quantity we are chasing is the probability of winning with each move.

A brief refresher of the rules

Before we jump into creating simulations, let’s quickly review the rules of Connect Four. Players take turns dropping their coloured tiles into any unfilled column on a board 7 columns wide and 6 rows high. The game ends when a player lines up four tiles in a row horizontally, vertically or diagonally, or when the board is full and a draw is reached.
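For the programmers among you, the rules above can be sketched in a few lines of Python. This is a minimal illustration and not the Board class used later in the article: the grid-of-lists representation, the "R" and "Y" symbols and every function name here are assumptions of mine.

```python
ROWS, COLS = 6, 7  # standard board: 7 columns wide, 6 rows high
EMPTY = "."

def new_board():
    return [[EMPTY] * COLS for _ in range(ROWS)]  # row 0 is the top row

def is_valid_move(board, col):
    """A column is playable while its top cell is still empty."""
    return 0 <= col < COLS and board[0][col] == EMPTY

def drop_tile(board, col, symbol):
    """Drop a tile into a column; it lands in the lowest empty row."""
    for row in range(ROWS - 1, -1, -1):
        if board[row][col] == EMPTY:
            board[row][col] = symbol
            return row
    raise ValueError(f"column {col} is full")

def find_winner(board):
    """Return the symbol that has four in a row, or None if nobody does."""
    directions = ((0, 1), (1, 0), (1, 1), (1, -1))  # right, down, two diagonals
    for row in range(ROWS):
        for col in range(COLS):
            symbol = board[row][col]
            if symbol == EMPTY:
                continue
            for d_row, d_col in directions:
                end_row, end_col = row + 3 * d_row, col + 3 * d_col
                if not (0 <= end_row < ROWS and 0 <= end_col < COLS):
                    continue  # a line of four would run off the board
                if all(board[row + i * d_row][col + i * d_col] == symbol
                       for i in range(1, 4)):
                    return symbol
    return None

def is_draw(board):
    """The game is drawn when the board is full and nobody has won."""
    full = not any(is_valid_move(board, col) for col in range(COLS))
    return full and find_winner(board) is None

board = new_board()
# Red and Yellow alternate, Red first; Red ends up with four along the bottom row
for turn, col in enumerate((3, 3, 4, 4, 5, 5, 6)):
    drop_tile(board, col, "R" if turn % 2 == 0 else "Y")
print(find_winner(board))  # R
```

Scanning the whole board after every move is wasteful, but it keeps the sketch short and easy to check against the rules.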

The Monte Carlo method for Connect Four

Okay, now that we are on top of the theory, it’s time to put it into practice and teach an AI to play Connect Four. In order to find the right move in the game of Connect Four we:

- Randomly sample one of the possible legal moves (which column to drop a tile into).

- Simulate the entire game from this point, assuming both players make their moves completely at random.

- Track the outcome of each random game to calculate a win probability for each move.

- Finally, select the move with the highest win probability.

That sounds quite simple, and in reality it is!

To see this method in action, here is a Python implementation of this logic that I have written to play Connect Four. There’s a bit going on, but don’t stress if it doesn’t all make sense; the concept matters more than the implementation details!

That said, for those interested, the approach makes use of object-oriented programming, with a Player class capable of making moves on a Board class.

Here’s how it works in practice: we start with a list of possible valid moves, and we draw from them at random. For each move, we call the `_simulate_move` function, which will simulate an entire game from that point onward and return the winning symbol. If that symbol matches that of the AI player, we increment the wins. After running numerous simulations, we calculate the win rates for each move, and finally return the move corresponding to the highest win rate.

    import random

    # Method of the Player class
    def _get_best_move(self, board: Board, num_sims: int):
        # Get a list of all viable moves, with win and simulation counters for each
        win_counts = {column: 0 for column in range(board.width) if board.is_valid_move(column)}
        total_counts = {column: 0 for column in range(board.width) if board.is_valid_move(column)}
        valid_moves = list(win_counts.keys())

        for _ in range(num_sims):
            column = random.choice(valid_moves)  # Pick a move at random
            result = self._simulate_move(board, column)  # Simulate the game after making the random move
            total_counts[column] += 1
            if result == self.symbol:  # Check whether the AI player won
                win_counts[column] += 1

        # Win rate per move; a move that was never sampled gets a rate of 0
        win_rates = {
            column: win_counts[column] / total_counts[column] if total_counts[column] > 0 else 0
            for column in valid_moves
        }
        best_move = max(win_rates, key=win_rates.get)  # Find the move with the best win rate
        return best_move
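The `_simulate_move` function itself is not shown above, so here is a rough sketch of what a random playout like it could look like. This is not the actual implementation: it is a standalone function, the board is a plain list of columns (each a list of tiles, bottom first), and the names `simulate_move` and `wins` are made up for illustration. It also assumes the first move passed in is legal.

```python
import random

ROWS, COLS = 6, 7

def wins(columns, col, symbol):
    """Check whether the tile just dropped on top of `col` completes four in a row."""
    row = len(columns[col]) - 1

    def cell(c, r):
        in_bounds = 0 <= c < COLS and 0 <= r < len(columns[c])
        return columns[c][r] if in_bounds else None

    # Horizontal, vertical and the two diagonals through the new tile
    for d_col, d_row in ((1, 0), (0, 1), (1, 1), (1, -1)):
        count = 1
        for sign in (1, -1):  # walk both ways along the line
            c, r = col + sign * d_col, row + sign * d_row
            while cell(c, r) == symbol:
                count += 1
                c, r = c + sign * d_col, r + sign * d_row
        if count >= 4:
            return True
    return False

def simulate_move(columns, col, symbol, opponent, rng=random):
    """Play `col` for `symbol`, finish the game with random moves,
    and return the winning symbol (or None for a draw)."""
    columns = [list(c) for c in columns]  # copy so the real board is untouched
    player, other = symbol, opponent
    while True:
        columns[col].append(player)
        if wins(columns, col, player):
            return player
        legal = [c for c in range(COLS) if len(columns[c]) < ROWS]
        if not legal:
            return None  # board is full: a draw
        player, other = other, player  # switch sides
        col = rng.choice(legal)        # the next move is completely random

# Red has three in a row along the bottom; column 3 wins on the spot
position = [["R"], ["R"], ["R"], [], ["Y"], ["Y"], ["Y"]]
print(simulate_move(position, 3, "R", "Y"))  # R

# A weaker first move: the outcome now depends on the random playout
results = [simulate_move(position, 0, "R", "Y") for _ in range(200)]
print(results.count("R") / len(results))
```

Checking only the lines through the newest tile keeps each playout cheap, which matters when hundreds or thousands of games are simulated per turn.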

In summary, by simulating random moves and tracking the game from that point, this Monte Carlo approach helps the AI start to make much smarter moves than if it were just guessing.

Some Practical Examples

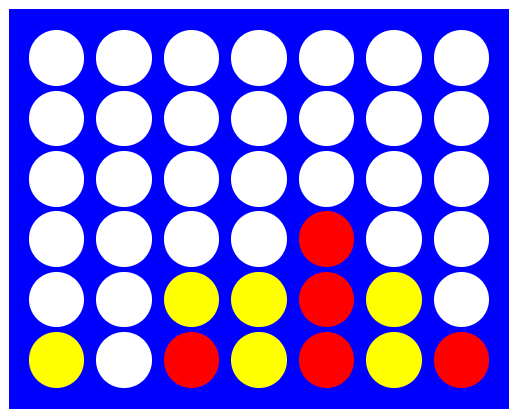

Okay, enough code! Let’s put the AI to the test and see how it performs in a couple of positions. Below we will go through two different positions and show the outcome of the above block of code. The first situation is quite simple, and the second a bit more nuanced.

It’s Red’s turn, and the obvious best move is to play in the 5th column. If we simulate 1000 random games from this position using the method above, the AI player produces the following win rates. Placing a tile in column 5 results in a win every time (as it should!), and so it is chosen.

Fantastic! Our AI can identify a winning move when one is available. A simple scenario, yes, but to be honest I’ve missed plenty of wins in the game before…

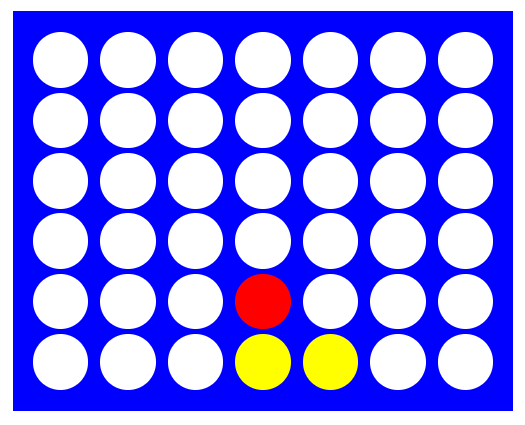

Now, let’s look at another position. This one is a little trickier. Have you got an idea of what Red should play to prevent Yellow from gaining a winning advantage?

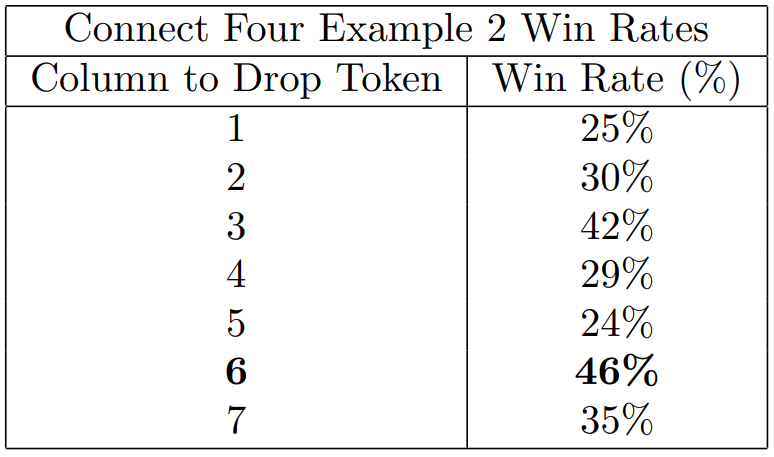

The key here is to prevent Yellow from creating an open-ended three in a row, which would lead to a win. Red needs to block this by playing in either the 3rd or 6th column! Simulating 1000 games from this position, we get the win rates below. Note that the AI correctly identifies the two blocking moves (columns 3 and 6) as having the highest win rates. Furthermore, it estimates that column 6 has the highest chance of winning and selects that.

How does it fare in practice?

See for yourself! You can challenge the AI here: https://fourinarowgame.online/ . The difficulty is based on adjusting the number of simulations. Easy simulates 50 games, moderate simulates 500 games, and hard simulates 1500 games. Personally I can beat the easy mode pretty consistently, but that’s about it!
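Why would more simulations make the AI stronger? One way to see it is through the noise in each win-rate estimate. The sketch below uses the standard error of a proportion, sqrt(p(1 - p) / n), under some simplifying assumptions of mine: all 7 columns are playable, the simulations are split evenly between them, and the true win rate is around 50% (the noisiest case). The function name is made up for illustration.

```python
import math

def win_rate_standard_error(num_sims: int, num_moves: int = 7, p: float = 0.5) -> float:
    """Rough standard error of a single move's estimated win rate."""
    sims_per_move = num_sims / num_moves  # moves are drawn uniformly at random
    return math.sqrt(p * (1 - p) / sims_per_move)

for label, num_sims in (("easy", 50), ("moderate", 500), ("hard", 1500)):
    error = win_rate_standard_error(num_sims)
    print(f"{label:>8}: win rates are uncertain by about ±{error:.2f}")
```

Under these assumptions the uncertainty is about 0.19 on easy, 0.06 on moderate and 0.03 on hard, so on easy mode two moves with genuinely different win rates can easily be ranked the wrong way round.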

Conclusion

Okay, let’s bring it all together. In writing this article I really wanted to do two things. First, I wanted to demonstrate the power of the Monte Carlo method on a straightforward calculation like estimating π by simulating random coordinates.

Next, and more interestingly, I wanted to show the strength of the same approach when applied to board games. What’s fascinating is that despite knowing nothing of Connect Four strategy, it’s entirely possible to just simulate random games and end up with an AI opponent capable of playing at quite a high level!

As always, thanks for reading and see you next time.

Beating Connect Four with AI was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.
