A surprising experiment to show that the devil is in the details

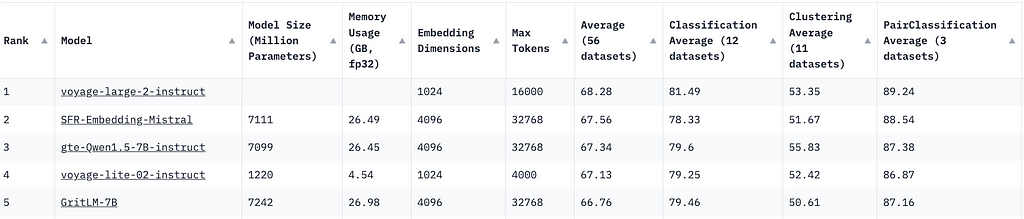

Embeddings Leaderboard

With the growing number of embedding models available, choosing the right one for your machine learning applications can be challenging. Fortunately, the MTEB leaderboard provides a comprehensive range of ranking metrics for various natural language processing tasks.

When you visit the site, you’ll notice that the top five embedding models are Generative Pre-trained Transformers (GPTs). This might lead you to think that GPT models are the best for embeddings. But is this really true? Let’s conduct an experiment to find out.

GPT Embeddings

Embeddings are tensor representation of texts, that converts text token IDs and projects them into a tensor space.

By inputting text into a neural network model and performing a forward pass, you can obtain embedding vectors. However, the actual process is a bit more complex. Let’s break it down step by step:

- Convert the text into token IDs

- Pass the token IDs into a neural network

- Return the outputs of the neural network

In the first step, I am going to use a tokenizer to achieve it. model_inputs is the tensor representation of the text content, “some questions” .

from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained(

"mistralai/Mistral-7B-Instruct-v0.1"

)

messages = [

{

"role": "user",

"content": "some questions",

},

]

encodeds = tokenizer.apply_chat_template(messages, return_tensors="pt")

model_inputs = encodeds.to("cuda")

The second step is straightforward, passing the model_inputs into a neural network. The logits of generated tokens can be accessed via .logits .

import torch

with torch.no_grad():

return model(model_inputs).logits

The third step is a bit tricky. GPT models are decoder-only, and their token generation is autoregressive. In simple terms, the last token of a completed sentence has seen all the preceding tokens in the sentence. Therefore, the output of the last token contains all the affinity scores (attentions) from the preceding tokens.

Bingo! You are most interested in the last token because of the attention mechanism in the transformers.

The output dimension of the GPTs implemented in Hugging Face is (batch size, input token size, number of vocabulary). To get the last token output of all the batches, I can perform a tensor slice.

import torch

with torch.no_grad():

return model(model_inputs).logits[:, -1, :]

Quality of these GPT Embeddings

To measure the quality of these GPT embeddings, you can use cosine similarity. The higher the cosine similarity, the closer the semantic meaning of the sentences.

import torch

def compute_cosine_similarity(input1, input2):

cos = torch.nn.CosineSimilarity(dim=1, eps=1e-6)

return cos(input1, input2)

Let’s create some util functions that allows us to loop through list of question and answer pairs and see the result. Mistral 7b v0.1 instruct , one of the great open-sourced models, is used for this experiment.

from transformers import AutoTokenizer, AutoModelForCausalLM

from torch.distributions import Categorical

import torch

from termcolor import colored

model = AutoModelForCausalLM.from_pretrained(

"mistralai/Mistral-7B-Instruct-v0.1"

)

tokenizer = AutoTokenizer.from_pretrained(

"mistralai/Mistral-7B-Instruct-v0.1"

)

def generate_last_token_embeddings(question, max_new_tokens=30):

messages = [

{

"role": "user",

"content": question,

},

]

encodeds = tokenizer.apply_chat_template(messages, return_tensors="pt")

model_inputs = encodeds.to("cuda")

with torch.no_grad():

return model(model_inputs).logits[:, -1, :]

def get_similarities(q_batch, a_batch):

for q in q_batch:

for a in a_batch:

q_emb, a_emd = generate_last_token_embeddings(q), generate_last_token_embeddings(a),

similarity = compute_cosine_similarity(q_emb, a_emd)

print(colored(f"question: {q} and ans: {a}", "green"))

print(colored(f"result: {similarity}", "blue"))

q = ["Where is the headquarter of OpenAI?",

"What is GPU?"]

a = ["OpenAI is based at San Francisco.",

"A graphics processing unit (GPU) is an electronic circuit that can perform mathematical calculations quickly",

]

Results and Observations

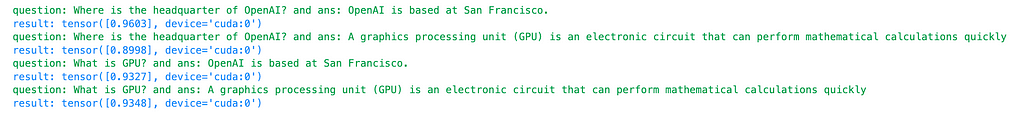

For the first question and answer pair:

- Question: “What is the headquarter of OpenAI?”

- Answer: “OpenAI is based at San Francisco.”

- Cosine Similarity: 0.96

For the second question and answer pair:

- Question: “What is GPU?”

- Answer: “A graphics processing unit (GPU) is an electronic circuit that can perform mathematical calculations quickly.”

- Cosine Similarity: 0.94

For an irrelevant pair:

- Question: “Where is the headquarter of OpenAI?”

- Answer: “A graphics processing unit (GPU) is an electronic circuit that can perform mathematical calculations quickly.”

- Cosine Similarity: 0.90

For the worst pair:

- Question: “What is GPU?”

- Answer: “OpenAI is based at San Francisco.”

- Cosine Similarity: 0.93

These results suggest that using GPT models, in this case, the mistral 7b instruct v0.1, as embedding models may not yield great results in terms of distinguishing between relevant and irrelevant pairs. But why are GPT models still among the top 5 embedding models?

Contrastive Loss Comes to the Rescue

tokenizer = AutoTokenizer.from_pretrained("intfloat/e5-mistral-7b-instruct")

model = AutoModelForCausalLM.from_pretrained(

"intfloat/e5-mistral-7b-instruct"

)

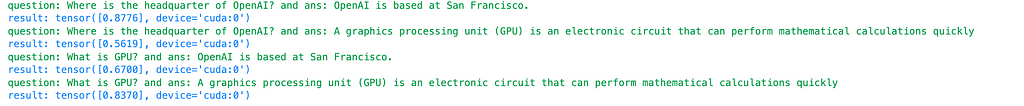

Repeating the same evaluation procedure with a different model, e5-mistral-7b-instruct, which is one of the top open-sourced models from the MTEB leaderboard and fine-tuned from mistral 7b instruct, I discover that the cosine similarity for the relevant question and pairs are 0.88 and 0.84 for OpenAI and GPU questions, respectively. For the irrelevant question and answer pairs, the similarity drops to 0.56 and 0.67. This findings suggests e5-mistral-7b-instruct is a much-improved model for embeddings. What makes such an improvement?

Delving into the paper behind e5-mistral-7b-instruct, the key is the use of contrastive loss to further fine tune the mistral model.

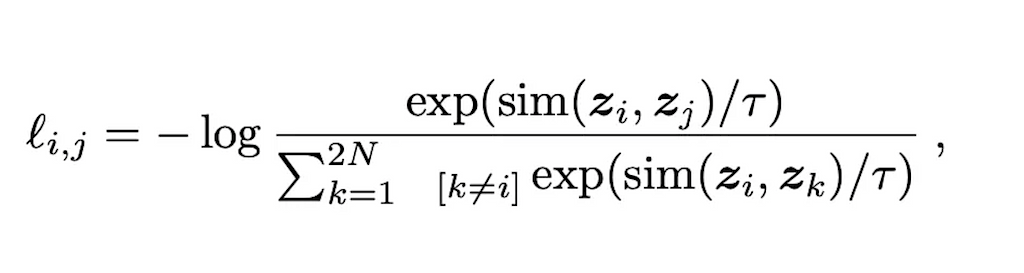

Unlike GPTs that are trained or further fine-tuned using cross-entropy loss of predicted tokens and labeled tokens, contrastive loss aims to maximize the distance between negative pairs and minimize the distance between the positive pairs.

This blog post covers this concept in greater details. The sim function calculates the cosine distance between two vectors. For contrastive loss, the denominators represent the cosine distance between positive examples and negative examples. The rationale behind contrastive loss is that we want similar vectors to be as close to 1 as possible, since log(1) = 0 represents the optimal loss.

Conclusion

In this post, I have highlighted a common pitfall of using GPTs as embedding models without fine-tuning. My evaluation suggests that fine-tuning GPTs with contrastive loss, the embeddings can be more meaningful and discriminative. By understanding the strengths and limitations of GPT models, and leveraging customized loss like contrastive loss, you can make more informed decisions when selecting and utilizing embedding models for your machine learning projects. I hope this post helps you choose GPTs models wisely for your applications and look forward to hearing your feedback! 🙂

Are GPTs Good Embedding Models was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.

Originally appeared here:

Are GPTs Good Embedding Models