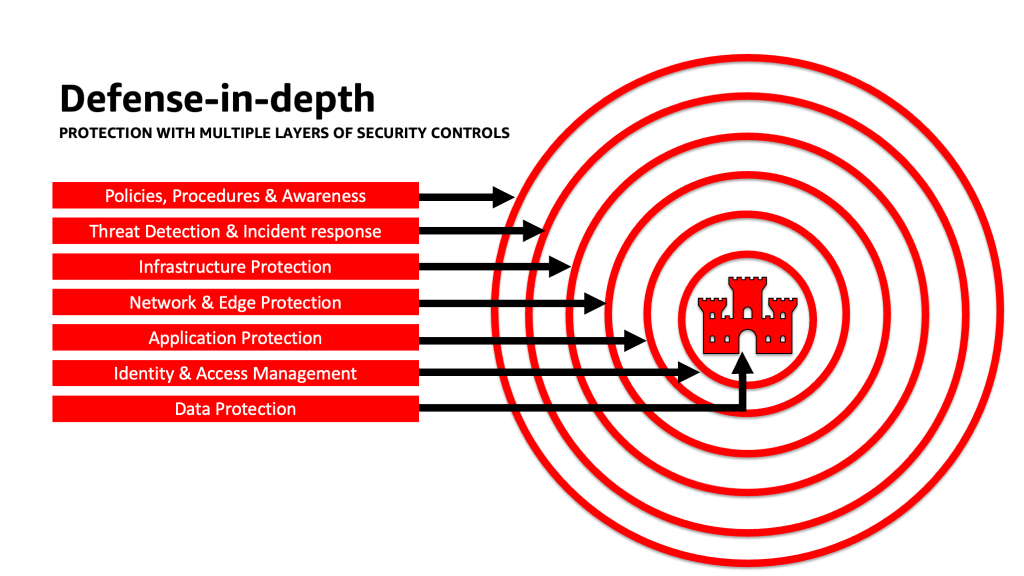

This post provides three guided steps for architecting risk management strategies while developing generative AI applications with large language models (LLMs). We first examine the vulnerabilities, threats, and risks that arise from implementing, deploying, and using LLM solutions, and provide guidance on how to start innovating with security in mind. We then discuss why building on a secure foundation is essential for generative AI. Lastly, we tie these together with an example LLM workload to describe an approach to architecting defense-in-depth security across trust boundaries.

Originally appeared here: Architect defense-in-depth security for generative AI applications using the OWASP Top 10 for LLMs