A victim of childhood sexual abuse is suing Apple over its 2022 decision to drop a previously announced plan to scan images stored in iCloud for child sexual abuse material.

Apple has retained nudity detection in images, but dropped some CSAM protection features in 2022.

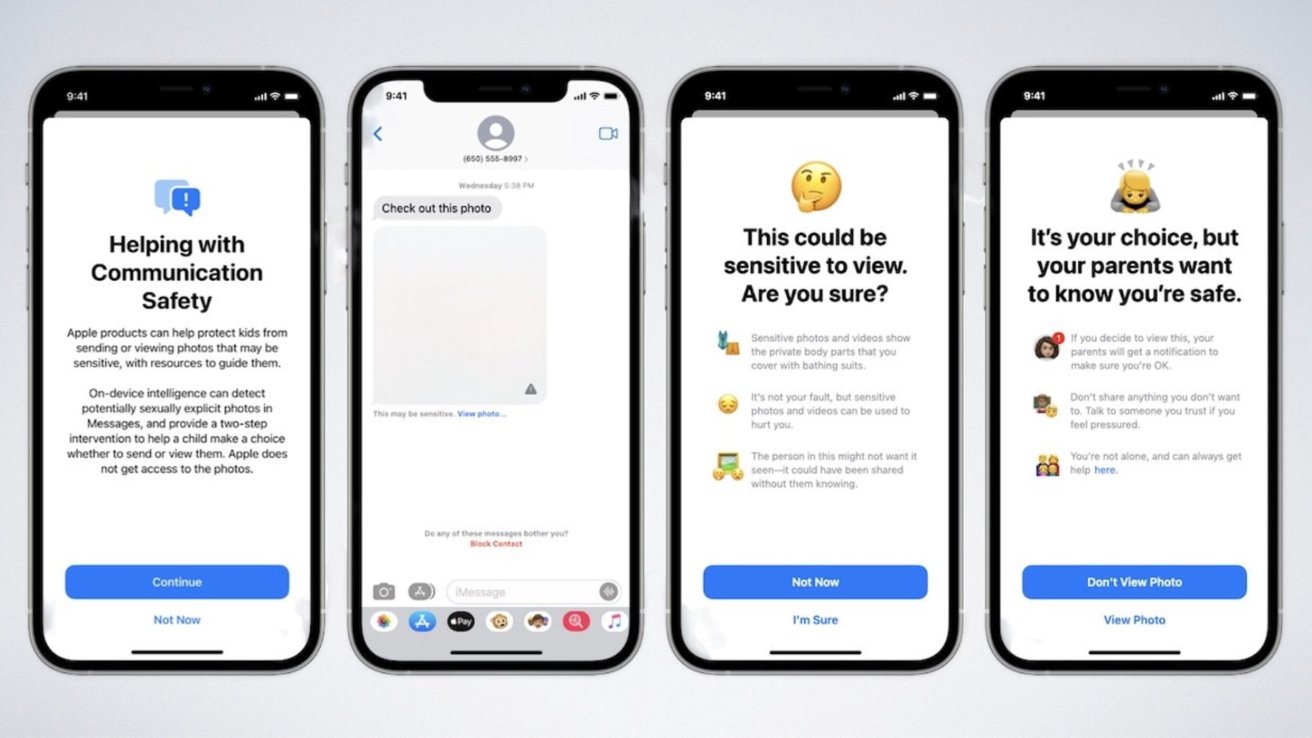

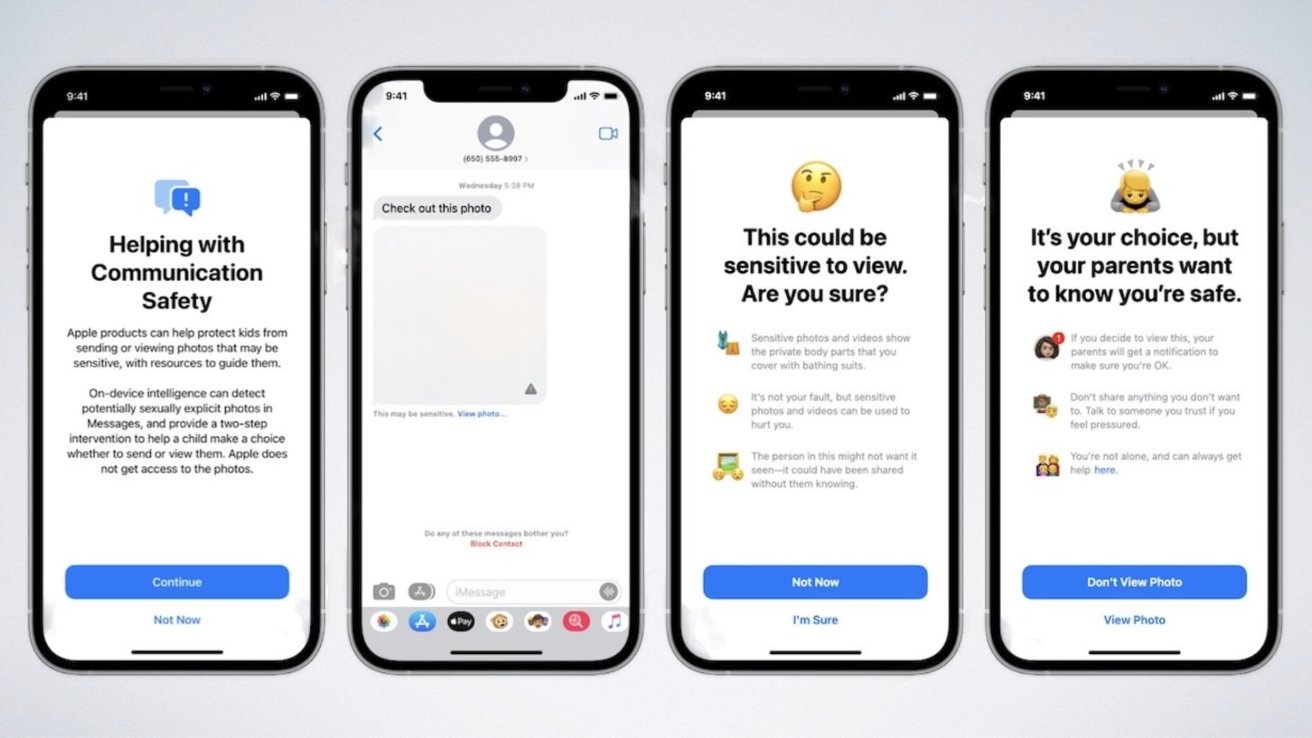

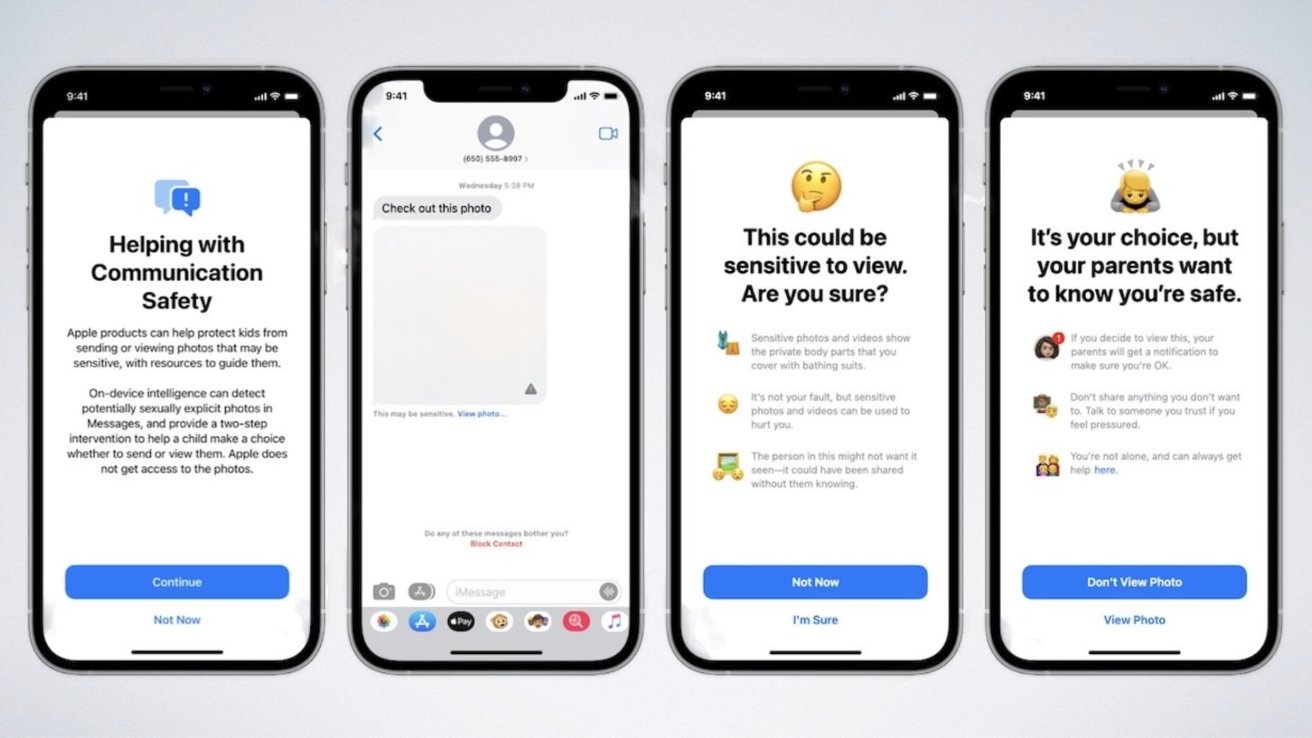

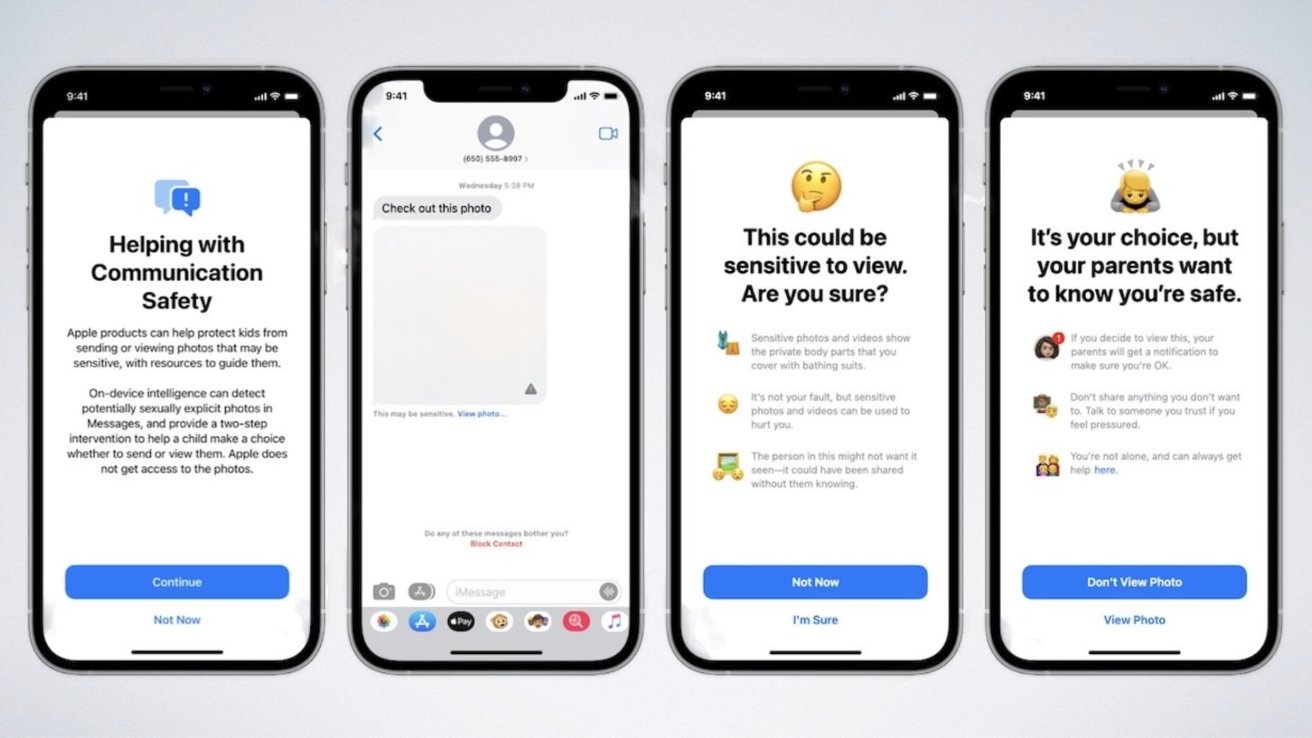

Apple originally introduced a plan in late 2021 to protect users from child sexual abuse material (CSAM) by scanning uploaded images on-device using a hashing system. It would also warn users before sending or receiving photos with algorithmically detected nudity.

The nudity-detection feature, called Communication Safety, is still in place today. However, Apple dropped its plan for CSAM detection after backlash from privacy experts, child safety groups, and governments.
