In large language model (LLM) training, effective orchestration and compute resource management pose a significant challenge. Automating resource provisioning, scaling, and workflow management is vital for optimizing resource usage and streamlining complex workflows, which in turn makes deep learning training more efficient. Simplified orchestration lets researchers and practitioners focus more on model experimentation, hyperparameter tuning, […]

Originally appeared here:

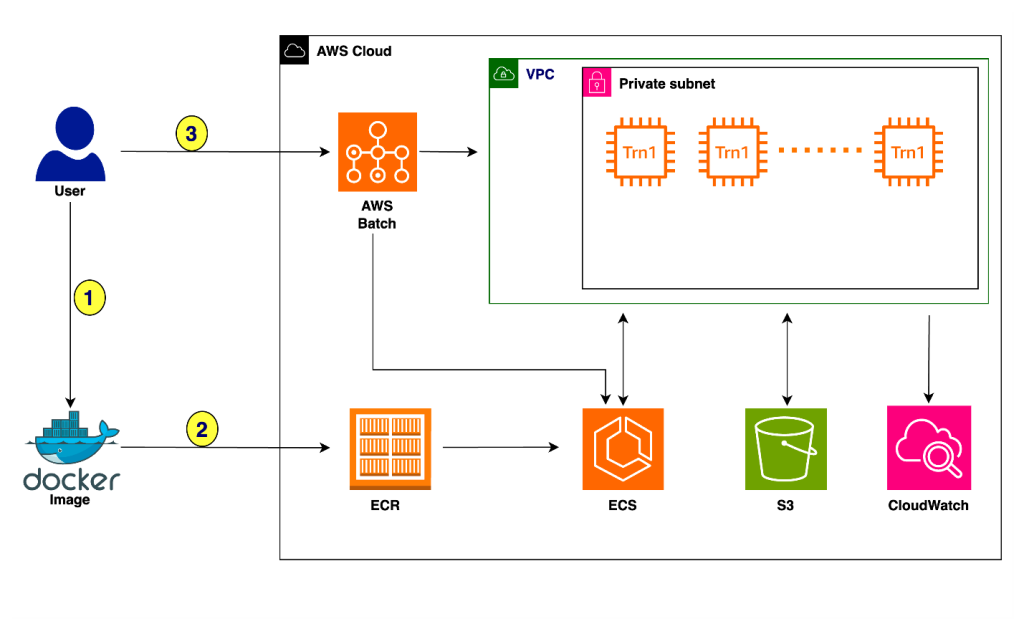

Accelerate deep learning training and simplify orchestration with AWS Trainium and AWS Batch