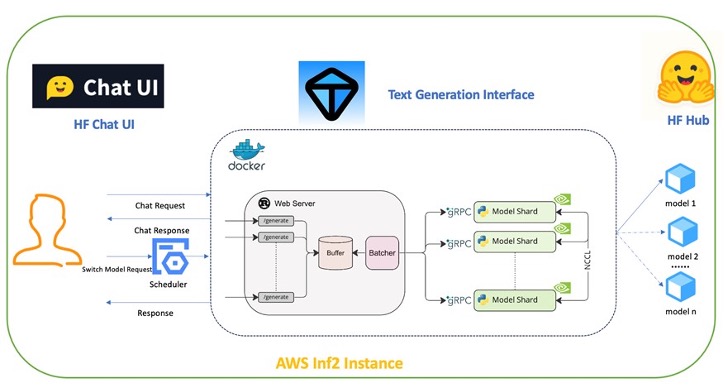

In this post, we show how to use an Amazon EC2 Inf2 instance to cost-effectively deploy multiple industry-leading LLMs on AWS Inferentia2, a purpose-built AWS AI chip. This lets customers test the models quickly and expose an API that serves both performance benchmarking and downstream application calls.

Originally appeared here:

Brilliant words, brilliant writing: Using AWS AI chips to quickly deploy Meta Llama 3-powered applications