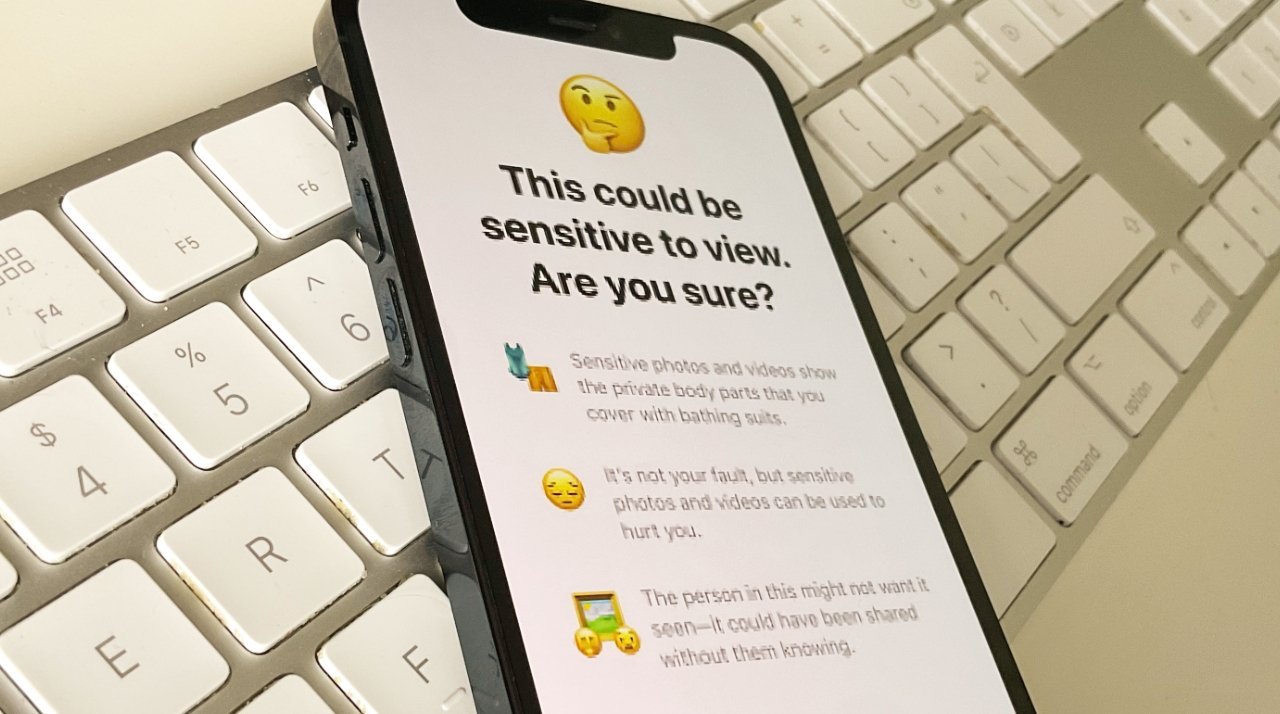

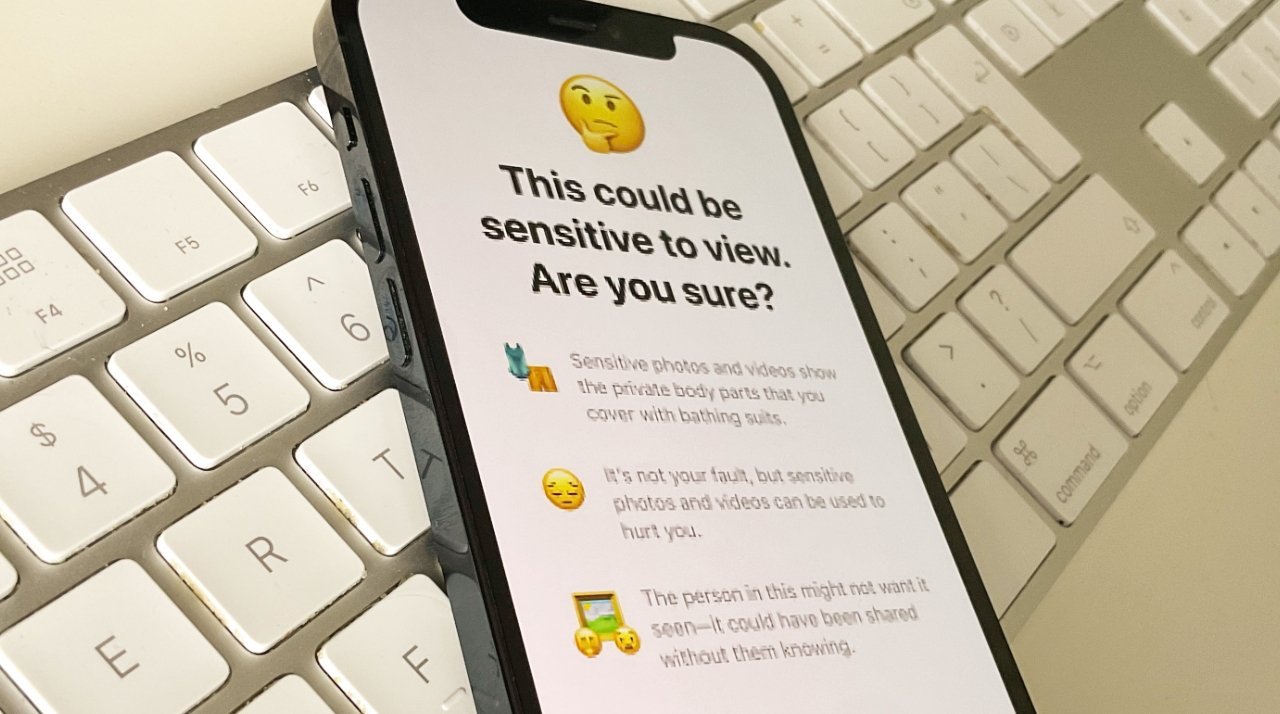

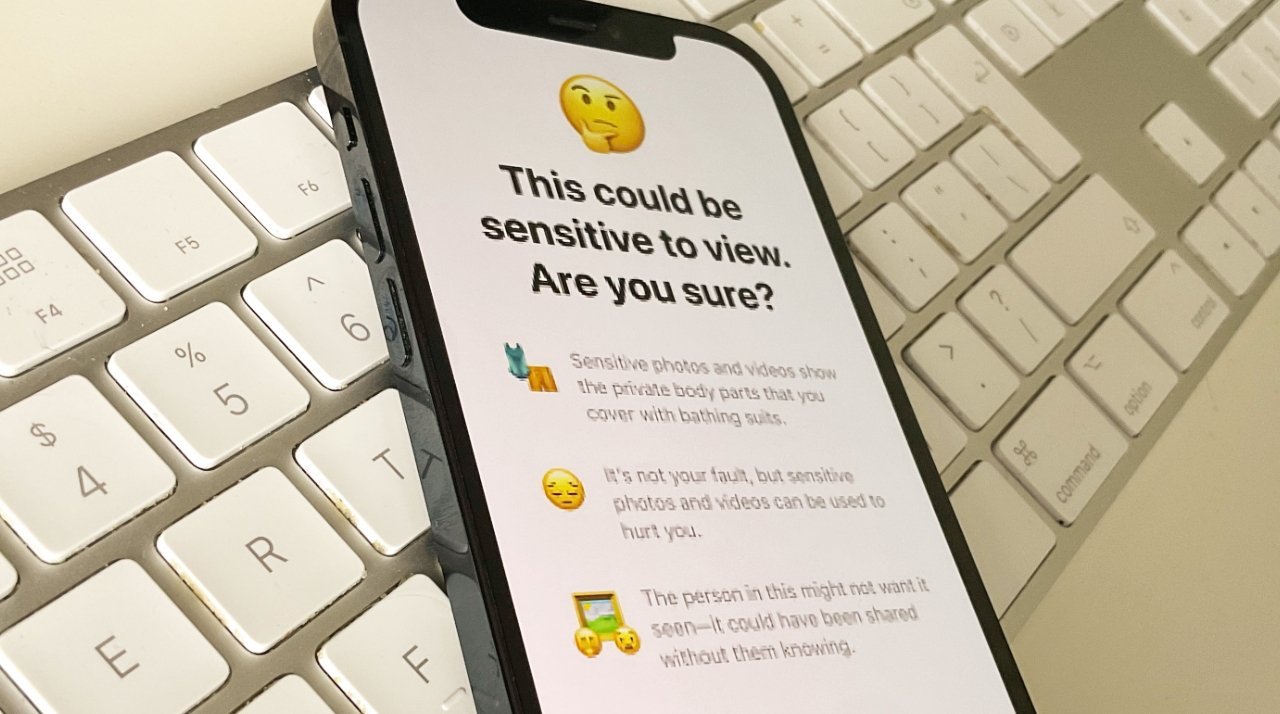

Apple cancelled its major CSAM proposals but introduced features such as automatic blocking of nudity sent to children

In 2022, Apple abandoned its plans for Child Sexual Abuse Material (CSAM) detection, following allegations that the system would ultimately be used for surveillance of all users. The company switched to a set of features it calls Communication Safety, which blurs nude photos sent to children.

According to The Guardian newspaper, the UK’s National Society for the Prevention of Cruelty to Children (NSPCC) says Apple is vastly undercounting incidents of CSAM in services such as iCloud, FaceTime and iMessage. All US technology firms are required to report detected cases of CSAM to the National Center for Missing & Exploited Children (NCMEC), and in 2023, Apple made 267 reports.
