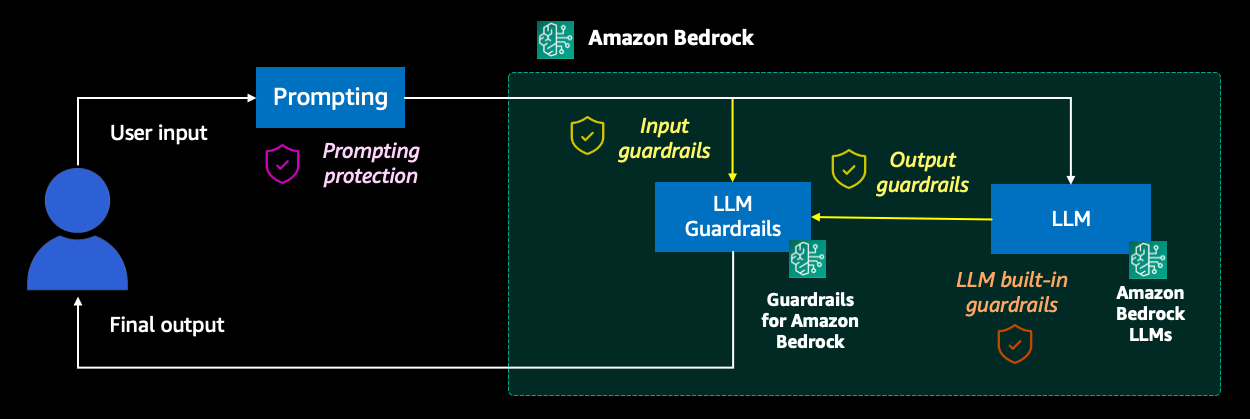

In this post, we present an end-to-end solution for securing a virtual travel agent powered by generative AI. We provide an example and its accompanying code to demonstrate how to implement prompt engineering techniques, content moderation, and guardrails, relying on Guardrails for Amazon Bedrock to make sure the assistant operates within predefined boundaries. We also cover monitoring strategies that track when these safeguards are activated, so that potential issues can be identified and mitigated proactively.

Originally appeared here: Safeguard a generative AI travel agent with prompt engineering and Guardrails for Amazon Bedrock