Originally appeared here:

AI reveals what the next crypto to pump after SOL and DOGE will be

Originally appeared here:

Bitwise proposed Bitcoin-Ethereum tied ETF to SEC

The crypto world is buzzing, and all eyes are on Lightchain Protocol AI, the newest Layer 1 blockchain that’s capturing massive attention with its explosive LCAI token ICO. It’s been a while since the market has seen a truly innovative Layer 1 platform, and Lightchain Protocol AI is blowing up with a presale that has […]

The post Lightchain Protocol AI’s ICO Is Blowing Up The Hottest New Layer 1 Presale in Years appeared first on CryptoSlate.

Originally appeared here:

Lightchain Protocol AI’s ICO Is Blowing Up The Hottest New Layer 1 Presale in Years

AVAX’s breakout from a long-term downtrend underlined potential for a rally beyond the $45 resistance zone

Active addresses surged by 28.56%, reinforcing bullish network activity trends

For

The post AVAX’s next breakout to take it to $65? Here’s why that’s possible! appeared first on AMBCrypto.

ENS has a strongly bullish outlook for the coming weeks.

On-chain metrics signaled increased market activity and demand.

Ethereum Name Service [ENS] has flipped its long-term bearish trend a

The post Ethereum Name Service: Why $20 is critical for ENS’ next rally appeared first on AMBCrypto.

Base reinforced its lead as the top Ethereum layer 2 with new TVL ATH.

Daily transaction count soars to new highs as utility continues to fuel growth.

The Base network’s relentless push to

The post Base secures top Layer 2 spot, crosses THESE key milestones appeared first on AMBCrypto.

Originally appeared here:

Base secures top Layer 2 spot, crosses THESE key milestones

VET’s breakout from the descending channel faced a $0.037 retest.

Technical indicators and bearish sentiment signaled consolidation before any potential recovery.

VeChain [VET] has experie

The post VeChain [VET] price prediction: Will $0.037 support spark recovery? appeared first on AMBCrypto.

Originally appeared here:

VeChain [VET] price prediction: Will $0.037 support spark recovery?

Leading web3 launchpad, overHere is working with internet personality Hailey Welch to launch her Hawk Tuah ($HAWK) meme coin. On an episode of her talk show Talk Tuah with billionaire Mark Cuban, Hailey Welch announced that she was officially launching the $HAWK meme coin. Hailey is partnering with OverHere to launch a unique meme coin […]

Originally appeared here:

Web3 Platform and Launchpad overHere Launches Hawk Tuah ($HAWK) Memecoin with Hailey Welch

There are periodic proclamations of the coming neuromorphic computing revolution, which uses inspiration from the brain to rethink neural networks and the hardware they run on. While challenges remain in the field, there have been solid successes and there continues to be steady progress in spiking neural network algorithms and neuromorphic hardware. This progress is paving the way for disruption in at least some sectors of artificial intelligence; it will reduce the energy consumption per computation at inference and allow artificial intelligence to be pushed further out to the edge. In this article, I will cover some neuromorphic computing and engineering basics, training, the advantages of neuromorphic systems, and the remaining challenges.

The classical use case of neuromorphic systems is for edge devices that need to perform the computation locally and are energy-limited, for example, battery-powered devices. However, one of the recent interests in using neuromorphic systems is to reduce energy usage at data centers, such as the energy needed by large language models (LLMs). For example, OpenAI signed a letter of intent to purchase $51 million of neuromorphic chips from Rain AI in December 2023. This makes sense since OpenAI spends a lot on inference, with one estimate of around $4 billion on running inference in 2024. It also appears that both Intel’s Loihi 2 and IBM’s NorthPole (successor to TrueNorth) neuromorphic systems are designed for use in servers.

The promises of neuromorphic computing can broadly be divided into 1) pragmatic, near-term benefits that have already been demonstrated and 2) more aspirational, wacky neuroscientist fever-dream ideas of how spiking dynamics might endow neural networks with something closer to real intelligence. Of course, it’s group 2 that really excites me, but I’m going to focus on group 1 for this post. And there is no more exciting way to start than to dive into terminology.

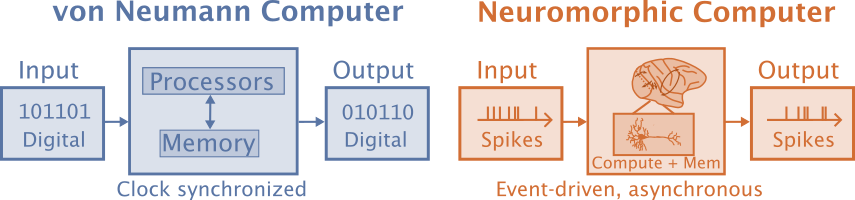

Neuromorphic computation is often defined as computation that is brain-inspired, but that definition leaves a lot to the imagination. Neural networks are more neuromorphic than classical computation, but these days neuromorphic computation is specifically interested in using event-based spiking neural networks (SNNs) for their energy efficiency. Even though SNNs are a type of artificial neural network, the term “artificial neural networks” (ANNs) is reserved for the more standard non-spiking artificial neural networks in the neuromorphic literature. Schuman and colleagues (2022) define neuromorphic computers as non-von Neumann computers where both processing and memory are collocated in artificial neurons and synapses, as opposed to von Neumann computers that separate processing and memory.

“Neuromorphic engineering” means designing the hardware, while “neuromorphic computation” is focused on what is being simulated rather than what it is being simulated on. The two are tightly intertwined, since the computation depends on the properties of the hardware, and what is implemented in hardware depends on what is empirically found to work best.

Another related term is NeuroAI, whose goal is to use AI to gain a mechanistic understanding of the brain and which is more interested in biological realism. Neuromorphic computation is interested in neuroscience as a means to an end. It views the brain as a source of ideas that can be used to achieve objectives such as energy efficiency and low latency in neural architectures. A decent amount of NeuroAI research relies on spike averages rather than spiking neural networks, which allows closer comparison with the majority of modern ANNs, which are applied to discrete tasks.

Neuromorphic systems are event-based, which is a paradigm shift from how modern ANN systems work. Even real-time ANN systems typically process one frame at a time, with activity synchronously propagated from one layer to the next. This means that in ANNs, neurons that carry no information require the same processing as neurons that carry critical information. Event-driven is a different paradigm that often starts at the sensor and applies the most work where information needs to be processed. ANNs rely on matrix operations that take the same amount of time and energy regardless of the values in the matrices. Neuromorphic systems use SNNs where the amount of work depends on the number of spikes.
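To make the contrast concrete, here is a minimal sketch that counts operations for a dense layer and for an event-driven layer. The function names are hypothetical and the counting is idealized: it ignores memory traffic, routing, and everything else that real hardware spends energy on.

```python
def dense_layer_ops(n_inputs, n_outputs):
    """Multiply-accumulates in a dense layer; independent of the input values."""
    return n_inputs * n_outputs


def event_driven_ops(spikes, n_outputs):
    """Accumulates in an event-driven layer: one per (input spike, output neuron)
    pair, so silent inputs cost nothing in this idealized count."""
    return sum(spikes) * n_outputs


def event_driven_forward(spikes, weights):
    """Add up weights only for inputs that spiked.

    weights[i][j] is the synapse from input i to output j.
    """
    n_outputs = len(weights[0])
    potentials = [0.0] * n_outputs
    for i, spiked in enumerate(spikes):
        if not spiked:
            continue  # no event, no work
        for j in range(n_outputs):
            potentials[j] += weights[i][j]
    return potentials


if __name__ == "__main__":
    n_in, n_out = 1000, 100
    quiet = [1 if i % 100 == 0 else 0 for i in range(n_in)]  # 10 spikes
    busy = [1 if i % 2 == 0 else 0 for i in range(n_in)]     # 500 spikes
    print(dense_layer_ops(n_in, n_out))    # 100000, whatever the input is
    print(event_driven_ops(quiet, n_out))  # 1000
    print(event_driven_ops(busy, n_out))   # 50000
```

The dense count never changes, while the event-driven count tracks how much is actually happening in the input.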

A traditional deployed ANN would often be connected to a camera that synchronously records a frame in a single exposure. The ANN then processes the frame. The results of the frame might then be fed into a tracking algorithm and further processed.

Event-driven systems may start at the sensor with an event camera. Each pixel sends updates asynchronously whenever a change crosses a threshold. So when there is movement in a scene that is otherwise stationary, the pixels that correspond to the movement send events or spikes immediately without waiting for a synchronization signal. The event signals can be sent within tens of microseconds, while a traditional camera might collect at 24 Hz and could introduce a latency that’s in the range of tens of milliseconds. In addition to receiving the information sooner, the information in the event-based system would be sparser and would focus on the movement. The traditional system would have to process the entire scene through each network layer successively.
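The thresholding behavior can be sketched in a few lines. This is only a simulation from frames, not how an event sensor works internally: a real event camera does this per pixel in analog circuitry and asynchronously. The function name, the log-intensity comparison, and the threshold value are assumptions made for illustration.

```python
import math


def frames_to_events(frames, timestamps, threshold=0.2):
    """Emit (t, x, y, polarity) whenever a pixel's log-intensity has moved by at
    least `threshold` since that pixel last fired.

    frames: list of 2D lists of positive intensities.
    """
    reference = [[math.log(v) for v in row] for row in frames[0]]
    events = []
    for frame, t in zip(frames[1:], timestamps[1:]):
        for y, row in enumerate(frame):
            for x, value in enumerate(row):
                delta = math.log(value) - reference[y][x]
                if abs(delta) >= threshold:
                    polarity = 1 if delta > 0 else -1
                    events.append((t, x, y, polarity))
                    reference[y][x] = math.log(value)  # reset this pixel only
    return events


if __name__ == "__main__":
    # A 2x2 scene where one pixel brightens and, later, another one darkens.
    frames = [
        [[1.0, 1.0], [1.0, 1.0]],
        [[1.0, 2.0], [1.0, 1.0]],
        [[1.0, 2.0], [1.0, 0.5]],
    ]
    print(frames_to_events(frames, [0.0, 0.01, 0.02]))
    # [(0.01, 1, 0, 1), (0.02, 1, 1, -1)]

    # Worst-case wait for the next frame of a 24 Hz camera, in milliseconds.
    print(round(1000 / 24, 1))  # 41.7
```

Only the two pixels that changed produce any output; the static pixels generate nothing to process.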

One of the major challenges of SNNs is training them. Backpropagation algorithms and stochastic gradient descent are the go-to solutions for training ANNs; however, these methods run into difficulty with SNNs. The best way to train SNNs is not yet established, and the following methods are some of the more common approaches:

One method of creating SNNs is to bypass training the SNNs directly and instead train ANNs, which are then converted. This approach limits the types of SNNs and hardware that can be used. For example, Sengupta et al. (2019) converted VGG and ResNets to SNNs using an integrate-and-fire (IF) neuron that has neither a leak nor a refractory period. They introduce a novel weight-normalization technique to perform the conversion, which involves setting the firing threshold of each neuron based on its pre-synaptic weights. Dr. Priyadarshini Panda goes into more detail in her ESWEEK 2021 SNN Talk.
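The reason conversion can work at all is that the firing rate of an IF neuron with no leak and no refractory period approximates a ReLU. The sketch below illustrates only that intuition; it is not the weight-normalization procedure of Sengupta et al., and the subtractive reset, the constant input, and the function name are assumptions of this example.

```python
def if_neuron_spike_count(input_current, threshold=1.0, n_steps=100):
    """Integrate-and-fire neuron with no leak and no refractory period, driven
    by a constant input. Uses a subtractive reset (an assumption of this sketch)."""
    membrane = 0.0
    spikes = 0
    for _ in range(n_steps):
        membrane += input_current
        if membrane >= threshold:
            spikes += 1
            membrane -= threshold
    return spikes


def relu(x):
    return max(0.0, x)


if __name__ == "__main__":
    for x in (-0.5, 0.25, 0.5, 2.0):
        rate = if_neuron_spike_count(x) / 100
        print(x, relu(x), rate)
    # Rates are 0.0, 0.25, 0.5 and 1.0: the rate follows ReLU up to the
    # threshold, then saturates at one spike per time step.
```

The saturation for inputs above the threshold is one reason the thresholds and weights have to be balanced during conversion.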

Advantages:

Disadvantages:

The most common methods currently used to train SNNs are backpropagation-like approaches. Standard backpropagation does not work to train SNNs because 1) the gradient of the spiking threshold function is zero everywhere except at the threshold, where it is undefined, and 2) the credit assignment problem needs to be solved in the temporal dimension in addition to the spatial dimension (or color, etc.).

In ANNs, the most common activation function is the ReLU. For SNNs, the neuron will fire if the membrane potential is above some threshold, otherwise, it will not fire. This is called a Heaviside function. You could use a sigmoid function instead, but then it would not be a spiking neural network. The solution of using surrogate gradients is to use the standard threshold function in the forward pass, but then use the derivative from a “smoothed” version of the Heaviside function, such as the sigmoid function, in the backward pass (Neftci et al. 2019, Bohte 2011).
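The surrogate gradient idea described above can be sketched for a single spiking unit as follows. This is a toy, not a training framework: the squared-error loss, the slope of the sigmoid, the learning rate, and all function names are assumptions chosen for illustration.

```python
import math


def heaviside(v, threshold=1.0):
    """Forward pass: hard threshold on the membrane potential."""
    return 1.0 if v >= threshold else 0.0


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def surrogate_grad(v, threshold=1.0, slope=5.0):
    """Backward pass: derivative of sigmoid(slope * (v - threshold)) w.r.t. v,
    used in place of the Heaviside derivative."""
    s = sigmoid(slope * (v - threshold))
    return slope * s * (1.0 - s)


def train_step(w, x, target, lr=0.1):
    """One gradient step on loss = 0.5 * (spike - target)**2 for one unit."""
    v = w * x                                           # membrane potential
    spike = heaviside(v)                                # forward: real spike
    grad_w = (spike - target) * surrogate_grad(v) * x   # backward: smoothed
    return w - lr * grad_w, spike


if __name__ == "__main__":
    w = 0.5  # too small to make the unit fire for x = 1.0
    for _ in range(50):
        w, spike = train_step(w, x=1.0, target=1.0)
    print(w >= 1.0, spike)  # True 1.0
```

With the true Heaviside derivative, `grad_w` would be zero at every step and `w` would never move; the smoothed derivative gives the weight a direction to follow until the unit starts firing.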

Advantages:

Disadvantages:

Spike-timing-dependent plasticity (STDP) is the most well-known form of synaptic plasticity. In most cases, STDP increases the strength of a synapse when a presynaptic (input) spike comes immediately before the postsynaptic spike. Early models have shown promise with STDP on simple unsupervised tasks, although getting it to work well for more complex models and tasks has proven more difficult.
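A common textbook way to write this down is a pair-based rule with exponential windows: the weight grows when the presynaptic spike precedes the postsynaptic spike and shrinks when the order is reversed. The sketch below uses that form; the amplitudes, time constants, and function name are assumed values for illustration, not parameters from any particular model.

```python
import math


def stdp_delta_w(t_pre, t_post, a_plus=0.01, a_minus=0.012,
                 tau_plus=20.0, tau_minus=20.0):
    """Pair-based STDP weight change for one pre/post spike pair (times in ms).

    Pre before post -> potentiation; post before pre -> depression.
    The closer the two spikes are in time, the larger the change.
    """
    dt = t_post - t_pre
    if dt > 0:
        return a_plus * math.exp(-dt / tau_plus)
    if dt < 0:
        return -a_minus * math.exp(dt / tau_minus)
    return 0.0


if __name__ == "__main__":
    print(stdp_delta_w(10.0, 15.0) > 0)  # True: pre just before post strengthens
    print(stdp_delta_w(15.0, 10.0) < 0)  # True: post before pre weakens
    print(stdp_delta_w(10.0, 15.0) > stdp_delta_w(10.0, 50.0))  # True
```

Note that the rule only needs the spike times of the two neurons at a synapse, which is what makes it local and unsupervised.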

Other biological learning mechanisms include the pruning and creation of both neurons and synapses, homeostatic plasticity, neuromodulators, astrocytes, and evolution. There is even some recent evidence that some primitive types of knowledge can be passed down by epigenetics.

Advantages:

Disadvantages:

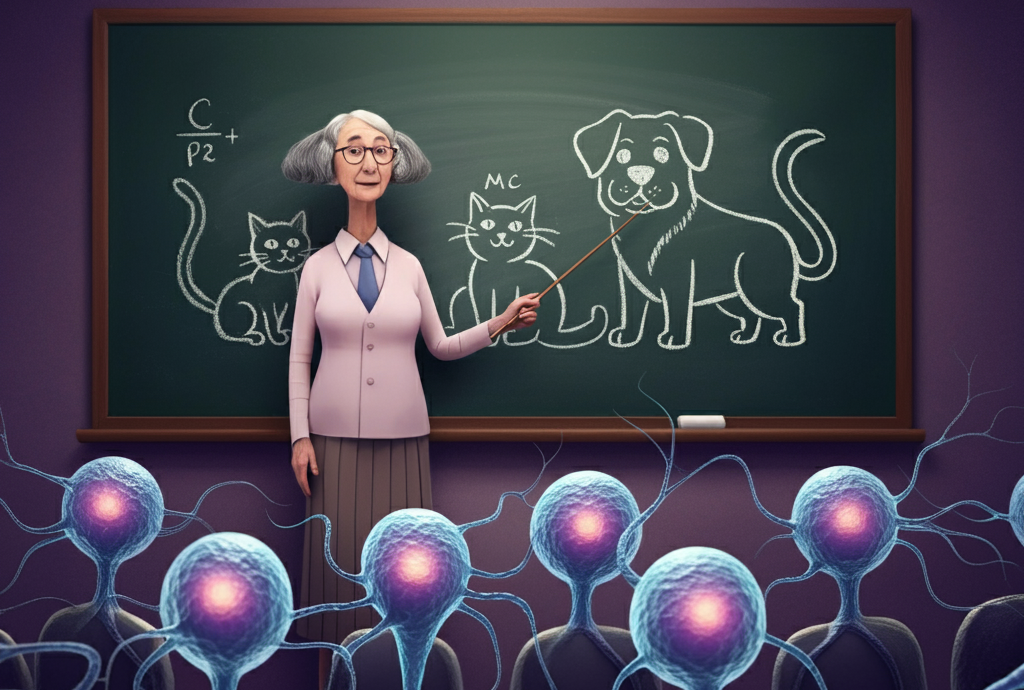

Evolutionary optimization is another approach that has some cool applications and works well with small networks. Dr. Catherine Schuman is a leading expert, and she gave a fascinating talk on neuromorphic computing to the ICS lab that is available on YouTube.
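As a rough illustration of why evolutionary methods suit small spiking networks, the sketch below evolves the two weights and the threshold of a single threshold unit so that it fires only for the logical AND of its inputs. The fitness is a count of correct outputs, so no gradient is needed. The task, the mutation scheme, and every name and parameter here are assumptions of this toy, not a method from the talk.

```python
import random

# Input pairs and the desired output (logical AND).
PATTERNS = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def fires(genome, inputs):
    """A single threshold unit: genome is [w1, w2, threshold]."""
    w1, w2, threshold = genome
    return 1 if w1 * inputs[0] + w2 * inputs[1] >= threshold else 0


def fitness(genome):
    """Number of patterns classified correctly (0 to 4); not differentiable."""
    return sum(fires(genome, x) == y for x, y in PATTERNS)


def evolve(generations=200, offspring=10, sigma=0.3, seed=0):
    """Keep one parent, mutate it with Gaussian noise, and replace it whenever
    the best child is at least as fit."""
    rng = random.Random(seed)
    parent = [rng.uniform(-1, 1) for _ in range(3)]
    for _ in range(generations):
        children = [[g + rng.gauss(0, sigma) for g in parent]
                    for _ in range(offspring)]
        best = max(children, key=fitness)
        if fitness(best) >= fitness(parent):
            parent = best
    return parent


if __name__ == "__main__":
    best = evolve()
    print(best, fitness(best))
```

The search space here has three parameters; the number of evaluations needed grows quickly with network size, which is consistent with the approach being used mostly on small networks.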

Advantages:

Disadvantages:

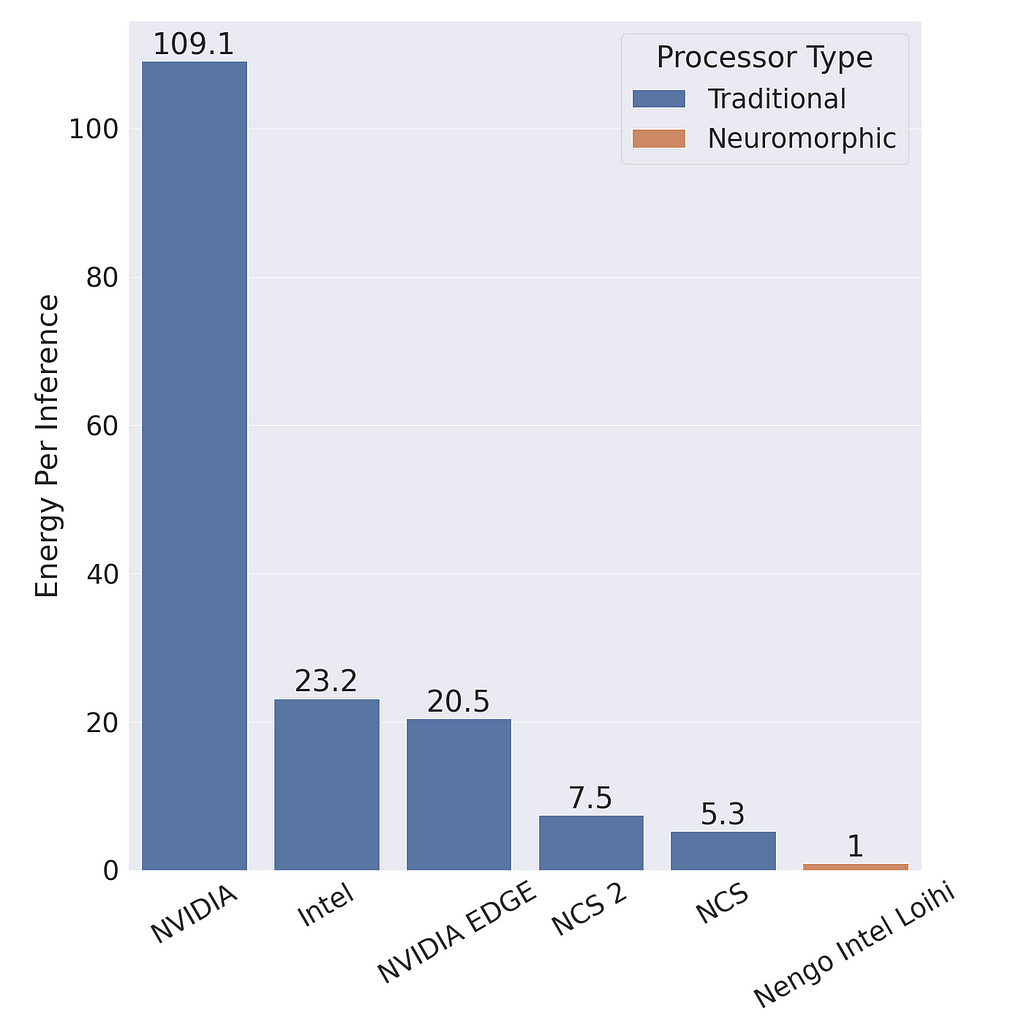

Neuromorphic systems have two main advantages: 1) energy efficiency and 2) low latency. There are a lot of reasons to be excited about the energy efficiency. For example, Intel claimed that their Loihi 2 Neural Processing Unit (NPU) can use 100 times less energy while being as much as 50 times faster than conventional ANNs. Chris Eliasmith compared the energy efficiency of an SNN on neuromorphic hardware with an ANN with the same architecture on standard hardware in a presentation available on YouTube. He found that the SNN is 100 times more energy efficient on Loihi compared to the ANN on a standard NVIDIA GPU and 20 times more efficient than the ANN on an NVIDIA Jetson GPU. It is 5–7 times more energy efficient than the Intel Neural Compute Stick (NCS) and NCS 2. At the same time the SNN achieves a 93.8% accuracy compared to the 92.7% accuracy of the ANN.

Neuromorphic chips are more energy efficient and allow complex deep learning models to be deployed on low-energy edge devices. In October 2024, BrainChip introduced the Akida Pico NPU, which uses less than 1 mW of power, and Intel’s Loihi 2 NPU uses 1 W. That’s a lot less power than NVIDIA Jetson modules, which are often used for embedded ANNs and use 10–50 watts, and server GPUs, which can use around 100 watts.

Comparing the energy efficiency of ANNs and SNNs is difficult because 1) energy efficiency is dependent on hardware, 2) SNNs and ANNs can use different architectures, and 3) they are suited to different problems. Additionally, the energy used by SNNs scales with the number of spikes and the number of time steps, so both need to be minimized to achieve the best energy efficiency.

Theoretical analysis is often used to estimate the energy needed by SNNs and ANNs; however, this doesn’t take into account all of the differences between the CPUs and GPUs used for ANNs and the neuromorphic chips used for SNNs.
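A typical back-of-the-envelope version of such an analysis charges an ANN one multiply-accumulate (MAC) per synaptic connection and an SNN one accumulate (AC) per connection per spike per time step. The sketch below follows that scheme. The per-operation energies are assumed values of the kind quoted for 45 nm CMOS in the SNN literature, and the function names are hypothetical; as noted above, this ignores memory access and most other hardware differences.

```python
E_MAC_PJ = 4.6  # assumed energy of one 32-bit multiply-accumulate, in picojoules
E_AC_PJ = 0.9   # assumed energy of one 32-bit accumulate, in picojoules


def ann_energy_pj(n_connections):
    """Every connection performs one MAC per inference."""
    return n_connections * E_MAC_PJ


def snn_energy_pj(n_connections, spike_rate, n_time_steps):
    """Every connection performs one AC per presynaptic spike.

    spike_rate: mean spikes per neuron per time step (between 0 and 1).
    """
    return n_connections * spike_rate * n_time_steps * E_AC_PJ


if __name__ == "__main__":
    n = 1_000_000
    print(ann_energy_pj(n))                 # about 4.6e6 pJ
    print(snn_energy_pj(n, 0.1, 4))         # about 3.6e5 pJ: sparse and short
    print(snn_energy_pj(n, 0.5, 16))        # about 7.2e6 pJ: worse than the ANN
    print(E_MAC_PJ / E_AC_PJ)               # break-even for spike_rate * n_time_steps
```

Under these assumptions the SNN only wins while the product of spike rate and time steps stays below roughly 5, which is why both the number of spikes and the number of time steps have to be kept small.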

Looking into nature can give us an idea of what might be possible in the future and Mike Davies provided a great anecdote in an Intel Architecture All Access YouTube video:

Consider the capabilities of a tiny cockatiel parrot brain, a two-gram brain running on about 50 mW of power. This brain enables the cockatiel to fly at speeds up to 20 mph, to navigate unknown environments while foraging for food, and even to learn to manipulate objects as tools and utter human words.

In current neural networks, there is a lot of wasted computation. For example, an image encoder takes the same amount of time encoding a blank page as a cluttered page in a “Where’s Waldo?” book. In spiking neural networks, very few units would activate on a blank page and very little computation would be used, while a page containing a lot of features would fire a lot more units and use a lot more computation. In real life, there are often regions in the visual field that contain more features and require more processing than other regions that contain fewer features, like a clear sky. In either case, SNNs only perform work when work needs to be performed, whereas ANNs depend on matrix multiplications that are difficult to use sparsely.

This in itself is exciting. A lot of deep learning currently involves uploading massive amounts of audio or video to the cloud, where the data is processed in massive data centers, spending a lot of energy on the computation and cooling the computational devices, and then the results are returned. With edge computing, you can have more secure and more responsive voice recognition or video recognition, that you can keep on your local device, with orders of magnitude less energy consumption.

When a pixel receptor of an event camera changes by some threshold, it can send an event or spike within microseconds. It doesn’t need to wait for a shutter or synchronization signal to be sent. This benefit is seen throughout the event-based architecture of SNNs. Units can send events immediately, rather than waiting for a synchronization signal. This makes neuromorphic computers much faster, in terms of latency, than ANNs. Hence, neuromorphic processing is better than ANNs for real-time applications that can benefit from low latency. This benefit is reduced if the problem allows for batching and you are measuring speed by throughput since ANNs can take advantage of batching more easily. However, in real-time processing, such as robotics or user interfacing, latency is more important.

One of the challenges is that neuromorphic computing and engineering are progressing at multiple levels at the same time. The details of the models depend on the hardware implementation, and empirical results with actualized models guide the development of the hardware. Intel discovered this with their Loihi 1 chips and built more flexibility into their Loihi 2 chips; however, there will always be tradeoffs, and there are still many advances to be made on both the hardware and software side.

Hopefully this will change soon, but commercial neuromorphic hardware is not widely available. BrainChip’s Akida was the first neuromorphic chip to be commercially available, although apparently it does not even support the standard leaky integrate-and-fire (LIF) neuron. SpiNNaker boards, which were part of the EU Human Brain Project, used to be for sale but are no longer available. Intel makes Loihi 2 chips available to some academic researchers via the Intel Neuromorphic Research Community (INRC) program.

Neuromorphic datasets are far fewer in number than traditional datasets, and each one can be much larger. Some of the common smaller computer vision datasets, such as MNIST (N-MNIST, Orchard et al. 2015) and CIFAR-10 (CIFAR10-DVS, Li et al. 2017), have been converted to event streams by displaying the images and recording them using event-based cameras. The images are collected with movement (or “saccades”) to increase the number of spikes for processing. With larger datasets, such as ES-ImageNet (Lin et al. 2021), simulation of event cameras has been used.

The datasets derived from static images might be useful for comparing SNNs with conventional ANNs and as part of the training or evaluation pipeline. However, SNNs are naturally temporal, and using them for static inputs does not make a lot of sense if you want to take advantage of SNNs’ temporal properties. Some of the datasets that take advantage of these properties of SNNs include:

Synthetic data can be generated from standard visible camera data without the need for expensive event camera data collections; however, such data won’t exhibit the high dynamic range and frame rate that event cameras would capture.

Tonic is an example Python library that makes it easy to access at least some of these event-based datasets. The datasets themselves can take up a lot more space than traditional datasets. For example, the MNIST training images take up around 10 MB, while the N-MNIST training data takes up almost 1 GB.

Another thing to take into account is that visualizing the datasets can be difficult. Even the datasets derived from static images can be difficult to match with the original input images. Also, the benefit of using real data is typically to avoid a gap between training and inference, so it would seem that the benefit of using these datasets would depend on their similarity to the cameras used during deployment or testing.

We are in an exciting time with neuromorphic computation, with both the investment in the hardware and the advancements in spiking neural networks. There are still challenges for adoption, but there are proven cases where neuromorphic systems are more energy efficient, especially compared to standard server GPUs, while having lower latency and similar accuracy to traditional ANNs. A lot of companies, including Intel, IBM, Qualcomm, Analog Devices, Rain AI, and BrainChip, have been investing in neuromorphic systems. BrainChip is the first company to make their neuromorphic chips commercially available, while both Intel and IBM are on the second generations of their research chips (Loihi 2 and NorthPole respectively). There also seems to have been a particular spike of successful spiking transformers and other deep spiking neural networks in the last couple of years, following the Spikformer paper (Zhou et al. 2022) and the SEW-ResNet paper (Fang et al. 2021).

Originally published at https://neural.vision on November 22, 2024.

Neuromorphic Computing — an Edgier, Greener AI was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.

Originally appeared here:

Neuromorphic Computing — an Edgier, Greener AI

Originally appeared here:

Best Black Friday gaming PC deals 2024: Live sales on prebuilt PCs, GPUs, monitors, and more